Public announcements in early April 2026 describe JALI Research showcasing real-time facial animation and automated lip sync as part of an interactive AI character activation at the HP booth during NAB Show 2026 at the Las Vegas Convention Center.

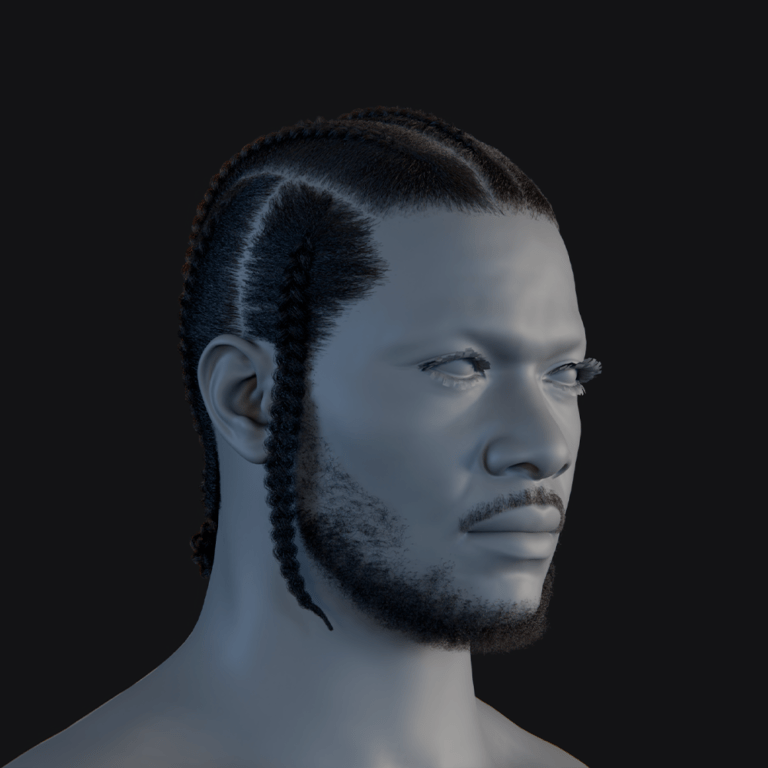

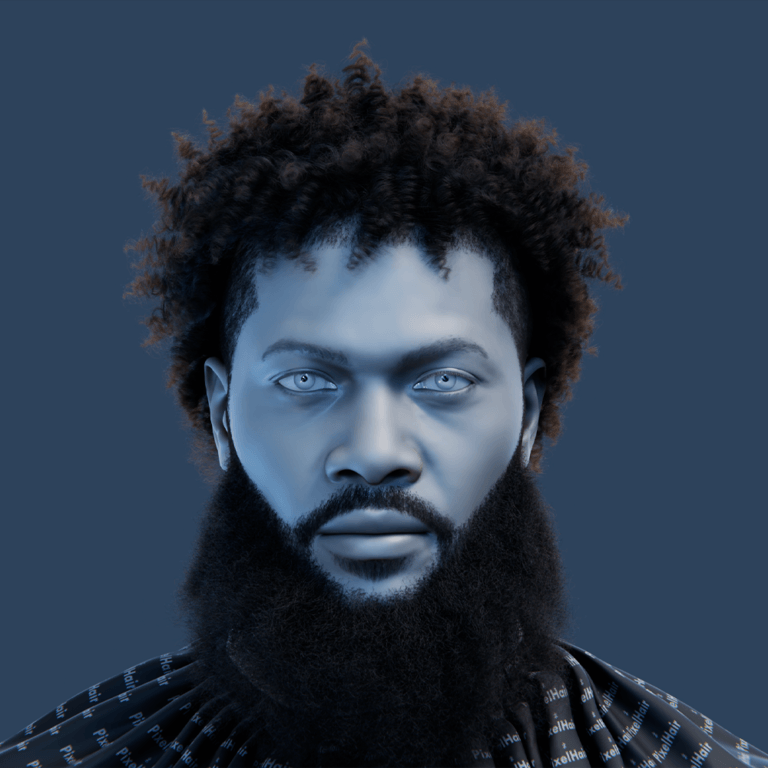

The centrepiece of the HP-booth activation is described as an interactive meet-and-greet with “Ruby,” a character developed by Immersive Enterprise Laboratories, with JALI driving facial performance (facial animation, lip sync, and an idle/performance layer) inside a connected, real-time showcase workflow.

Because the 2026 NAB Show dates are April 18–22 (with exhibits/show floor April 19–22), many “what was demoed” details available as of 14 April 2026 come from pre-show media alerts and coverage describing what will be shown on the booth floor, rather than post-show reporting.

What is JALI real-time facial animation and lip sync technology

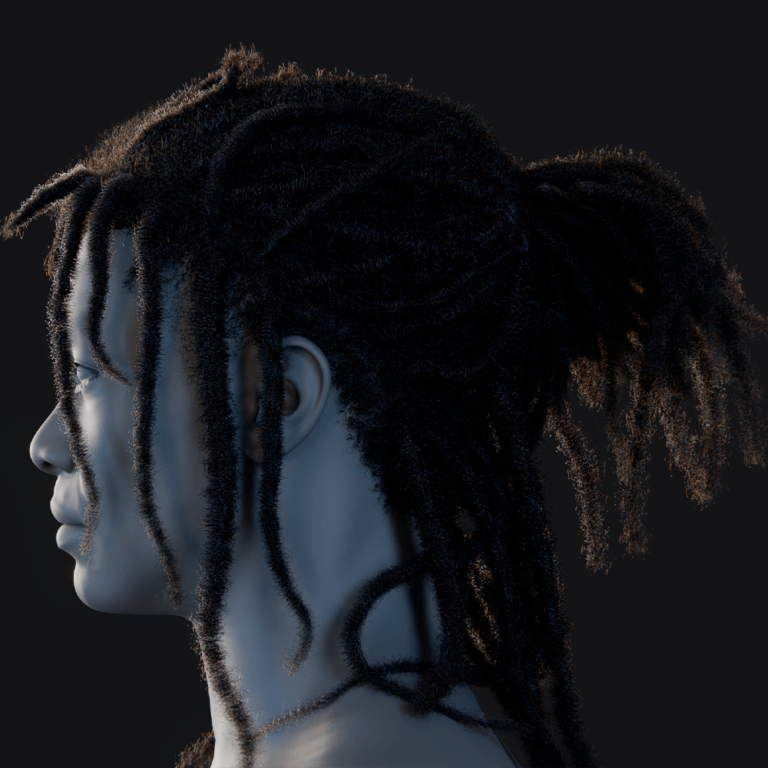

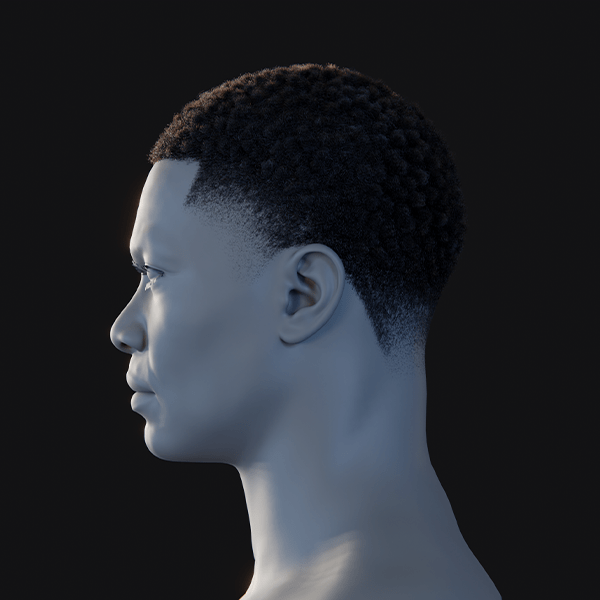

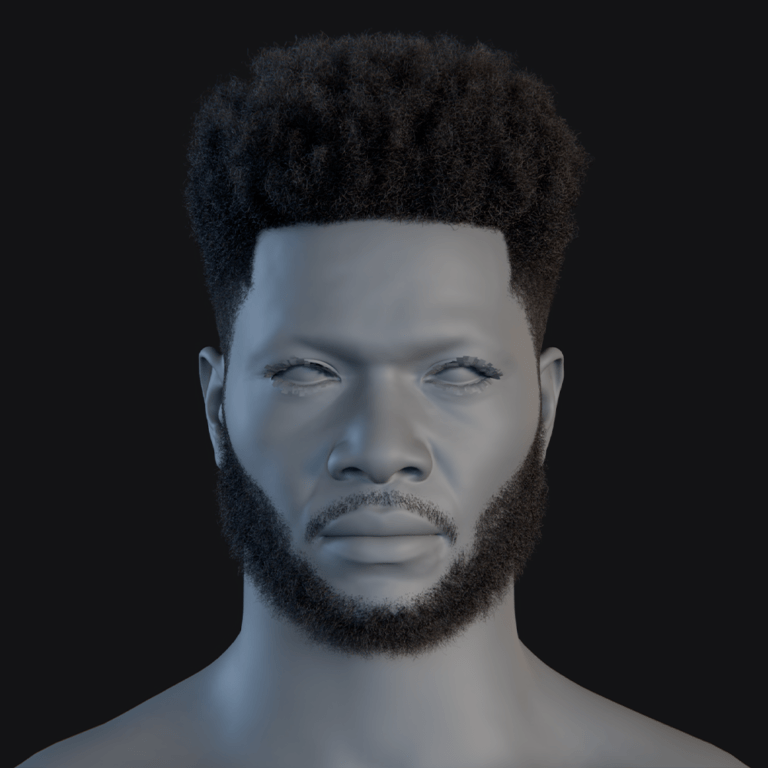

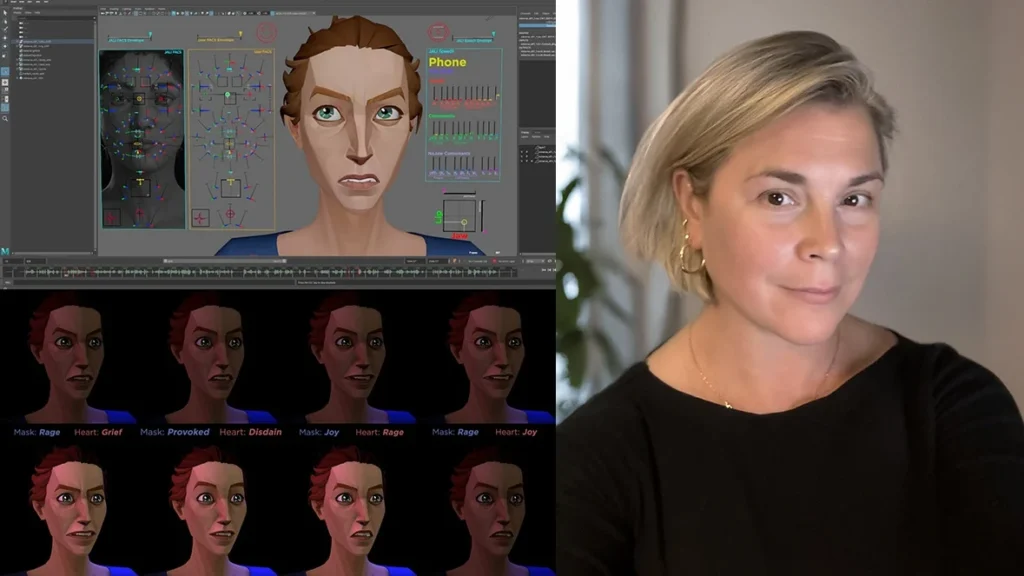

JALI positions its technology as an animator-oriented system for generating lip sync and facial performance quickly from speech, including pipelines that can work from audio plus text (or text-to-speech inputs). Its product descriptions emphasise language-specific lip synchronisation, editable animation curves, and additional facial performance controls (eyes, brows, asymmetry, head/neck motion) rather than mouth-only visemes.

In the research lineage behind the product, the original “JALI” approach is described as a system that takes an input audio soundtrack plus a transcript and automatically produces expressive lip-synchronised facial animation that remains editable for further artistic refinement—explicitly aiming for quality comparable to performance capture and professional animator output.

On the product side, JALI frames “real-time” not only as runtime responsiveness, but also as production practicality: rapid generation (“seconds”), batch automation via scripting, and pipeline integration (export and engine ingestion) as critical enablers for interactive characters and high-volume dialogue production.

Automated lip sync vs animator control: what makes JALI different

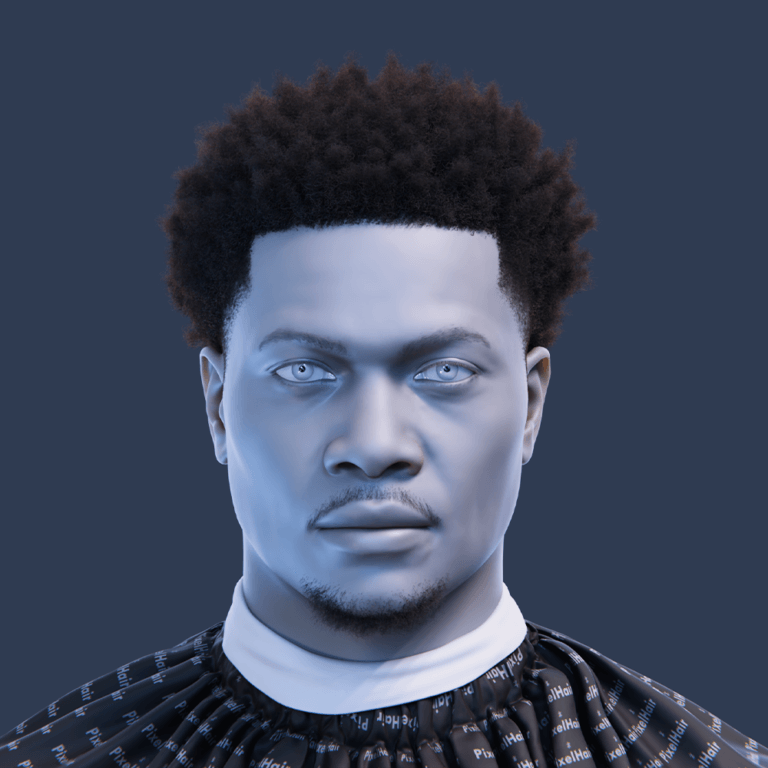

A recurring theme in both JALI’s research materials and product messaging is “animator-centric” automation: the system is intended to accelerate first-pass speech performance while keeping the result lightweight and editable in standard workflows.

In the SIGGRAPH 2016 technical paper, JALI’s procedural lip-synchronisation pipeline is presented as a multi-stage method (input alignment → animation/co-articulation → output curves) and explicitly describes producing sparse keyframes/curves designed to be plausible pre-edit and simple to refine.

In a later SIGGRAPH 2020 talk associated with large-scale game dialogue, the workflow is summarised as “largely automatic but remains under animator control,” using audio plus tagged text transcripts (including directorial tags) to manage speech, non-verbal “paralingual” motion (eyes/brows/neck), and emotion integration—an explicit statement of balancing automation with controllability.
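To make the idea of a tagged transcript concrete, here is a minimal Python sketch of separating spoken words from inline directorial tags. The square-bracket tag syntax, the tag names, and the `parse_tagged` function are assumptions invented for illustration; the source does not describe JALI's actual tag format.

```python
import re

# Hypothetical tag syntax: a whole token such as "[happy]" is a directorial tag.
TAG = re.compile(r"\[(\w+)\]")

def parse_tagged(transcript):
    """Split a tagged transcript into clean text and (word_index, tag) pairs.

    word_index is the position of the next spoken word after the tag,
    so a downstream stage can attach the tag to that word's timing.
    """
    words, tags = [], []
    for token in transcript.split():
        match = TAG.fullmatch(token)
        if match:
            tags.append((len(words), match.group(1)))
        else:
            words.append(token)
    return " ".join(words), tags

text, tags = parse_tagged("[happy] Welcome to the booth [brow_raise] really")
print(text)  # Welcome to the booth really
print(tags)  # [(0, 'happy'), (4, 'brow_raise')]
```

Keeping tags as word-indexed events, rather than baking them into the text, is one way the speech channel and the non-verbal channel could stay separately editable.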

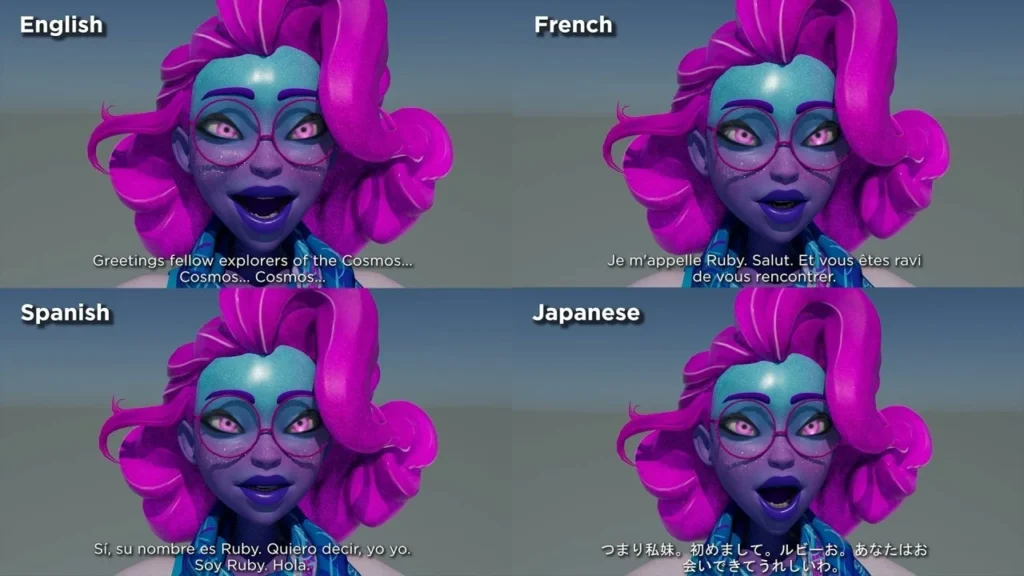

Multilingual facial animation and speech performance with JALI tools

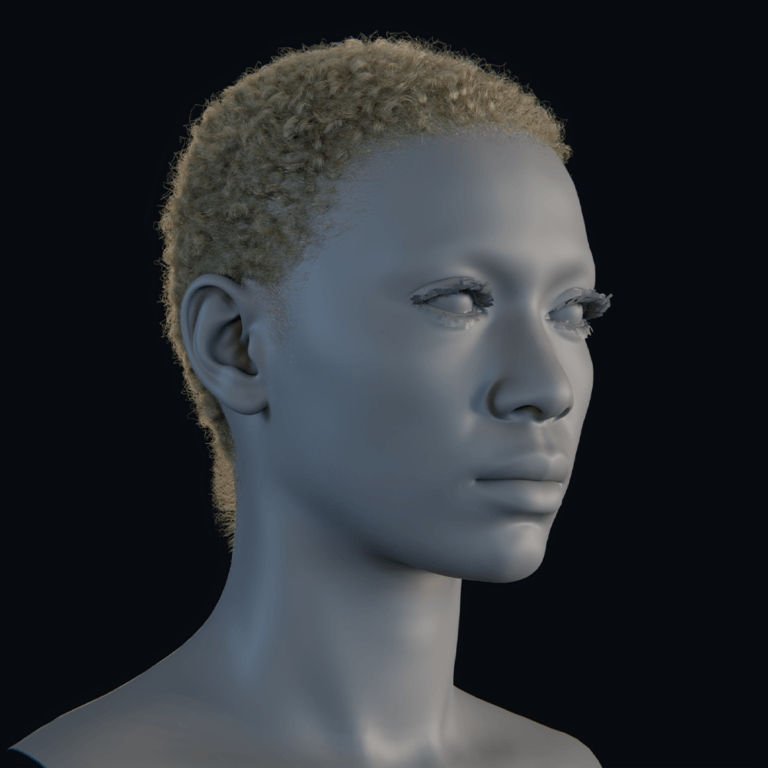

JALI’s current product “tech specs” list unique language models covering (at minimum) English, French, Russian, Polish, Portuguese (Brazil), Japanese, Mandarin, German, Italian, Spanish, Korean, and Dutch.

The SIGGRAPH 2020 talk on multilingual speech animation describes producing expressive speech animation “in ten languages,” including mixed-language sentences, and identifies a workflow combining audio performance + tagged transcripts + animator-centric editing; it also lists the ten languages in the described production context (English, Spanish, French, Polish, Russian, Italian, Brazilian Portuguese, Mandarin, Japanese, German).

This multilingual focus is also reflected in NAB 2026 promotional materials, which position JALI as delivering “multilingual lip sync at scale” while remaining artist-driven and production-ready—important context for why a trade-show interaction can feel believable across diverse attendee speech patterns and localisation requirements.

NAB Show 2026 JALI demo details at the HP booth in Las Vegas

JALI’s NAB 2026 media alert states it will “showcase its real-time facial animation and lip sync technology at NAB Show 2026” at the Las Vegas Convention Center, specifically “at the HP Booth (#N2561),” positioning the activation as an on-floor demonstration of interactive AI character performance within an IEL-developed connected production workflow.

Multiple third-party reprints/recaps (industry press) repeat the same core logistical claim: the JALI-powered interactive AI character performance is presented as part of the HP booth experience at NAB Show 2026 in Las Vegas, with booth number N2561 attached to the announcement.

JALI’s own events listing for NAB Show 2026 likewise directs attendees to “visit the HP booth” to see facial animation “in action” within IEL’s live animation production workflow and to experience “The Ruby Interactive Experience.”

Interactive AI character “Ruby” demo: what the announcements describe for NAB 2026

The activation described for the HP booth is “The Ruby Interactive Experience,” framed as a live, AI-driven interactive meet-and-greet where attendees converse with Ruby as JALI drives her facial animation, lip sync, and a body idle/performance layer intended to preserve character consistency across interactions.

Press materials characterise Ruby as a “transmedia character” developed by IEL, and describe the demo as intentionally story-forward: JALI’s toolset is presented as contributing to narrative consistency so that whether a moment is scripted or live, the character’s dialogue and facial performance remain coherent with her established personality and visual expression.

The same materials also explicitly acknowledge the “interactive AI” stack around Ruby: references are made to backend large language models (LLMs) and retrieval-augmented generation (RAG) models as components, while emphasising that facial animation and a dependable performance system are what make unscripted interactions feel intentionally directed rather than accidental.

Connected production workflow: how IEL and JALI powered the NAB showcase

The announced NAB 2026 activation is positioned as a “connected production workflow” where capture, creation, animation, broadcast, and audience interaction are integrated into one continuous system, rather than a linear sequence of handoffs. In that framing, JALI is described as providing a “3D performance backbone” beneath any AI-driven adjustments so the character remains consistent and controllable across both production and live real-time interaction.

Industry coverage quotes Daniel Urbach describing IEL’s role as designing an overall environment where “story, performance and interaction operate together in real time,” and positioning JALI’s role as enabling expressive facial animation that supports live character interaction.

Context for this workflow approach also appears in IEL’s broader public communications about live, integrated animation systems. A February 2026 overview of IEL’s “Science of Animation” documentary describes a workflow designed to avoid delayed feedback loops by allowing story, performance, and environment to evolve together, with Ruby used as a host character to guide visitors through a live pipeline experience—evidence that the “integrated workflow” framing is central to IEL’s public narrative, not just the NAB booth messaging.

JALI press release recap: interactive AI character experience at NAB 2026

The JALI media alert (10 April 2026) is the primary source for the announced NAB activation, stating: (1) the location (Las Vegas Convention Center), (2) the HP booth as presentation venue (with booth number N2561), and (3) the purpose: demonstrating real-time facial animation and lip sync powering interactive AI character performance within IEL’s connected workflow.

The release’s technical positioning is explicit: Ruby is presented as “built for Unreal Engine,” with JALI framing its role as the facial performance layer that supports both scripted and live interaction while integrating into a wider system that includes AI conversational components.

A key accuracy nuance: while the press materials consistently cite booth “#N2561” for the activation, at least one reproduced version of the release text contains a separate line that lists “#N2651” for media briefings (likely a typographical inconsistency). To avoid error, rely on #N2561, the booth reference that is consistent across the other sources.

Real-time engine integration and production pipelines

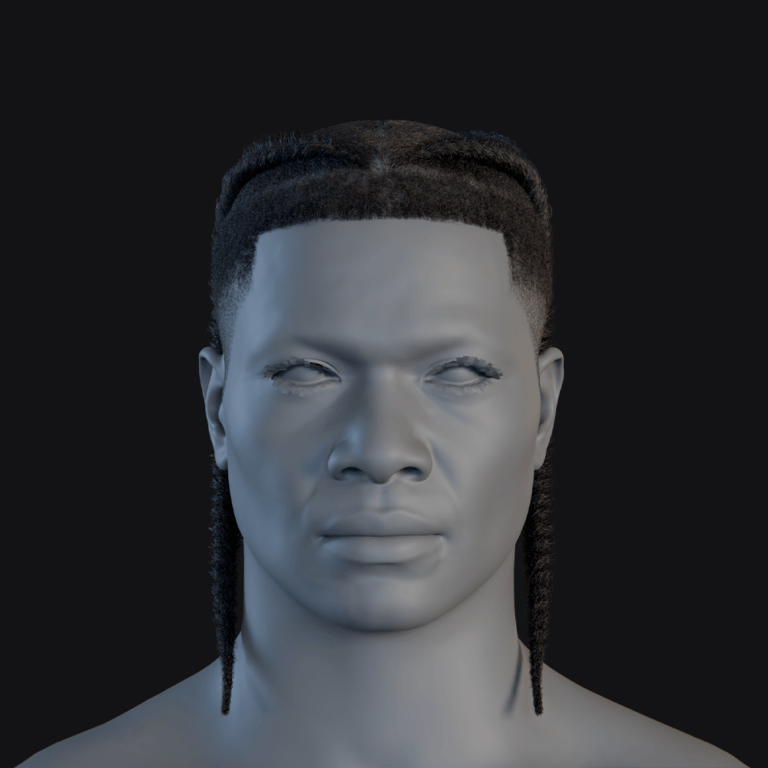

JALI’s published platform support includes plug-ins for Unreal Engine (listed as 5.0–5.6) and native plug-ins for supported Autodesk Maya versions (Windows), alongside command-line and C++ library integration options. Together these give studios multiple paths for embedding JALI-generated facial performance into real-time engines and production pipelines.

JALI’s site also states that animation authored in Maya can be integrated into Unreal Engine (or other engines) using the JALI command line interface, reinforcing a workflow where an animator- and rig-friendly authoring environment can feed a real-time runtime (engine) without a complete retooling of facial rigs and controls.

For the specific NAB 2026 activation, the materials state that Ruby’s interactive system is “built for Unreal Engine” and is the outcome of an ongoing R&D partnership between JALI and IEL—suggesting that the demo is not merely an offline animation playback, but a real-time character system embedded in an engine-driven interactive stack.

How real-time facial animation is used in virtual production pipelines

In widely used virtual production and real-time previs pipelines, facial performance data often needs to be available immediately inside the engine, whether driven by capture streams (e.g., Live Link-style data) or procedural/audio-driven systems. Unreal Engine documentation and explainers describe real-time facial performance capture tools and workflows that push facial animation data into the engine for immediate playback and iteration, reflecting how “real-time” reduces the delay between performance and on-screen result.

IEL’s public description of its integrated live pipeline approach aligns with this virtual production mindset: in the “Science of Animation” live experience (documented in February 2026), the workflow is described as a unified environment combining real-time worlds built in Unreal Engine with live performance capture and facial animation driven by speech/emotion—illustrating how engine-based pipelines increasingly treat facial performance as a live, iterative ingredient rather than a late-stage deliverable.

In this context, the NAB 2026 Ruby demo can be understood as a trade-show-scale expression of the same virtual production principle: the audience engages the character, the system responds conversationally, and the facial performance must remain coherent at interactive speeds while preserving animator-defined character identity and performance constraints.

Use cases for JALI facial animation in games, film, and real-time media

JALI’s press materials position the toolset as applicable across feature animation, AAA game production, and immersive media—framing the NAB activation as one demonstration point within a broader production reality: scalable dialogue, consistent character performance, and multi-language delivery are persistent constraints across these industries.

JALI’s product messaging also explicitly names real-time and interactive applications (hero characters and NPCs, digital assistants, VR training, interactive chatbots), tying the technology’s value to believable facial performance in both production and “in real time when using text-to-speech.”

In research and large-scale production contexts, JALI-associated workflows have been discussed as supporting multilingual speech animation at significant scale while remaining editable and animator-centric—an alignment with dialogue-heavy games and localisation-intensive content pipelines.

Real-time facial animation for conversational AI avatars and digital humans

Conversational digital humans typically require a full stack: intelligence (LLMs or similar), speech (ASR/TTS), and embodiment (timed facial animation, lip sync, eye behaviour, and expressive nuance). The NAB 2026 Ruby messaging explicitly references this multi-part system by naming LLM and RAG components while emphasising that a robust animation/performance layer is essential for believable unscripted interaction.
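The stack described above can be sketched as a single conversational turn in Python. Every function here is a hypothetical stand-in (no real ASR, LLM, TTS, or JALI API is called), and the stubs return made-up values so the sketch runs on its own.

```python
from dataclasses import dataclass

@dataclass
class Turn:
    user_text: str
    reply_text: str
    audio_seconds: float
    face_key_count: int

def run_turn(audio_in, transcribe, generate_reply, synthesize, animate):
    """One turn: intelligence, then speech, then embodiment."""
    user_text = transcribe(audio_in)             # speech recognition (ASR)
    reply_text = generate_reply(user_text)       # LLM, optionally with retrieval
    audio_out, seconds = synthesize(reply_text)  # text-to-speech
    face_keys = animate(audio_out, reply_text)   # audio + text -> facial keys
    return Turn(user_text, reply_text, seconds, len(face_keys))

# Stand-in stubs (assumptions) so the sketch runs without external services.
def fake_transcribe(audio):
    return "where is the booth"

def fake_reply(text):
    return "You found it"

def fake_tts(text):
    return b"\x00" * 16, 0.3 * len(text.split())

def fake_animate(audio, text):
    return [(i * 0.3, word) for i, word in enumerate(text.split())]

turn = run_turn(b"", fake_transcribe, fake_reply, fake_tts, fake_animate)
print(turn.reply_text, turn.face_key_count)
```

Passing each stage in as a callable keeps the animation layer independent of whichever language model or voice is used, which mirrors the separation between conversational backend and performance layer that the press materials describe. The `animate` step takes both audio and text, echoing the audio-plus-transcript input discussed earlier.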

Industry/platform narratives around “digital humans” similarly emphasise that intelligence alone is insufficient: realistic, dynamic facial animation and accurate lip sync are repeatedly positioned as core requirements for lifelike conversational avatars.

Within JALI’s own product framing, this is translated into practical authoring needs: quickly generated (but editable) facial performance that can operate at interactive speeds and remain consistent across contexts—exactly the problem space showcased by an on-floor, real-time meet-and-greet like Ruby.

Best practices for believable real-time facial animation on AI characters

Believability in interactive facial animation is usually constrained less by polygon count and more by timing, articulation rules, and the consistency of expressive intent. The practices below reflect the most repeated technical principles in the cited JALI research lineage and in broader real-time character pipeline guidance.

Accurate phonetic timing remains foundational. The SIGGRAPH 2016 JALI paper describes a pipeline where transcript + audio alignment feeds phoneme timing, which then drives viseme selection and curve generation—highlighting why transcription/phonetic alignment quality directly affects downstream realism.
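As a rough illustration of why alignment quality matters, the following Python sketch turns time-aligned phonemes into viseme keys. The phoneme-to-viseme table, the viseme names, and the timings are all simplified assumptions for illustration; this is not JALI's viseme model.

```python
from dataclasses import dataclass

# Hypothetical, heavily simplified lookup table (ARPAbet-style labels).
PHONEME_TO_VISEME = {
    "HH": "open", "AH": "open", "EH": "open",
    "L": "tongue_up",
    "OW": "round", "UW": "round", "W": "round",
    "M": "closed", "B": "closed", "P": "closed",
    "F": "lip_teeth", "V": "lip_teeth",
}

@dataclass
class AlignedPhoneme:
    symbol: str
    start: float  # seconds
    end: float    # seconds

@dataclass
class VisemeKey:
    viseme: str
    time: float    # seconds, placed at the phoneme midpoint
    weight: float  # activation in the range 0..1

def phonemes_to_keys(phonemes):
    """Place one full-weight key per phoneme at its temporal midpoint."""
    keys = []
    for p in phonemes:
        viseme = PHONEME_TO_VISEME.get(p.symbol, "neutral")
        keys.append(VisemeKey(viseme, round((p.start + p.end) / 2, 3), 1.0))
    return keys

# "hello" with made-up timings, as a forced aligner might report them.
aligned = [
    AlignedPhoneme("HH", 0.00, 0.08),
    AlignedPhoneme("AH", 0.08, 0.20),
    AlignedPhoneme("L", 0.20, 0.30),
    AlignedPhoneme("OW", 0.30, 0.50),
]
keys = phonemes_to_keys(aligned)
for key in keys:
    print(key)
```

Because every key time is derived from the aligner's boundaries, an alignment error of a few tens of milliseconds shifts the mouth shape by the same amount, which is why timing errors show up directly on screen.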

Co-articulation must be treated as a first-class problem, not an afterthought. The same paper details why naive “one phoneme → one viseme” mapping produces unrealistic results and describes the need for co-articulation rules so neighbouring sounds blend in a way that matches how speech is physically produced and perceived.
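One generic way to move beyond step-like "one phoneme, one viseme" output is to let neighbouring shapes overlap in time. The Python sketch below uses simple trapezoid envelopes and normalisation; it is a textbook-style blending assumption, not the co-articulation rule set from the JALI paper, and the `ramp` value and viseme names are hypothetical.

```python
def envelope(t, start, end, ramp=0.06):
    """Trapezoid activation: ramps in before `start` and out after `end`."""
    if t <= start - ramp or t >= end + ramp:
        return 0.0
    if t < start:
        return (t - (start - ramp)) / ramp
    if t > end:
        return ((end + ramp) - t) / ramp
    return 1.0

def blended_weights(t, segments, ramp=0.06):
    """segments: list of (viseme, start, end). Returns normalised weights at t."""
    raw = {}
    for viseme, start, end in segments:
        weight = envelope(t, start, end, ramp)
        if weight > 0.0:
            raw[viseme] = max(raw.get(viseme, 0.0), weight)
    total = sum(raw.values())
    return {v: w / total for v, w in raw.items()} if total else {}

# A lip closure followed by a rounded vowel (made-up timings).
segments = [("closed", 0.00, 0.10), ("round", 0.10, 0.30)]
at_boundary = blended_weights(0.10, segments)
mid_vowel = blended_weights(0.20, segments)
print(at_boundary)  # {'closed': 0.5, 'round': 0.5}
print(mid_vowel)    # {'round': 1.0}
```

Plain blending like this has a known weakness: at the boundary the lip closure is only half applied, whereas sounds such as M, B, and P need the lips fully closed. That gap is the kind of case that dedicated co-articulation rules exist to handle.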

Design for editability, because interactive doesn’t mean uncontrolled. JALI’s published goal is automation that remains artist-editable (sparse curves; animator-centric workflow), and its later multilingual workflow summary again stresses “largely automatic but remains under animator control”—a principle that applies directly to interactive characters, where live output still needs guardrails.

Add “paralinguals” (eyes, brows, blinks, neck) to avoid a mouth-only puppet effect. The SIGGRAPH 2020 talk abstract and figures describe augmenting speech curves with eye/brow/neck motion and integrating emotional content without breaking articulation constraints—supporting the idea that believable conversation requires whole-face behaviour.

Treat emotion as layered and constrained, not a global override. The SIGGRAPH 2020 summary describes integrating a character’s emotional and idling repertoire while ensuring emotion overlays do not conflict with precise mouth shapes needed for specific phonemes (e.g., smiles not overriding puckers).
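The "smiles not overriding puckers" constraint can be illustrated with a small Python sketch in which the active mouth shape limits how much smile is allowed on a given frame. The viseme names and suppression values are hypothetical numbers chosen for illustration, not values from the SIGGRAPH 2020 talk.

```python
# Hypothetical: how strongly each articulation-critical viseme suppresses a smile.
SMILE_SUPPRESSION = {"round": 0.8, "closed": 0.5}

def allowed_smile(viseme_weights, smile_amount):
    """Scale the requested smile down while a conflicting mouth shape is active."""
    suppression = 0.0
    for viseme, weight in viseme_weights.items():
        suppression = max(suppression, SMILE_SUPPRESSION.get(viseme, 0.0) * weight)
    return smile_amount * (1.0 - suppression)

print(allowed_smile({"open": 1.0}, 0.6))   # no conflict, smile passes through
print(allowed_smile({"round": 1.0}, 1.0))  # pucker active, smile mostly suppressed
```

Treating emotion as a layer that is scaled per frame, rather than a global override, lets the smile return as soon as the conflicting mouth shape fades.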

Prioritise speech–face synchrony and natural timing, because humans detect mismatches quickly. Industry discussions of expressive avatars repeatedly emphasise that small timing errors between speech and facial movement break trust and draw attention to the technology rather than the character.

Build an intentional idle/performance baseline so the character is never “dead” between responses. The NAB Ruby activation description explicitly includes a “body idle performance system,” consistent with a broader best practice: interactive characters must remain alive during listening, thinking, and turn-taking, not only while speaking.
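A simple way to guarantee the character is never left without motion is a small state machine in which every conversational state maps to an animation layer. The Python sketch below is a generic illustration; the state names, event names, and layer descriptions are assumptions and do not describe the system used for Ruby.

```python
from enum import Enum

class State(Enum):
    IDLE = "idle"
    LISTENING = "listening"
    THINKING = "thinking"
    SPEAKING = "speaking"

# Hypothetical events emitted by the conversational stack.
TRANSITIONS = {
    (State.IDLE, "user_speech_start"): State.LISTENING,
    (State.LISTENING, "user_speech_end"): State.THINKING,
    (State.THINKING, "reply_audio_ready"): State.SPEAKING,
    (State.SPEAKING, "reply_audio_done"): State.IDLE,
}

# Every state has a baseline layer, so no state means "no motion".
BASELINE_LAYER = {
    State.IDLE: "breathing, blinks, weight shifts",
    State.LISTENING: "eye contact, small nods",
    State.THINKING: "gaze aversion, brow movement",
    State.SPEAKING: "lip sync plus speech-driven brows and head motion",
}

def step(state, event):
    """Advance on a known event; ignore events that do not apply."""
    return TRANSITIONS.get((state, event), state)

state = State.IDLE
visited = [state]
for event in ["user_speech_start", "user_speech_end",
              "reply_audio_ready", "reply_audio_done"]:
    state = step(state, event)
    visited.append(state)

for s in visited:
    print(s.value, "->", BASELINE_LAYER[s])
```

Ignoring unexpected events, instead of raising an error, keeps the character in a valid animated state even if the conversational backend sends events out of order.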

NAB Show 2026 dates and show floor schedule for character tech demos

NAB Show 2026 is listed as April 18–22, 2026, at the Las Vegas Convention Center, spanning Central, West, and North halls; official pages also specify that exhibits/show floor run April 19–22.

Official “Plan Your Show” information lists show floor hours as: Sunday, April 19 (10:00–18:00), Monday, April 20 (09:00–18:00), Tuesday, April 21 (09:00–18:00), and Wednesday, April 22 (09:00–14:00).

Because character technology demos are typically experienced on the show floor (booth activations) rather than in conference-only spaces, these exhibit hours are the most reliable public schedule frame for when on-booth interactive character demos can be visited.

Where to find JALI at NAB Show 2026: location, booth, and demo times

Public-facing announcements place the JALI-powered Ruby activation at the HP booth, repeatedly cited as booth #N2561 at the Las Vegas Convention Center.

JALI’s own event listing for NAB Show 2026 frames attendance in terms of meeting its CEO and seeing facial animation “in action” within IEL’s live animation production workflow at the HP booth, specifically naming “The Ruby Interactive Experience” as a featured project to check out on-site.

No official, public timetable for specific Ruby “demo slots” is provided in the cited announcements; instead, the activation is described as an on-booth experience during NAB, implying availability during show floor hours, subject to booth capacity and staffing.

What’s next after NAB 2026 for real-time facial animation and AI avatars

The Ruby demo messaging points to a near-term trajectory where interactive characters converge on a unified production-and-runtime model: a single character system must support authored narrative performance, live interaction, and continuity of expression across “scripted or live” contexts.

From a tooling perspective, the broader ecosystem trend is toward increasingly accessible audio-to-face and real-time facial animation technologies, including vendors explicitly positioning real-time facial animation as a requirement for conversational 3D avatars and digital humans.

JALI’s own published tech direction (as of its current tech specs and product messaging) suggests incremental expansion along three axes that align with this trend: deeper engine integration (current Unreal Engine plug-in support), expanded language models (current list of unique language models), and scalable pipeline automation (batching/scripting/CLI integration).

Finally, the research framing behind animator-centric automation emphasises that future performance systems must balance scale with editability and must incorporate paralingual and emotional layers without compromising articulation—precisely the “believable conversation” constraint that interactive booth demos like Ruby make visible to a broad industry audience.

Frequently Asked Questions (FAQs)

- What exactly was JALI showing at NAB Show 2026?

JALI’s public announcement states it is showcasing real-time facial animation and automated lip sync, demonstrated through an interactive AI character performance (Ruby) at the HP booth during NAB Show 2026.

- Who created the interactive AI character Ruby?

Ruby is described as a transmedia character developed by Immersive Enterprise Laboratories (IEL).

- What is “The Ruby Interactive Experience”?

It is described as a live, AI-driven interactive meet-and-greet at the HP booth where attendees can engage with Ruby while JALI powers her facial animation, lip sync, and idle/performance behaviour.

- Is the Ruby demo described as scripted content or live interaction?

The materials describe both modes: they frame Ruby as supporting “scripted or live” performance, and explicitly position the NAB activation as a live interactive experience.

- Does the demo involve large language models (LLMs) or RAG?

The press materials explicitly reference backend LLMs and custom RAG models as part of the overall interactive digital character system, while emphasising the importance of robust animation and rigs for believable outcomes.

- How does JALI claim to differ from “black box” lip sync tools?

JALI’s research and product messaging repeatedly describe an animator-centric approach where automation generates controllable, editable motion (sparse curves; refinement-friendly output) rather than an output that is difficult to art-direct.

- What languages does JALI support for multilingual facial animation?

JALI’s published tech specs list unique language models including English, French, Russian, Polish, Portuguese (Brazil), Japanese, Mandarin, German, Italian, Spanish, Korean, and Dutch.

- Where was the JALI / Ruby demo located on the show floor?

The demo is consistently described as being at the HP booth, cited as booth #N2561, at the Las Vegas Convention Center during NAB Show 2026.

- What are the official NAB Show 2026 show floor dates and hours?

Official NAB pages list the show as April 18–22, 2026, with exhibits/show floor April 19–22 and show floor hours of 10:00–18:00 (Sun), 09:00–18:00 (Mon–Tue), and 09:00–14:00 (Wed).

- Was a precise demo timetable for Ruby published in the announcements?

The cited announcements describe the activation as an on-booth experience but do not provide specific session time slots; the most reliable public timing frame is the official show floor hours.

Conclusion

The NAB Show 2026 HP booth activation described in April 2026 press materials centres on a clear proposition: interactive AI characters become compelling when the conversational stack (LLMs/RAG/TTS) is paired with a production-grade, controllable facial performance system that preserves character continuity during unscripted interaction.

Across JALI’s published technical foundations and current product specifications, the demo’s emphasis on real-time, multilingual, animator-centric performance aligns with a broader industry shift toward integrated, engine-based, continuously iterative character pipelines—precisely the kind of workflow trade shows like NAB surface to a wide professional audience.

Sources and Citations

- JALI Research: Official site (platform support, tools, tech overview)

https://jali.co

- SIGGRAPH 2016 paper: “JALI: An Animator-Centric Viseme Model for Expressive Lip Synchronization”

https://dl.acm.org/doi/10.1145/2947688.2947692

- SIGGRAPH 2020 talk: “JALI-Driven Expressive Facial Animation and Multilingual Speech in Cyberpunk 2077”

https://www.youtube.com/watch?v=0b3c1JALI (SIGGRAPH official talk listing / archived session reference)

- Unreal Engine (Epic Games): Virtual production and real-time animation tools overview

https://www.unrealengine.com/en-US/solutions/virtual-production

- NVIDIA: Audio2Face and digital human animation technology

https://www.nvidia.com/en-us/omniverse/apps/audio2face/

- NAB Show (official): About NAB Show (venue, dates, general info)

https://www.nabshow.com/about/

- NAB Show (official): Plan Your Show (floor hours and event logistics)

https://www.nabshow.com/plan-your-show/

- 80 Level: Industry coverage of real-time animation and tech demos (JALI/NAB-related reporting hub)

https://80.lv

- Animation Magazine: Industry coverage of animation tech and NAB-related showcases

https://www.animationmagazine.net

- Creative COW: NAB exhibition and production technology reporting

https://www.creativecow.net
