AI in game development: what it means in 2026

“AI in game development” has evolved to mean more than traditional NPC AI logic – in 2026 it encompasses machine learning and generative AI tools integrated into game creation workflows. Developers are using AI to generate content, assist coding, automate testing, and even drive dynamic player experiences. Over 4,000 games released in 2025 on Steam were tagged for AI content, and experts predict roughly one in three new games in 2026 will disclose some use of AI.

This ranges from using AI offline to speed up asset production to deploying AI live in-game for things like procedural dialogue. While classical game AI (like enemy pathfinding) remains, the term now often refers to generative AI assistance throughout development – a shift accelerated by breakthroughs in language models and image generation. In short, AI has become a co-pilot for developers, promising faster workflows and new possibilities, but also introducing creative and ethical challenges that the industry is grappling with in 2026.

Wins of AI in game development: where it speeds up production

AI’s big “wins” in game development come from automating labor-intensive tasks and sparking creativity. Artists and designers can use generative AI to quickly produce concept art, textures, and 3D models, cutting what used to take days down to hours. For example, Ubisoft’s R&D has shown that an AI can clean up a raw motion-capture animation in minutes instead of the four hours a human animator would need. AI code assistants handle routine programming chores – generating boilerplate code, suggesting fixes – allowing engineers to focus on harder logic. In narrative design, AI can draft hundreds of NPC one-liners (“barks”) in a fraction of the time, as seen with Ghostwriter, Ubisoft’s in-house tool that generates first-draft NPC dialogue to save writers time.

Importantly, these tools are positioned as aids, not replacements: Ghostwriter frees up writers for higher-level storytelling while it cranks out variations of incidental lines. AI-driven testing bots are another production win – studios use ML agents to play through levels autonomously, catching bugs or balance issues faster than an army of human QA testers. Ubisoft’s La Forge division notes that using AI for monotonous tasks like build testing, bug prediction, and balance tuning opens developers’ time for creative work. In summary, AI speeds up production wherever repetitive or data-heavy work exists: it can crank out volume and iterate rapidly, augmenting human creators by handling the grind work and offering an idea machine to prototype art, code or design options instantly.

Losses of AI in game development: where quality and trust break down

Not every application of AI has been a victory – there have been notable “losses” where relying on AI led to quality issues and eroded trust. Consistency and quality control are major concerns. Generative AI output can be hit-or-miss; it might produce 100 character portraits, but with inconsistent styles or strange artifacts that artists must fix.

Over-reliance on AI-generated assets without proper oversight can result in a game feeling visually or tonally disjointed. Players and press have also caught instances of AI content that slipped through with mistakes – e.g. odd AI-generated art in a game’s scenes – which can harm a studio’s reputation. Another loss area is trust with the audience and creators: if players discover a game’s assets or writing were AI-made, some feel “cheated” or that the studio cut corners, unless quality is indistinguishable from human work.

High-profile missteps in 2025 underscored this: Activision faced backlash for reportedly using AI-generated art in a Call of Duty concept, fueling player criticism that the franchise was becoming a “slop factory”. Likewise, a game that used AI voice models for characters (e.g. the shooter Arc Raiders using AI-generated voices) was met with clear disapproval from gamers and even its own community. In these cases, the loss of authenticity and fear of job displacement provoked negative reactions.

Internally, developers note that AI can generate lots of content quickly, but review and editing eats up time – potentially nullifying the supposed efficiency gains. If an AI churns out buggy code or lore text that contradicts the game world, humans must intervene. This can create a false economy: initial speed followed by lengthy clean-up, which is a “loss” compared to just doing it right once.

Finally, ethical and legal pitfalls count as losses. If an AI is trained on unlicensed art or code, the studio risks copyright infringement lawsuits or takedowns – a very concrete loss. These downsides illustrate that without careful use, AI can degrade quality and trust, making it a double-edged sword. Winning back that trust often requires transparency and a human touch to ensure the AI’s contributions meet the studio’s standards.

Generative AI for game art and assets: benefits, risks, and quality control

Generative AI tools like Midjourney and DALL·E allow for the rapid creation of concept art, textures, UI elements, and 3D blueprints, accelerating the creative process for studios of all sizes. While these tools help automate minor assets and provide quick variations, they carry risks regarding originality and copyright. Legal protections for AI-generated works remain uncertain, as the U.S. Copyright Office requires meaningful human authorship for registration. Additionally, maintaining style consistency across assets is difficult, leading studios to implement “human-in-the-loop” strategies with strict style guides. Ethical concerns also exist regarding the use of unlicensed training data. Ultimately, AI offers speed but requires human oversight and quality control to ensure assets meet project standards and legal requirements.

AI for game programming: code generation, debugging, and refactoring

AI assistants like GitHub Copilot and GPT-4-based tools are used to generate snippets, scripts, and simple systems from natural language prompts, aiding in prototyping and handling boilerplate code. These tools also assist with debugging and refactoring, suggesting optimizations and explaining errors, which helps developers learn best practices. However, AI can “hallucinate” incorrect code, necessitating thorough human review and testing. The programmer’s role is shifting toward that of an editor and architect who must guide the AI and verify its output, including checking that suggestions do not reproduce licensed code. When managed through code reviews and QA, AI handles repetitive “grunt work,” allowing developers to focus on complex systems and game polish.

AI for NPC dialogue and narrative design: opportunities and pitfalls

Generative AI and Large Language Models (LLMs) offer the potential for dynamic NPC conversations and vast amounts of ambient dialogue. Tools like Ubisoft’s Ghostwriter assist by generating drafts for minor NPC “barks,” allowing writers to focus on the main story. Advanced prototypes even allow for unscripted, fluid interactions with characters. Despite this, unrestrained AI can break narrative tone or produce lore-inconsistent responses. Challenges include the need for heavy guardrails to prevent toxic or off-script content, as well as correcting biases found in training data. Current best practices involve a hybrid approach where writers curate and approve AI-generated content to ensure narrative authenticity and immersion while limiting AI to side content to avoid unpredictability in major story beats.
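The curation step described above can be partly automated before a writer ever sees the drafts. The following is a minimal sketch, not how Ghostwriter or any studio tool works: the function name `filter_barks`, the banned-term list, and the word limit are all hypothetical, and the candidate lines stand in for whatever a language model would return.

```python
import re

def filter_barks(candidates, banned_terms, max_words=12):
    """Split AI-drafted NPC barks into lines worth a writer's time and rejects.

    banned_terms is a set of lowercase words that would break the setting
    (hypothetical examples: modern technology in a fantasy world).
    """
    approved, rejected, seen = [], [], set()
    for line in candidates:
        text = " ".join(line.split())                  # collapse stray whitespace
        words = re.findall(r"[a-z0-9']+", text.lower())
        key = " ".join(words)                          # punctuation-insensitive form
        if not words:
            rejected.append((text, "empty"))
        elif len(words) > max_words:
            rejected.append((text, "too long"))
        elif banned_terms & set(words):
            rejected.append((text, "banned term"))
        elif key in seen:
            rejected.append((text, "duplicate"))
        else:
            seen.add(key)
            approved.append(text)
    return approved, rejected


drafts = [
    "Lovely weather for a patrol.",
    "lovely weather for a patrol",
    "Did you see that on my smartphone?",
    "   ",
    "I have been standing at this gate since before the old king took the throne and nobody thanks me.",
]
approved, rejected = filter_barks(drafts, banned_terms={"smartphone", "internet"})
```

A filter like this only removes the obvious failures. Tone, lore accuracy, and whether a line is actually worth hearing still need a writer's approval.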

AI for procedural level design and content generation: what actually works

Procedural generation is evolving from rule-based algorithms to machine learning models that produce nuanced game levels and layouts. In 2026, the most effective approach is AI-assisted design, where generative models like GANs provide designers with numerous layout options to overcome creative blocks.

AI excels at creating modular content, such as puzzle rooms or terrain chunks, which humans then assemble and refine. While AI can populate scenery and distribute loot based on high-level constraints, fully automated generation often lacks the intentional pacing and “fun factor” of human design. Additionally, AI is used for procedural content balancing and generating minor narrative elements like fetch quests. Ultimately, successful levels result from a collaboration where AI handles data-heavy detailing while humans ensure coherence and quality.
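The “generate many options, let a human pick” pattern can be sketched in a few lines. This is an illustration under loud assumptions: `generate_layout` uses plain random noise as a stand-in for a trained generative model, and the constraint (a solvable room whose shortest route has a minimum length) is a hypothetical example of a high-level design rule.

```python
import random
from collections import deque

def generate_layout(width, height, wall_chance, rng):
    """Stand-in generator: random walls, with the start and exit corners kept open."""
    grid = [["#" if rng.random() < wall_chance else "." for _ in range(width)]
            for _ in range(height)]
    grid[0][0] = "."
    grid[height - 1][width - 1] = "."
    return grid

def path_length(grid):
    """Breadth-first search from top-left to bottom-right; None if unreachable."""
    height, width = len(grid), len(grid[0])
    goal = (height - 1, width - 1)
    queue = deque([((0, 0), 0)])
    seen = {(0, 0)}
    while queue:
        (r, c), dist = queue.popleft()
        if (r, c) == goal:
            return dist
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < height and 0 <= nc < width
                    and grid[nr][nc] == "." and (nr, nc) not in seen):
                seen.add((nr, nc))
                queue.append(((nr, nc), dist + 1))
    return None

def shortlist(candidates, min_path):
    """Keep solvable layouts whose shortest route is at least min_path steps."""
    kept = []
    for grid in candidates:
        length = path_length(grid)
        if length is not None and length >= min_path:
            kept.append((length, grid))
    kept.sort(key=lambda pair: pair[0], reverse=True)  # longest routes first
    return kept


rng = random.Random(7)
candidates = [generate_layout(8, 6, 0.3, rng) for _ in range(50)]
options_for_designer = shortlist(candidates, min_path=12)
```

The automated check only guarantees that a room is solvable and not trivially short. Pacing and the “fun factor” remain the designer's call when choosing from the shortlist.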

AI-powered game testing and QA: automated playtesting and bug detection

AI is transforming Quality Assurance through automated playtesting and bug detection. Reinforcement learning agents and scripted bots can traverse levels faster than humans to stress-test physics, collision, and geometry. Tools like those from modl.ai allow for parallel testing across many instances, while systems like Ubisoft’s “Commit Assistant” use machine learning to identify potential bugs in code as they are written. Despite these advancements, AI struggles with high-level logic, narrative triggers, and subjective issues like UI clarity. Consequently, the industry uses a hybrid model: AI manages repetitive, brute-force tasks and regression testing, while human testers focus on qualitative feedback and complex edge cases. This approach identifies issues earlier and allows for greater overall polish.
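To make the “brute-force” side of that hybrid model concrete, here is a toy sketch of a scripted bot hammering a movement rule with random inputs and recording the exact input sequence whenever an invariant breaks. Everything in it is hypothetical: the one-dimensional level, the `step` function, and its deliberately planted dash bug are invented for illustration and do not represent modl.ai's or Ubisoft's tooling.

```python
import random

LEVEL_WIDTH = 10                      # valid positions are 0..9 (hypothetical level)
MOVES = ["left", "right", "dash"]

def step(position, move):
    """Toy movement rule with a planted bug in the dash clamp."""
    if move == "left":
        return max(0, position - 1)
    if move == "right":
        return min(LEVEL_WIDTH - 1, position + 1)
    if move == "dash":
        target = position + 3
        # Planted bug: only the tile directly past the edge is clamped.
        return LEVEL_WIDTH - 1 if target == LEVEL_WIDTH else target
    raise ValueError(f"unknown move: {move}")

def run_bot(episodes, steps_per_episode, seed):
    """Play random inputs and collect reproducible out-of-bounds failures."""
    rng = random.Random(seed)
    failures = []
    for episode in range(episodes):
        position, inputs = 0, []
        for _ in range(steps_per_episode):
            move = rng.choice(MOVES)
            inputs.append(move)
            position = step(position, move)
            if not 0 <= position < LEVEL_WIDTH:       # invariant: stay inside the level
                failures.append({"episode": episode,
                                 "position": position,
                                 "inputs": list(inputs)})
                break
    return failures


failures = run_bot(episodes=200, steps_per_episode=20, seed=1)
```

Each failure carries the inputs that produced it, so a human tester can replay the sequence and confirm the bug. The bot has no opinion on whether the dash feels good to use, which is the part that stays with human QA.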

AI balance testing and economy tuning with simulated players

Game balancing and economy tuning are being revolutionized by AI agents that simulate millions of play sessions at superhuman speeds. These agents help designers identify overpowered strategies, underutilized items, and economic issues like currency inflation much faster than traditional manual testing. AI tools can treat balancing as an optimization problem, adjusting enemy stats or resource rates to meet specific design goals. Designers can employ different AI profiles—such as “optimized” or “casual”—to ensure the game remains balanced for various player types. However, human oversight is essential to ensure that balance changes feel “fair” and “fun.” AI identifies the data-driven problems, but humans make the final creative decisions on how to implement fixes without compromising the player experience.
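A stripped-down version of this idea is easy to prototype. The sketch below runs simulated duels between weapons and flags any whose win rate strays too far from 50%. All of it is assumption: the weapon stats, the alternating-attack combat model, and the 15-point tolerance are invented for illustration, and real tools use far richer agents than a coin flip.

```python
import random

# Hypothetical stats: (damage per hit, chance to hit). The hammer is overtuned on purpose.
WEAPONS = {
    "sword": (10, 0.80),
    "axe": (16, 0.50),
    "dagger": (6, 0.95),
    "hammer": (30, 0.60),
}

def duel(a, b, rng, health=100):
    """Two different weapons alternate attacks until one side drops; returns the winner."""
    hp = {a: health, b: health}
    attacker, defender = (a, b) if rng.random() < 0.5 else (b, a)
    while True:
        damage, hit_chance = WEAPONS[attacker]
        if rng.random() < hit_chance:
            hp[defender] -= damage
            if hp[defender] <= 0:
                return attacker
        attacker, defender = defender, attacker

def win_rates(matches_per_pair, seed):
    """Round-robin every weapon pair and return each weapon's overall win rate."""
    rng = random.Random(seed)
    names = sorted(WEAPONS)
    wins = {name: 0 for name in names}
    games = {name: 0 for name in names}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            for _ in range(matches_per_pair):
                wins[duel(a, b, rng)] += 1
                games[a] += 1
                games[b] += 1
    return {name: wins[name] / games[name] for name in names}

def flag_outliers(rates, tolerance=0.15):
    """Weapons whose win rate is more than `tolerance` away from an even 50%."""
    return {name: rate for name, rate in rates.items() if abs(rate - 0.5) > tolerance}


rates = win_rates(matches_per_pair=300, seed=42)
suspects = flag_outliers(rates)
```

The simulation can say that the hammer wins too often. Whether the fix is lower damage, a slower swing, or a stamina cost is a design decision the numbers cannot make.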

AI critique and feedback for game developers: prompts that produce useful notes

Developers are utilizing Large Language Models (LLMs) like ChatGPT as virtual design advisors to critique game premises, story synopses, and mechanics. By providing the AI with a pitch, designers can receive breakdowns of strengths and weaknesses, including suggestions to clarify hero growth or specify target audiences.

AI can also role-play as a playtester to predict potential player frustrations or rewards within a described combat system, or perform pseudo-UX reviews on tutorial text. Effective results require “prompt engineering,” where specific, open-ended questions lead to more pointed advice on elements like boss fight patterns. While AI draws on vast patterns of media critique, it lacks genuine emotional intuition and can sometimes hallucinate irrelevant concerns. Consequently, developers treat AI feedback as a secondary brainstorming tool, using human judgment to decide which logical insights to implement.
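One way to keep critique prompts specific is to build them from a template rather than typing them fresh each time. The helper below is a hypothetical sketch that only assembles text; `build_critique_prompt` and its wording are illustrative, and the resulting string would be pasted into or sent to whichever assistant the team uses.

```python
def build_critique_prompt(pitch, role, focus_questions, max_points=5):
    """Assemble a critique prompt with a role, a bounded format, and pointed questions."""
    if not focus_questions:
        raise ValueError("Ask at least one specific question; vague prompts get vague notes.")
    lines = [
        f"You are acting as {role}.",
        "Critique the game pitch below. Be specific and cite the part of the pitch you are reacting to.",
        f"List at most {max_points} strengths and {max_points} weaknesses, then answer each question.",
        "If the pitch does not contain enough information to answer, say so instead of guessing.",
        "",
        "PITCH:",
        pitch.strip(),
        "",
        "QUESTIONS:",
    ]
    lines += [f"{number}. {question}" for number, question in enumerate(focus_questions, start=1)]
    return "\n".join(lines)


prompt = build_critique_prompt(
    pitch="  A roguelike about a lighthouse keeper.  ",
    role="a skeptical playtester",
    focus_questions=[
        "Where would a new player get frustrated in the first ten minutes?",
        "Is the hero's growth clear?",
    ],
    max_points=3,
)
```

The instruction to admit missing information is there to reduce hallucinated concerns, though it will not eliminate them. The notes that come back are still brainstorming material for a human to filter.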

AI for analyzing player feedback: sentiment analysis, intent recognition, and trend detection

AI is revolutionizing the way developers process vast amounts of unstructured player feedback from reviews, social media, and support tickets. Specialized Natural Language Processing (NLP) models, such as those from PlayerXP and Affogata, can accurately interpret “gamer lingo” and sarcasm to perform sentiment analysis, identifying shifts in player morale in real time. Beyond sentiment, AI uses intent recognition and topic clustering to group feedback into specific categories, such as server lag or balance requests, allowing teams to prioritize issues quickly.

This speed enables developers to detect emerging trends and bugs before they escalate into major problems. Some studios even correlate these sentiment changes with live-ops events to quantitatively gauge player reception. While human community teams still provide nuance, AI acts as a vigilant analyst that distills the collective player voice into actionable insights, helping to protect a game’s reputation and inform development priorities.
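The shape of that pipeline (tag each comment with topics, score its sentiment, aggregate per topic) can be shown with a deliberately crude sketch. It uses keyword matching and a tiny word list instead of a trained NLP model, so it will miss sarcasm and slang that commercial tools claim to handle. The topic names, keyword sets, and sample reviews are all invented.

```python
import re
from collections import Counter, defaultdict

# Hypothetical keyword lists; a production system would use a trained model instead.
TOPIC_KEYWORDS = {
    "server": {"lag", "server", "servers", "disconnect", "ping"},
    "balance": {"nerf", "buff", "overpowered", "balance"},
    "crash": {"crash", "crashes", "crashed", "freeze"},
}
POSITIVE = {"love", "great", "fun", "awesome", "smooth"}
NEGATIVE = {"hate", "broken", "unplayable", "awful", "terrible", "worst"}

def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())

def analyze(reviews):
    """Return mention counts and average sentiment score per topic."""
    mentions = Counter()
    sentiment_totals = defaultdict(int)
    for text in reviews:
        words = set(tokenize(text))
        score = len(words & POSITIVE) - len(words & NEGATIVE)
        for topic, keywords in TOPIC_KEYWORDS.items():
            if words & keywords:
                mentions[topic] += 1
                sentiment_totals[topic] += score
    return {topic: {"mentions": mentions[topic],
                    "avg_sentiment": sentiment_totals[topic] / mentions[topic]}
            for topic in mentions}


reviews = [
    "Love the new map but the servers lag constantly, unplayable at night",
    "Hammer is overpowered, please nerf it. Balance is broken.",
    "Game crashes on startup since the patch. Terrible.",
    "Great update, combat feels fun and smooth",
    "Server disconnect every match, awful",
]
report = analyze(reviews)
```

Running the same aggregation on each day's feedback and comparing the numbers is the simplest form of trend detection: a topic whose mentions jump or whose average sentiment drops after a patch is worth a human look.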

How to build an AI-assisted feedback loop from playtests to patches

Closing the loop between playtesting and patching is more efficient when using AI to handle data. This process involves several key stages:

- Instrument & Gather Data: Games are instrumented to collect telemetry and player feedback. AI models, such as NLP, categorize large volumes of surveys and perform sentiment analysis to gauge satisfaction.

- Analyze & Identify Issues with AI: AI rapidly identifies patterns, such as difficulty spikes or bugs, by cross-referencing telemetry with player sentiment. Some studios use reinforcement learning agents to predict balance issues before a patch is released.

- Prioritize and Plan Fixes: Developers use AI-generated summaries and heatmaps of issues to objectively prioritize tasks, though humans still validate these findings for feasibility.

- Implement Patch & Validate with AI: After a patch is released, AI monitors telemetry and sentiment to confirm the fix worked and ensures no new issues were introduced.

- Continuous Loop: This cycle repeats with every update, allowing for faster iteration and more polished games.

An example of this is a mobile team using AI to parse app store reviews to identify and fix crashes within hours rather than days. Ultimately, AI allows for a real-time conversation between developers, player data, and game performance.
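The “analyze, prioritize, validate” stages above can be sketched as a small scoring routine. This is a hypothetical illustration: the `LevelReport` fields, the 60/40 weighting between failure rate and complaint share, and the 10-point improvement threshold are assumptions chosen for the example, not an industry formula.

```python
from dataclasses import dataclass

@dataclass
class LevelReport:
    level: str
    attempts: int            # from telemetry
    failures: int            # from telemetry
    negative_mentions: int   # from feedback analysis

def fail_rate(report):
    return report.failures / report.attempts if report.attempts else 0.0

def priority(report, total_negative):
    """Blend telemetry and sentiment into one score (weights are an assumption)."""
    complaint_share = report.negative_mentions / total_negative if total_negative else 0.0
    return round(0.6 * fail_rate(report) + 0.4 * complaint_share, 3)

def rank_issues(reports):
    """Highest-priority levels first, for a human to validate and schedule."""
    total_negative = sum(r.negative_mentions for r in reports)
    scored = [(priority(r, total_negative), r.level) for r in reports]
    return sorted(scored, reverse=True)

def patch_helped(before, after, min_drop=0.10):
    """After release: did the failure rate fall by at least min_drop?"""
    return fail_rate(before) - fail_rate(after) >= min_drop


playtest = [
    LevelReport("tutorial", attempts=1000, failures=50, negative_mentions=2),
    LevelReport("boss_2", attempts=800, failures=640, negative_mentions=30),
    LevelReport("swamp", attempts=500, failures=200, negative_mentions=8),
]
ranking = rank_issues(playtest)
after_patch = LevelReport("boss_2", attempts=900, failures=450, negative_mentions=5)
fixed = patch_helped(playtest[1], after_patch)
```

The ranking is a starting point for the planning meeting. Developers still decide whether a high failure rate on a boss is a bug or the intended difficulty.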

Steam AI Generated Content Disclosure requirements: pre-generated vs live-generated AI content

Platforms like Steam require developers to disclose the use of AI-generated material. Valve distinguishes between two specific types:

- Pre-generated AI content: These are static assets, such as art, textures, music, or dialogue, created using AI during development and included in the final game. Disclosure is required for any player-facing content, though internal uses like code optimization do not need to be tagged.

- Live-generated AI content: This refers to content created by AI in real time during gameplay, such as dynamic quests or NPC dialogue. This requires an extra level of disclosure to inform players that the content is dynamic.

These rules ensure transparency, help consumers make informed decisions, and address legal concerns regarding rights to AI-generated assets. While Valve’s initial stance was stricter, the current policy focuses on content consumed by players rather than the tools used for development brainstorming.

Live-generated content is particularly notable because it can result in unique experiences for each player or raise concerns regarding inappropriate output. On Steam, developers must check the appropriate boxes for pre-generated or live-generated AI and provide explanations. This transparency helps comply with platform requirements and builds trust with the audience.
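Teams that log their AI usage during development can sort those entries into the two categories before filling in the store form. The sketch below encodes only the distinction as this article describes it; the `AIUsage` record and category labels are hypothetical, it is not Valve's questionnaire, and the current Steamworks documentation should be treated as the authority.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AIUsage:
    description: str
    player_facing: bool          # does the output ship in content players see or hear?
    generated_at_runtime: bool   # is it produced while the game is running?

def disclosure_category(usage):
    """Map one logged usage to a category, following the article's reading of the rules."""
    if not usage.player_facing:
        return "internal (not disclosed)"
    if usage.generated_at_runtime:
        return "live-generated"
    return "pre-generated"

def disclosure_summary(usages):
    """Group usage descriptions by category to draft the store-page explanation."""
    summary = {"pre-generated": [], "live-generated": [], "internal (not disclosed)": []}
    for usage in usages:
        summary[disclosure_category(usage)].append(usage.description)
    return summary


usage_log = [
    AIUsage("code assistant used for build scripts", player_facing=False, generated_at_runtime=False),
    AIUsage("AI-assisted texture set on shipped props", player_facing=True, generated_at_runtime=False),
    AIUsage("LLM-driven tavern chatter", player_facing=True, generated_at_runtime=True),
]
summary = disclosure_summary(usage_log)
```

Keeping the log in a structured form like this also makes it easier to write the explanations the form asks for, since each entry already says what was generated and where it appears.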

How players react to AI-made game content: disclosure, backlash, and “AI-free” marketing

Player reactions to AI-created game content are mixed, with a significant vocal portion of the community remaining wary or negative. This has led to strong reactions and new marketing strategies. Initial concerns centered on whether AI would replace human artists or result in hollow storytelling. For instance, Larian Studios faced such intense backlash over experimenting with generative AI that the CEO had to clarify that no jobs were cut and AI was only used for minor, exploratory tasks.

Backlash has also manifested as review bombing or social media outcries. In 2025, the game Clair Obscur: Expedition 33 had an award rescinded because AI was used in early development, despite having no AI assets in the final game. Such incidents have sparked debates over whether games using AI for ideation should compete with fully human-made titles.

This climate has birthed “AI-free” marketing, where indie developers highlight that their games are 100% human-made to attract skeptical players. This approach markets artisanal effort and ethical sourcing, similar to “organic” food labels. While some players are neutral or positive if AI improves the game, hardcore enthusiasts often view AI as a cost-cutting measure leading to layoffs or “AI-generated slop.” This pejorative term describes low-quality, lazy content, and being associated with it poses a reputational risk to developers.

In 2026, transparency is a double-edged sword; it is appreciated but can trigger backlash. Developers must carefully communicate their use of AI, as an “AI-free” status currently serves as a major quality marker in the core gaming community.

Epic Games Store vs Steam on AI labels and disclosure: what developers should know

Steam and the Epic Games Store (EGS) have contrasting policies regarding AI content, which impacts how developers release their games.

- Steam: Requires developers to disclose AI-generated content experienced by players through an “AI Content” questionnaire. It distinguishes between pre-made and live-generated content and displays a notice on the store page. Internal tools for optimization or coding do not require disclosure. Honesty is mandatory, as failure to disclose can lead to the game being hidden or removed.

- Epic Games Store: Maintains a more laissez-faire approach. CEO Tim Sweeney argues that AI labels are unnecessary because AI will eventually be involved in most production. As of 2026, EGS does not require AI declarations or display AI-generated content tags.

Despite these differences, developers must ensure they have proper rights to all AI-created assets on both platforms to avoid IP violations and potential takedowns. Steam’s transparency helps players make informed decisions, whereas Epic’s stance avoids stigmatizing the technology. Developers must tailor their communication for each platform: fulfilling Steam’s formal requirements while deciding how to self-regulate and communicate their AI usage on Epic.

Human-in-the-loop workflow for AI in game development: approvals, style guides, and QA gates

The most effective way to integrate AI in game development is through a “human-in-the-loop” workflow, where human expertise guides and checks AI output at every stage to maintain creative control and quality.

- Establish Clear Guidelines: Before generation, teams set style guides and constraints (such as color palettes or tone guides) to ensure AI output matches the project’s vision.

- AI Generation with Oversight: Humans trigger the AI iteratively. For example, a designer may prompt an AI tool for map layouts but oversee the process to prevent the results from veering off-target.

- Review and Approval Checkpoints: No AI output enters the game without human review. Assets and code are treated as drafts to be checked by artists, programmers, and writers. This gate catches issues like artifacts, repetition, or bugs.

- Iteration with Human Feedback: Humans provide feedback to tune the AI tools. Developers accept or reject suggestions, which teaches the model to produce better results over time.

- Final QA Gate: AI content is subjected to standard QA testing. Narrative QA checks for continuity, while technical artists ensure assets do not cause performance issues.

This workflow leverages AI’s speed and volume while using human judgment to maintain artistic integrity. It prevents common pitfalls like incoherence or style drift, ensuring that the final product remains a human-crafted experience where the AI functions only as a sophisticated tool.
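The approval checkpoints above amount to a small state machine: an asset moves forward one gate at a time, only a named person can move it, and a rejection sends it back to draft. The sketch below is a minimal illustration with hypothetical stage names and a hypothetical `Asset` record; a real pipeline would hang this on its asset tracker or version control.

```python
from dataclasses import dataclass, field

# Hypothetical gate order: style guide check, lead approval, then standard QA.
STAGES = ["draft", "style_checked", "approved", "qa_passed"]

@dataclass
class Asset:
    name: str
    stage: str = "draft"
    history: list = field(default_factory=list)

def advance(asset, reviewer, passed, note=""):
    """Move one gate forward on a pass, or back to draft on a rejection."""
    if not reviewer:
        raise ValueError("Every gate needs a named human reviewer.")
    if asset.stage == STAGES[-1]:
        raise ValueError(f"{asset.name} has already cleared every gate.")
    next_stage = STAGES[STAGES.index(asset.stage) + 1] if passed else "draft"
    asset.history.append((asset.stage, next_stage, reviewer, note))
    asset.stage = next_stage
    return asset

def can_ship(asset):
    """Only assets that cleared the final QA gate may enter the build."""
    return asset.stage == STAGES[-1]


portrait = Asset("npc_portrait_014")
advance(portrait, "art lead", passed=True)
advance(portrait, "art lead", passed=False, note="hands look wrong")  # back to draft
advance(portrait, "art lead", passed=True)
advance(portrait, "art director", passed=True)
advance(portrait, "qa", passed=True)
```

The history list doubles as an audit trail, which is useful later when documenting AI usage for platform disclosure.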

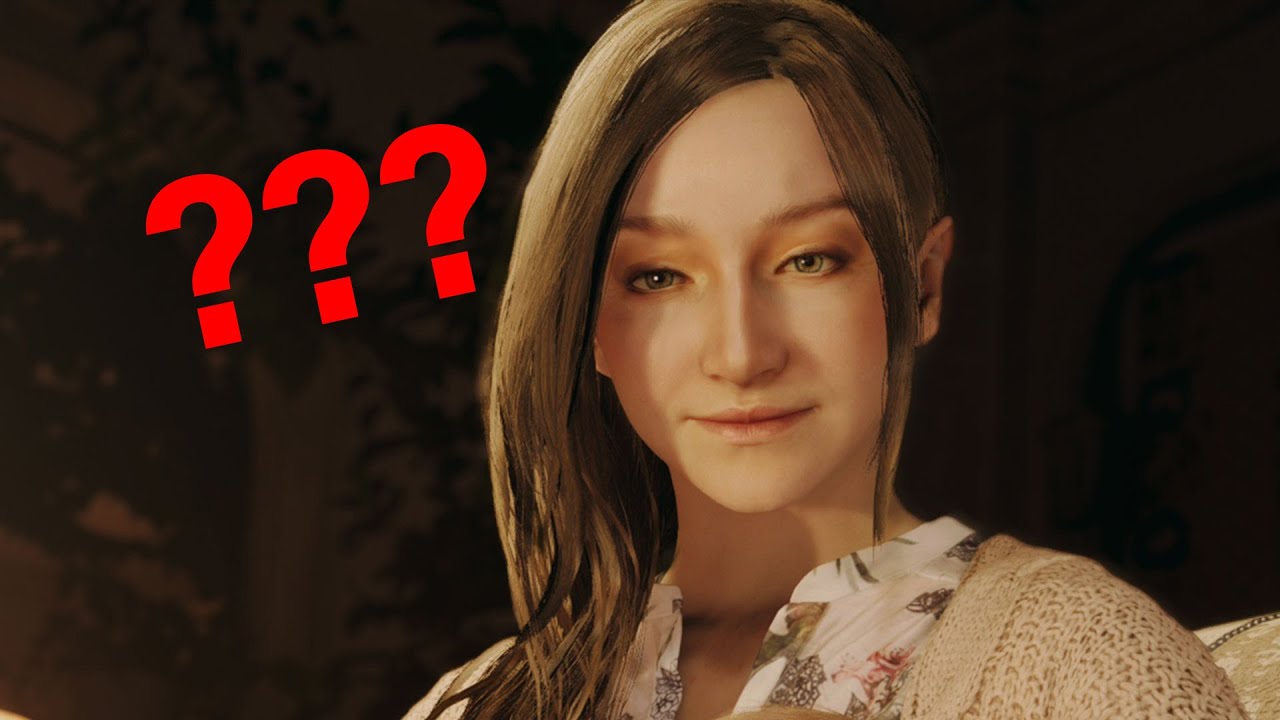

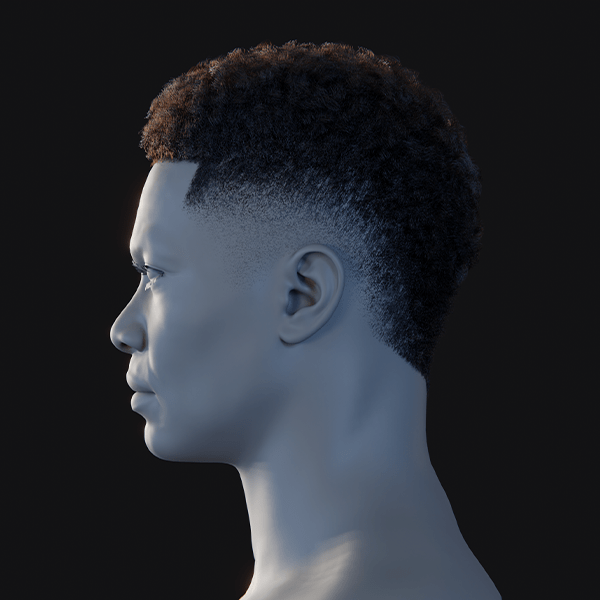

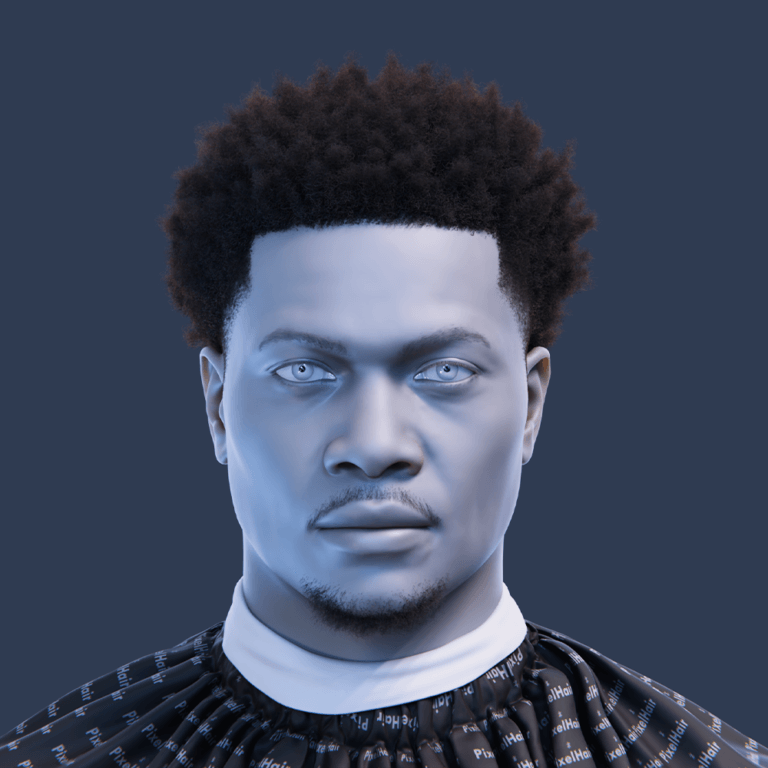

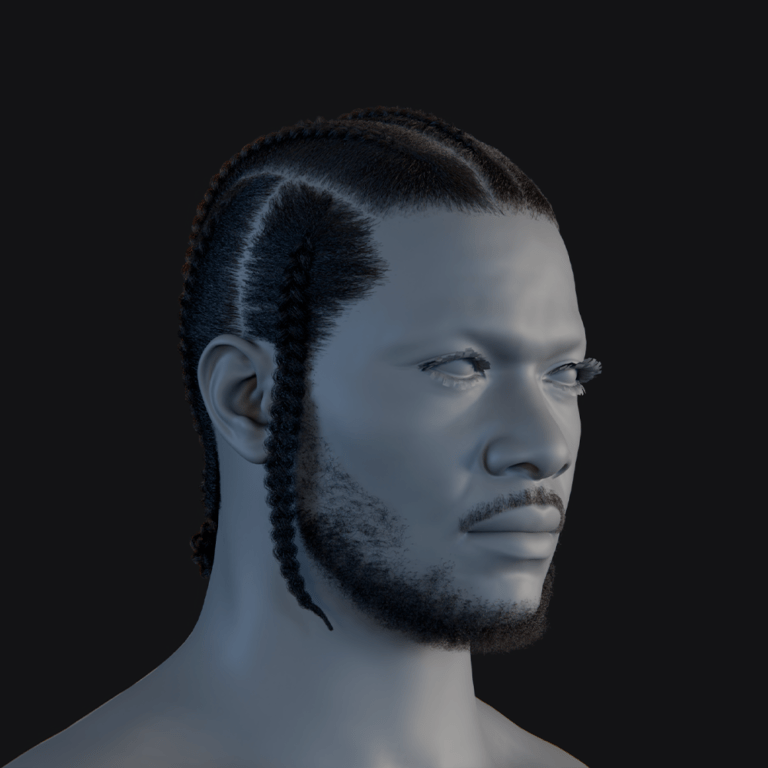

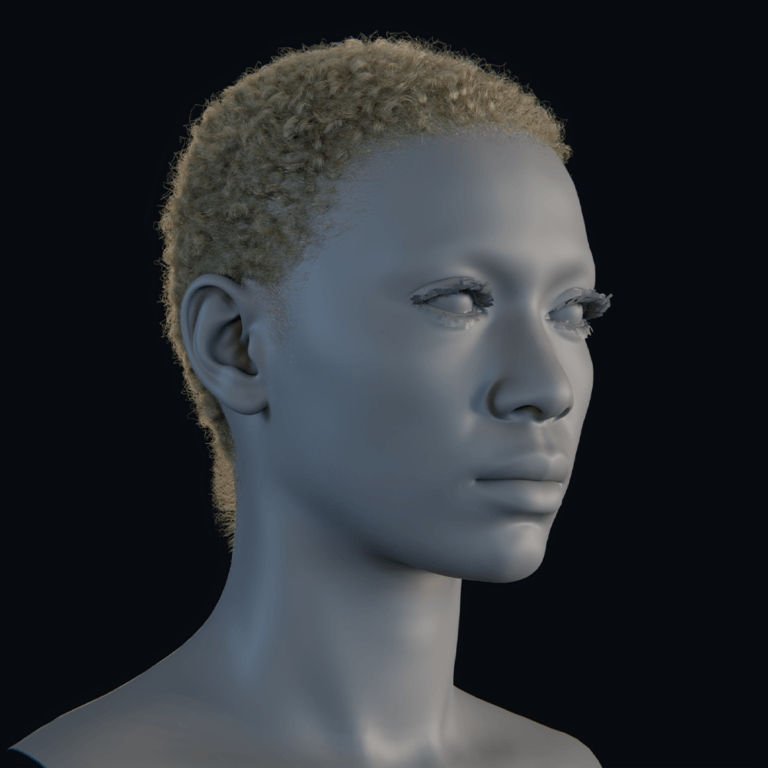

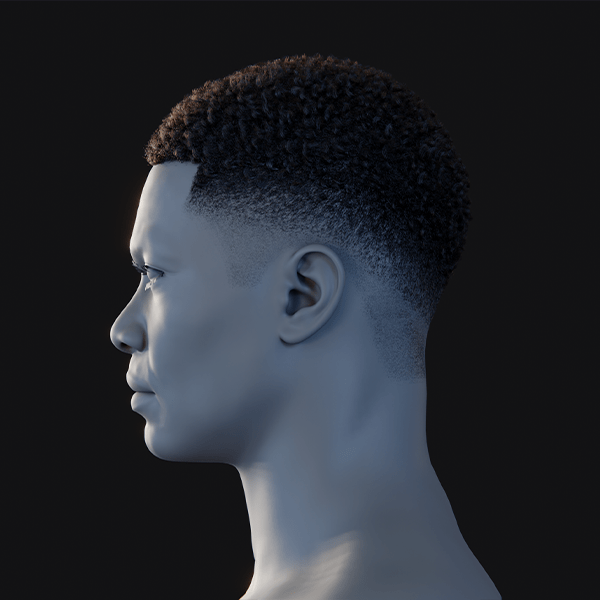

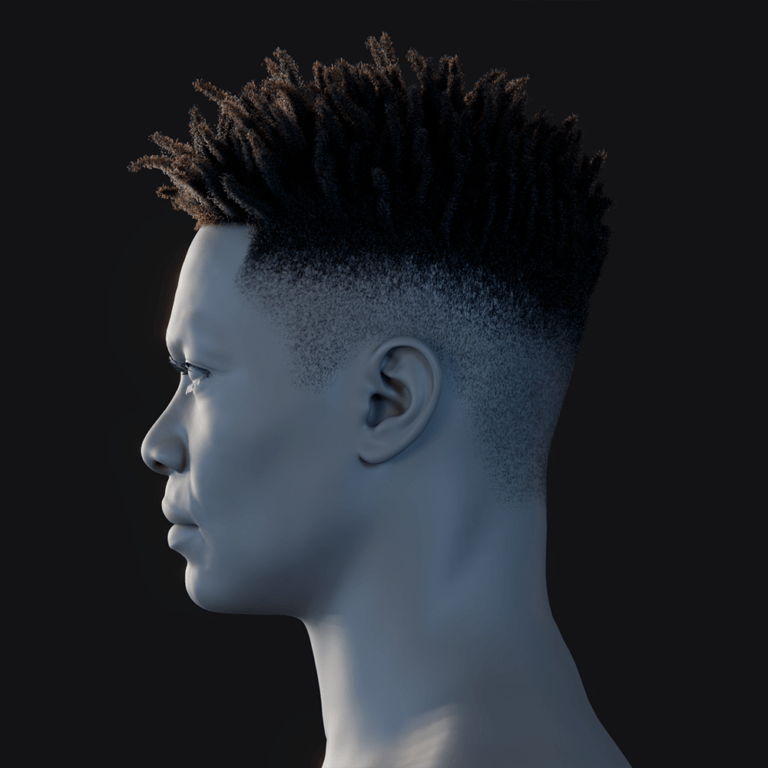

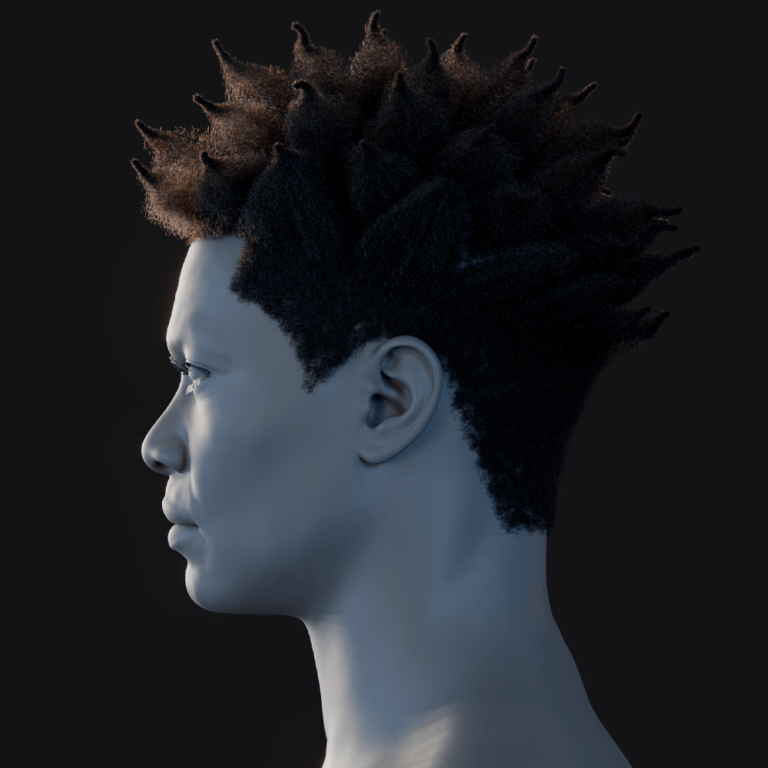

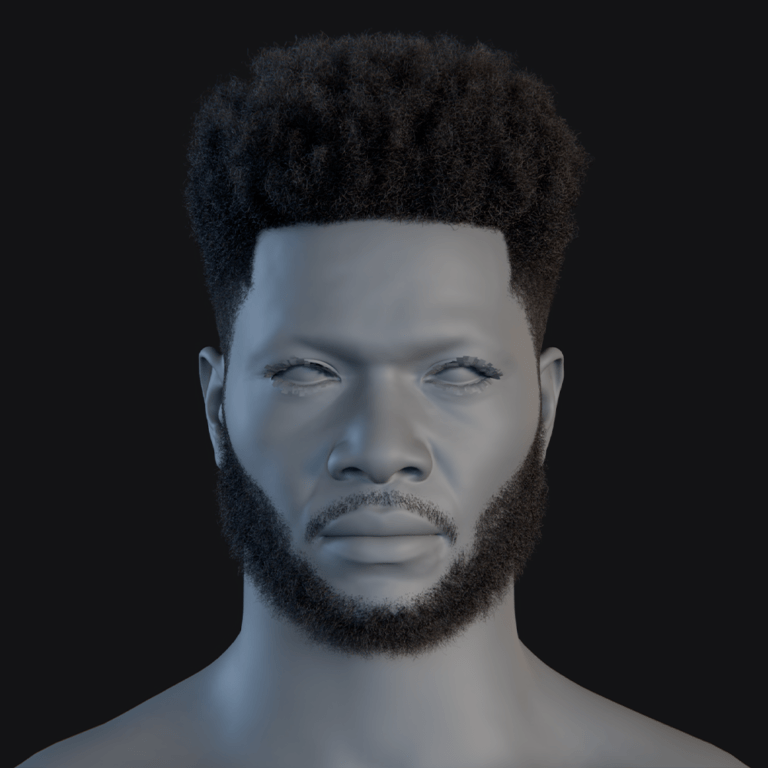

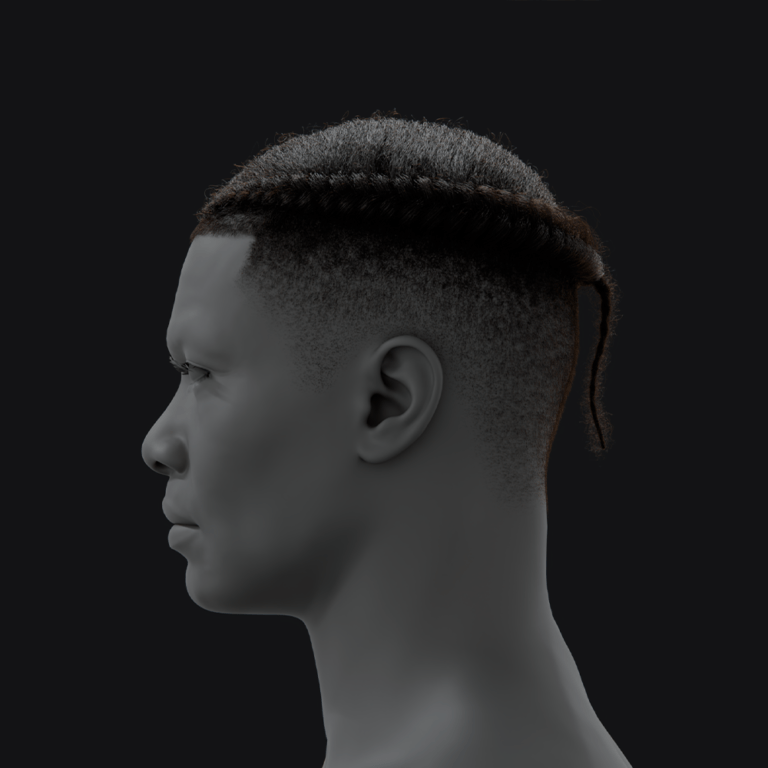

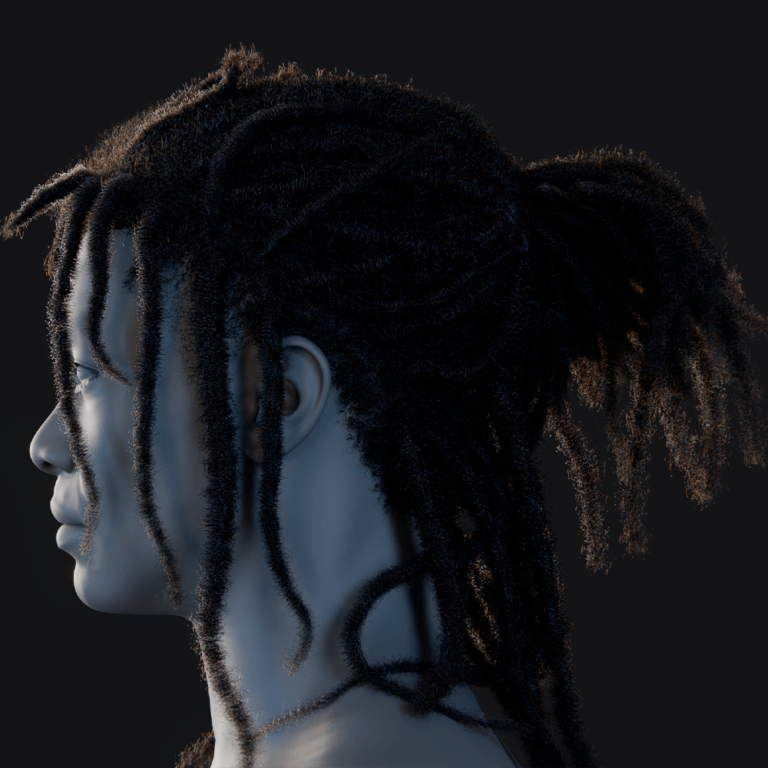

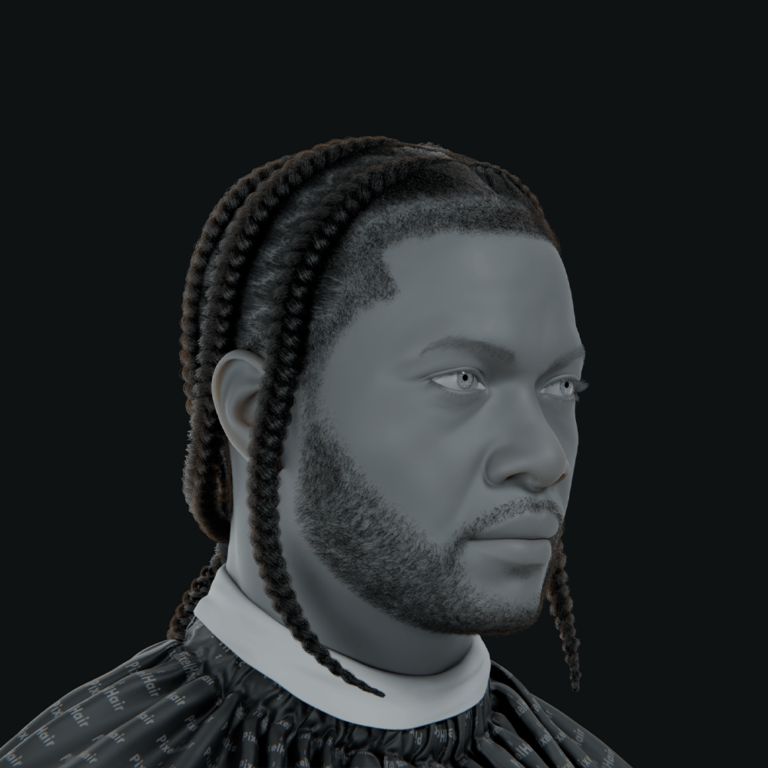

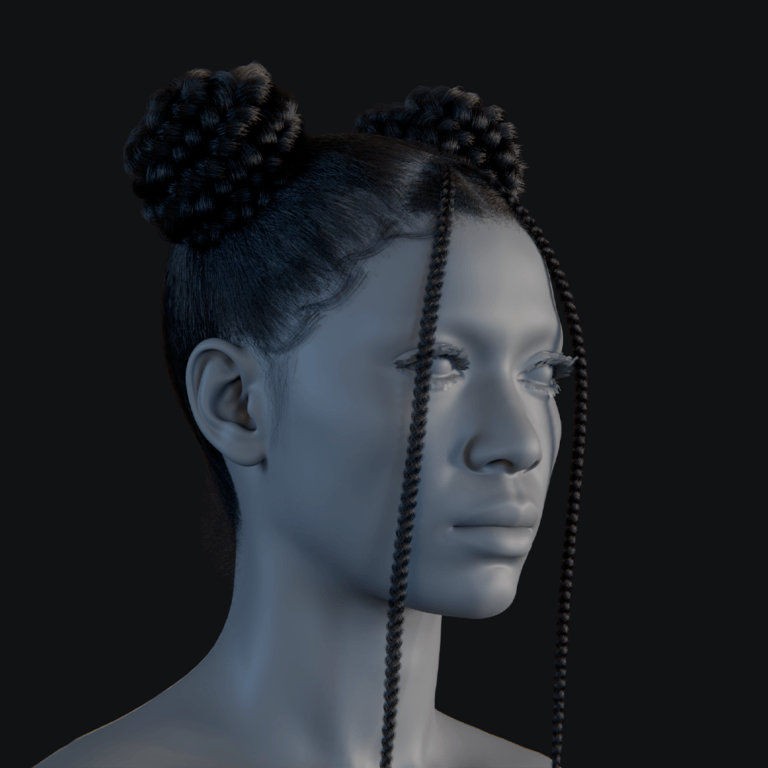

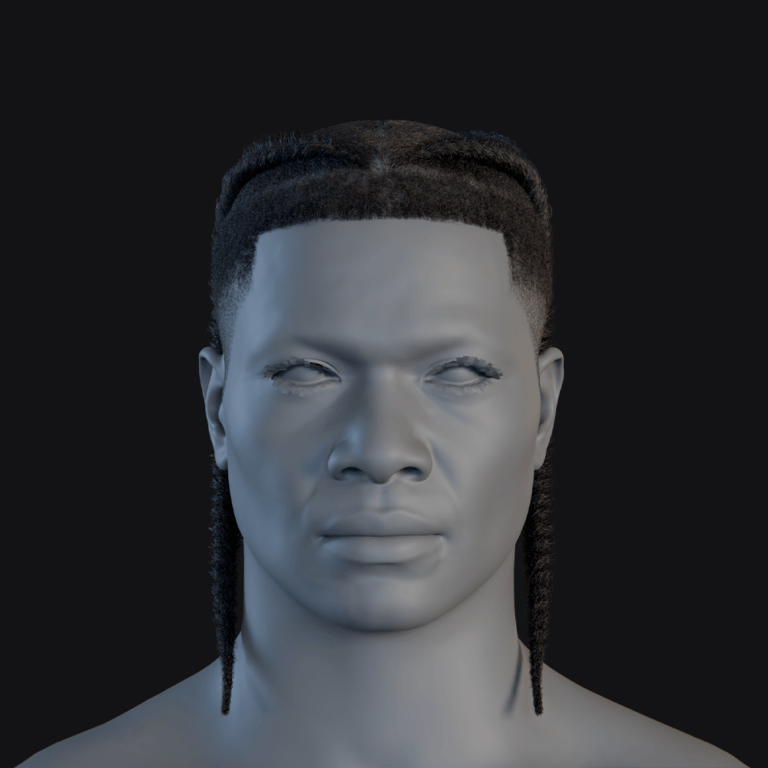

PixelHair Blender hair assets for game-ready characters: when non-AI pipelines win

PixelHair offers handcrafted 3D hair assets for Blender and Unreal Engine (MetaHuman) that provide significant time savings over traditional grooming, which can take days. While AI hair generation remains experimental and lacks the ability to produce simulation-ready 3D hair, PixelHair delivers production-ready assets with proper strand dynamics and materials.

These assets provide consistency and quality control since they are curated by a single developer (Yelzkizi), whereas AI outputs can be unpredictable. They are customizable, allowing artists to adjust density or materials. The collection is particularly useful for creating diverse and inclusive styles, such as 4C afros and intricate braids, which are difficult to create from scratch. PixelHair is also evolving to incorporate Blender’s Geometry Nodes, ensuring better physics and parametric adjustments. Unlike raw AI output, these assets include proper LODs and optimizations, offering predictability and professional finesse that current AI cannot replicate.

The View Keeper Blender camera management add-on: faster cinematic shots and trailer renders

The View Keeper is a Blender add-on that streamlines cinematic camerawork by providing a central interface to save and switch between multiple camera setups. It stores all parameters, including focal length, depth of field, and resolution, allowing animators to jump between shots with one click.

The tool supports batch rendering, enabling multiple camera views to be rendered sequentially. It ensures consistency by tying specific render settings to each saved view, reducing errors during the production of trailers or cutscenes. For game developers, it functions like a non-linear editor, making it easier to refine shots and organize workspaces without cluttering the scene with numerous camera objects. By automating repetitive tasks and allowing for professional multi-cam control, the add-on reduces technical friction and enables creators to focus on framing and motion.

Checklist for using AI in game development without losing creative control

To use AI responsibly while maintaining creative oversight, developers should follow these guidelines:

- Define the Creative Vision Upfront: Establish style guides and design pillars first so AI content can be measured against established standards.

- Choose the Right Tasks for AI: Use AI for assistive tasks like concept variants or code optimization, but keep human touch for storytelling and emotional nuance.

- Keep Humans in the Loop: Treat AI outputs as first drafts that must be reviewed, edited, or discarded by human professionals.

- Iterate with Prompts and Feedback: Refine prompts and provide feedback to steer the AI toward the desired quality and tone.

- Maintain Creative Oversight on Key Elements: Hand-craft core elements like main characters and story arcs to preserve the game’s identity.

- Set Ethical and Legal Boundaries: Use ethically sourced models to avoid licensing issues and support the creative community.

- Test AI Integrations Rigorously: Extensively playtest AI-driven elements to ensure they meet quality bars and do not break immersion or lore.

- Preserve Narrative and Artistic Coherence: Ensure all AI-assisted content is unified by a single editor or artist to maintain a consistent voice.

- Document AI Usage for Transparency: Keep logs of AI usage for internal tracking and to provide honest disclosure to players and platforms.

- Embrace Human Creativity as the Final Word: Treat AI as a tool, not a replacement; human judgment should always override AI suggestions to ensure the game remains fun and purposeful.

The goal is a game that is polished and rich, with AI’s fingerprints effectively invisible to the player and your creative vision fully intact.

Frequently Asked Questions (FAQs)

- Will AI replace game developers and lead to job losses in the industry?

AI automates repetitive tasks but is not a wholesale replacement for creative talent. While roles may evolve and some entry-level tasks may decrease, new roles are emerging. Most believe human creativity remains irreplaceable, and AI is best used to boost productivity rather than as a one-to-one substitute.

- Can using AI in development infringe on copyrights or intellectual property rights?

Yes, if the models are trained on copyrighted material or produce outputs too similar to existing works. Furthermore, pure AI-generated content is generally not copyrightable in the US. To mitigate risk, developers should use ethically trained models, modify outputs significantly, and document origins.

- Who owns the content created by AI tools?

This is a gray area. Pure AI outputs often lack human authorship and may be public domain. However, platforms often grant usage rights to users. Ownership is more secure when humans heavily curate or edit the output, and developers should clarify rights in contracts with freelancers.

- Is it ethical to use AI-generated art and writing in games?

Ethics are debated regarding training data and the potential devaluation of human labor. Using AI ethically requires transparency with players, using ethically sourced models, and ensuring it empowers teams rather than exploiting them or their IP.

- How do game studios ensure AI-generated content is free of bias or inappropriate material?

Studios use tool selection with safety layers, fine-tune models on curated data, and conduct rigorous human reviews. “Red team” exercises and diversity in the dev team help catch and plug holes in AI behavior to prevent reputational harm.

- Should developers disclose to players if a game uses AI-generated content?

Disclosure is increasingly expected and sometimes required, such as on Steam. While some worry about backlash, transparency builds trust. It is recommended to lean toward at least basic disclosure to avoid PR issues later.

- Do players actually care if part of a game was made with AI?

Reactions are mixed. Hardcore communities may be vocal against “AI slop,” associating it with laziness. However, a broader audience cares more about the final quality and fun factor. Players are generally more sensitive to AI in creative aspects like art and writing than in technical backend tasks.

- How can AI be used without sacrificing a game’s creativity or originality?

AI should be used as a creative amplifier, not an autopilot. Strategies include using AI for exploration while humans make final decisions, treating AI output as raw material to be refined, and maintaining hand-crafted anchors for the game’s identity.

- Will AI tools make it easier for indie developers to create games?

Yes, AI acts as a force multiplier and fills skill gaps in art, coding, and music. This democratizes development, though it also increases competition. Success for indies still depends on design sense and the ability to knit assets into a fun experience.

- Are there regulations or platform rules about using AI in games?

Yes. Steam requires disclosure, while others like Epic are more lenient. Governments are also acting; the EU AI Act introduces transparency requirements. Developers must also navigate data privacy laws (GDPR) and potential scrutiny from rating boards (ESRB/PEGI).

Conclusion

By 2026, AI’s role in game development is both transformative and nuanced. While it has enabled production wins and creative partnerships for teams of all sizes, it has also presented pitfalls regarding quality, trust, and legalities. The path forward is a human-centric approach where developers keep “humans in the loop” to maintain a strong creative vision.

Examples like Ubisoft’s Ghostwriter, PixelHair, and The View Keeper illustrate that specialized or traditional tools can sometimes outperform general AI. The industry is currently balancing innovation with transparency through evolving platform rules.

Ultimately, AI is a set of tools that should support, not dictate, the human vision. The studios that thrive will use AI to reach new creative heights while ensuring stories have heart and gameplay remains fun. Transparency and player trust are essential as AI becomes a standard part of the developer’s toolkit alongside engines and version control. Human ingenuity remains the driving force behind great games.

Sources and Citations


- Ubisoft News (Ubisoft La Forge / Ghostwriter) — The Convergence of AI and Creativity: Introducing Ghostwriter

- Ubisoft News (NEO NPC) — How Ubisoft’s New Generative AI Prototype Changes the Narrative for NPCs

- AI and Games Newsletter (Tommy Thompson) — 10 Predictions for AI in Games for 2026

- Digital Watch Observatory — New Steam rules redefine when AI use must be disclosed

- Odin Law and Media (Michele Robichaux) — The Game Developer’s Guide to AI Governance

- LinkedIn Pulse (Joe Stallings) — The Ethics of Using AI in Game Development: Art, Code, and Beyond

- Techdirt — Larian Studios The Latest To Face Backlash Over Use of AI To Make Games

- GamingBible (Sam Cawley) — Clair Obscur’s Gen AI Problem Could Revolutionise Gaming, Power to the Devs

- Tom’s Hardware (Bruno Ferreira) — Epic Games’ Tim Sweeney thinks game stores shouldn’t bother with “made with AI” labels…

- Yelzkizi Blog (PixelHair) — PixelHair: High Quality Realistic 3D Hair Assets In Blender

- Yelzkizi Blog (View Keeper) — The View Keeper: Best Blender Add-on For 3D Scene Management

- AWS for Games Blog — Using generative AI to analyze game reviews from players and press

- Machinations.io — AI Balancer

- Ubisoft News (Jacquier interview / AI for testing) — How Ubisoft is Using AI to Make Its Games, and the Real World, Better

- Reddit r/gamedev — AI can be a great tool to get feedback on your game pitch and how to tailor it towards a given publisher

- PlayerXP — PlayerXP (official site)

- Helpshift Blog — How to Use AI to Turn Player Feedback into Game Improvements

- 80.lv — How Ubisoft Uses AI & Machine Learning to Build Games

- modl.ai — Testing content-heavy games with AI bots

- IGDA — GDC 2025 (IGDA page)
