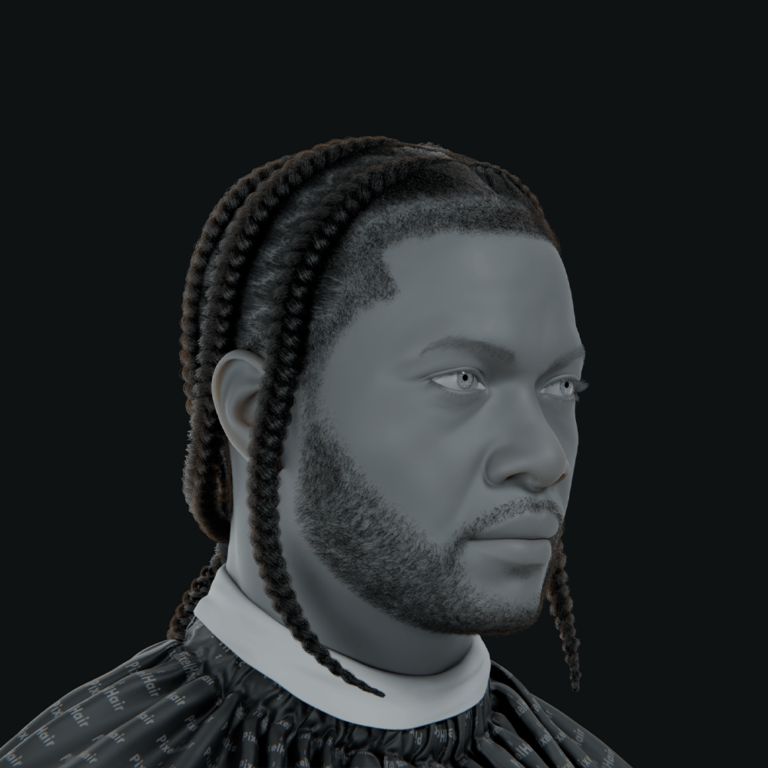

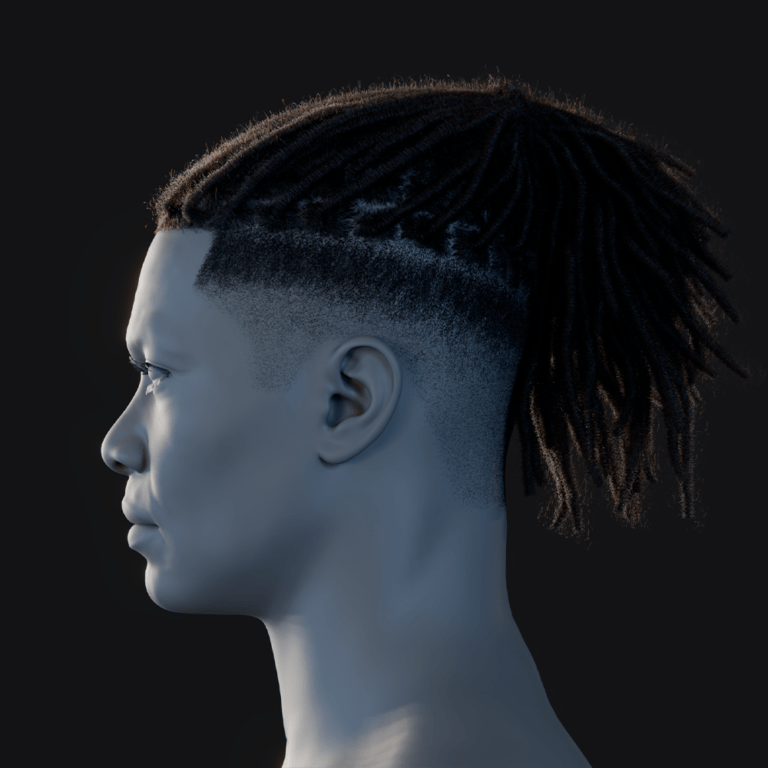

What is TANGLED? A framework for generating 3D hair strands from image input and text conditions

TANGLED is a deep learning framework that uses diffusion models to generate realistic 3D hair strands from images in arbitrary styles and viewpoints, such as photos, sketches, or artwork. It employs a three-step pipeline: the MultiHair dataset for training, a latent diffusion model conditioned on multi-view lineart, and a parametric post-processing module that enforces braid structure. The system turns 2D inputs into high-resolution 3D strand polylines compatible with CGI tools like Blender and Unreal Engine. Implemented in PyTorch, TANGLED is lightweight and produces complex hairstyles in minutes. It advances 3D hair modeling by enabling realistic, diverse outputs with minimal user effort.

This framework bridges user intent to detailed 3D hair models, making it valuable for animation and game development. Its diffusion-based approach ensures flexibility across various hair types and input conditions. By generating strand-level geometry, TANGLED integrates seamlessly into production pipelines. The system’s ability to handle single or multi-view inputs enhances its versatility. Overall, TANGLED represents a significant leap in automated hair generation for creative industries.

Understanding the MultiHair dataset: Limitations, diversity, and formatting for AI training

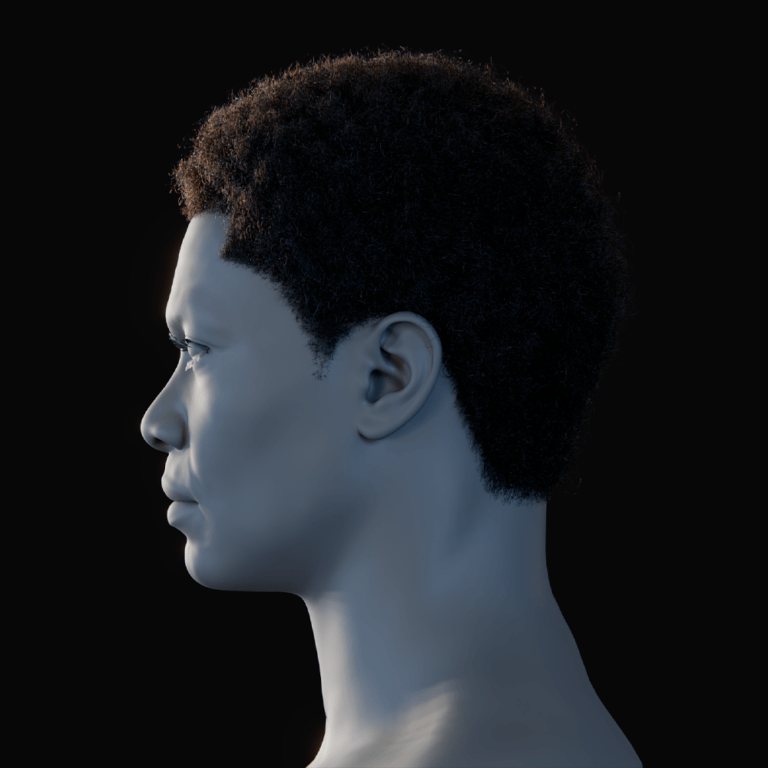

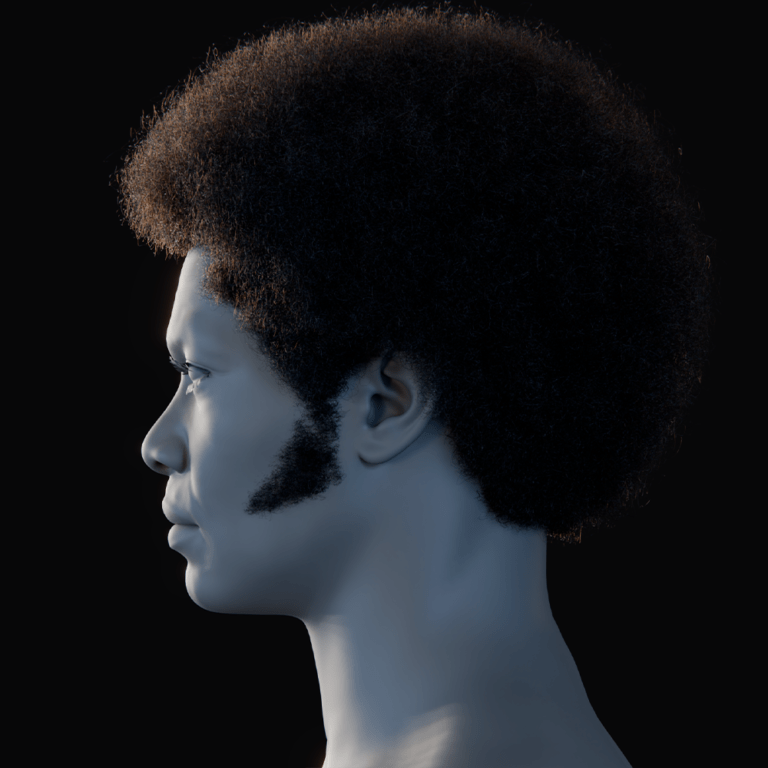

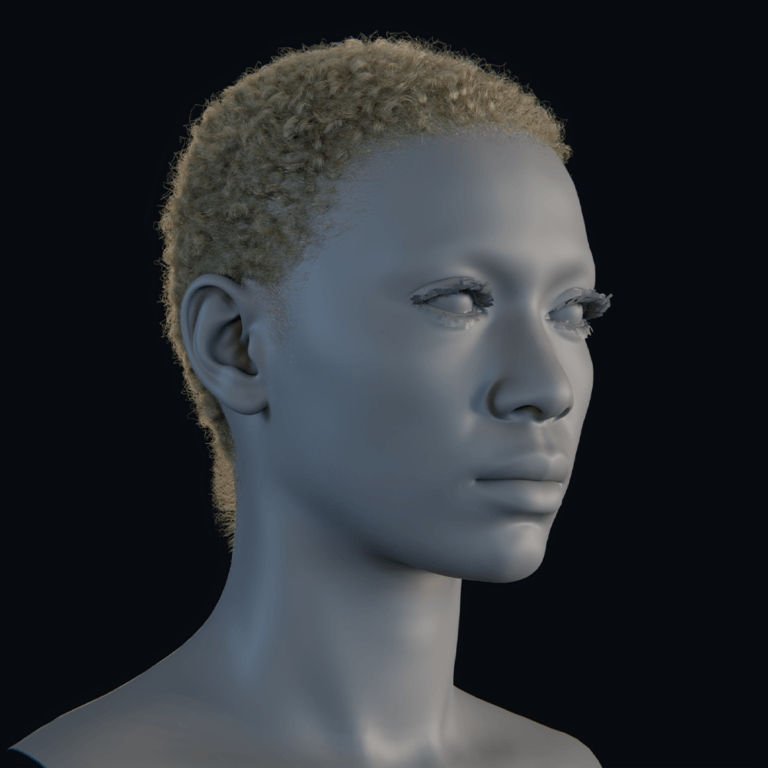

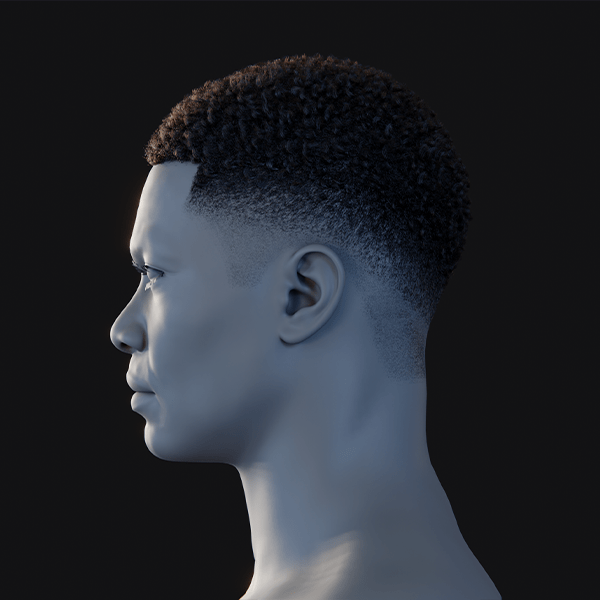

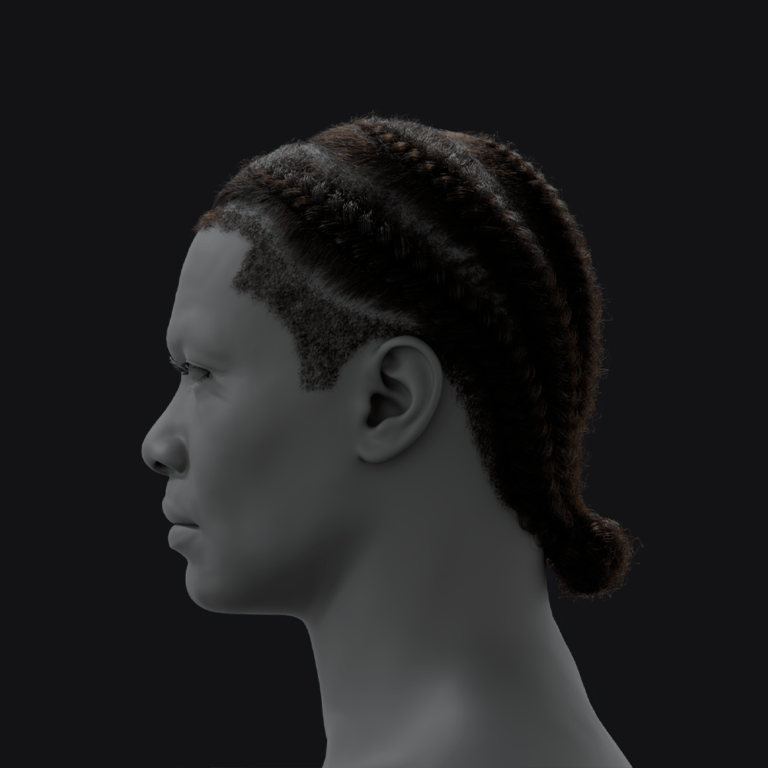

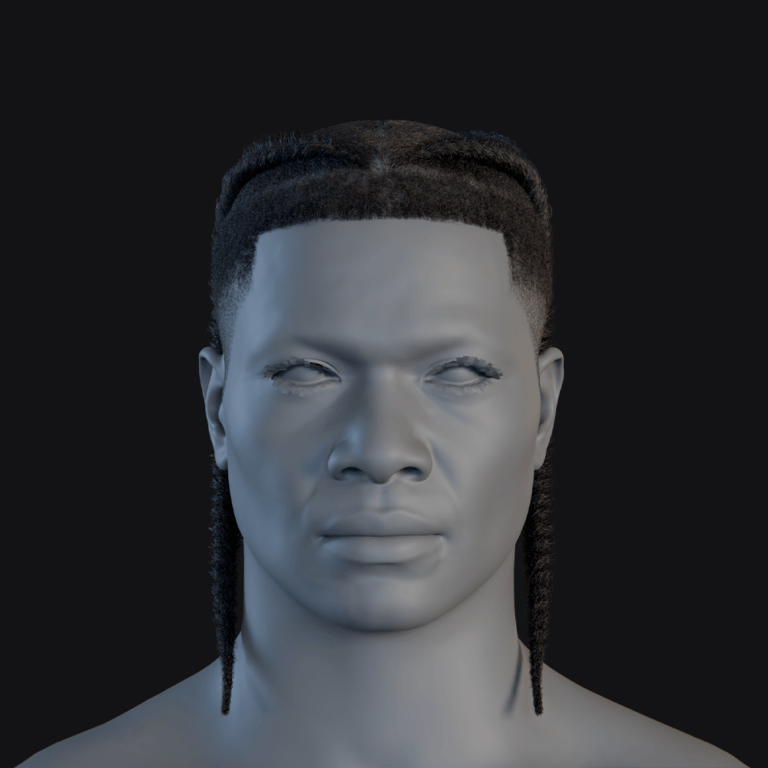

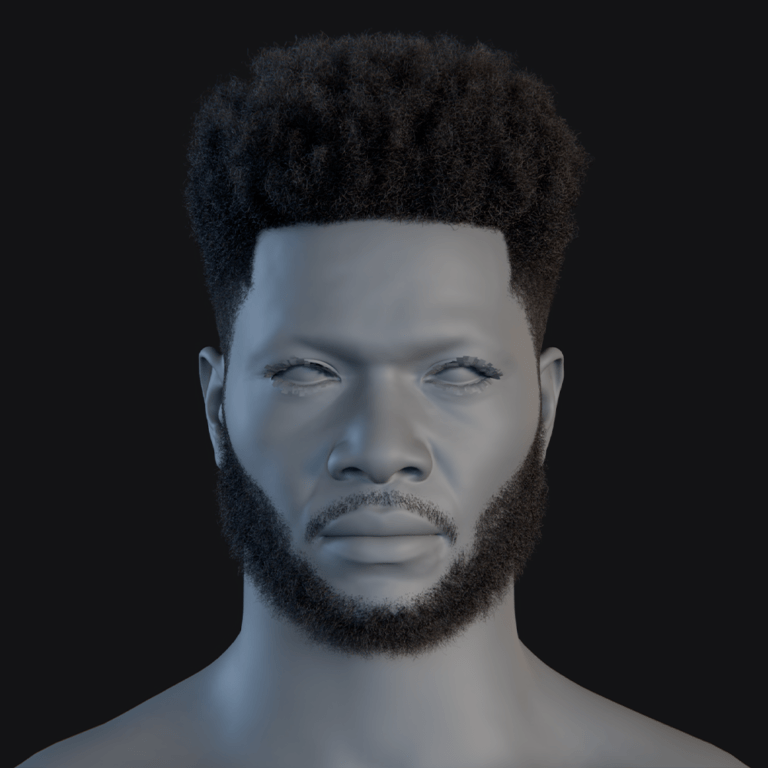

The MultiHair dataset, with 457 hairstyles annotated with 74 attributes, addresses diversity gaps in prior datasets by including varied shapes, textures, and cultural styles. Each hairstyle comes with 72 multi-view images and matching lineart, capturing its 3D geometry from many angles. Text annotations, generated by GPT-4, describe attributes like length and curliness, aiding semantic learning. The dataset emphasizes underrepresented styles like afros and braids, boosting diversity by ~30%. Its main limitation is the lack of ultra-high-frequency strand details due to resolution constraints.

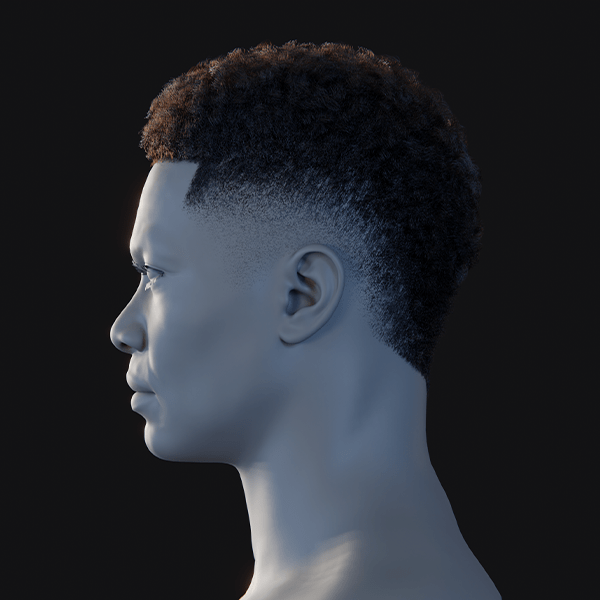

MultiHair’s multi-angle imagery, combining 8 viewpoints, 3 pitch angles, and 3 focal lengths (8 × 3 × 3 = 72 renders per hairstyle), provides robust training data for 3D hair generation. Lineart extracts structural cues, while synthetic stylized images improve robustness across visual domains. Text labels complement the images, enabling the model to learn visual-semantic correspondences. Despite these strengths, MultiHair may not capture the finest micro-details of dense hair. It remains a significant improvement over older datasets with limited styles or views.
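The 72 renders per hairstyle follow directly from crossing the three camera parameters. A minimal sketch of that enumeration is below; the article gives only the counts, so the yaw spacing, pitch angles, and focal lengths are placeholder assumptions, not MultiHair's actual values.

```python
from itertools import product

# Placeholder values: only the counts (8, 3, 3) come from the article.
YAW_DEGREES = [i * 45.0 for i in range(8)]   # 8 viewpoints, assumed evenly spaced
PITCH_DEGREES = [-15.0, 0.0, 15.0]           # 3 hypothetical pitch angles
FOCAL_LENGTHS_MM = [35.0, 50.0, 85.0]        # 3 hypothetical focal lengths


def camera_grid():
    """Return one camera setting per (yaw, pitch, focal length) combination."""
    return [
        {"yaw": yaw, "pitch": pitch, "focal_mm": focal}
        for yaw, pitch, focal in product(YAW_DEGREES, PITCH_DEGREES, FOCAL_LENGTHS_MM)
    ]


print(len(camera_grid()))  # 8 * 3 * 3 = 72
```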

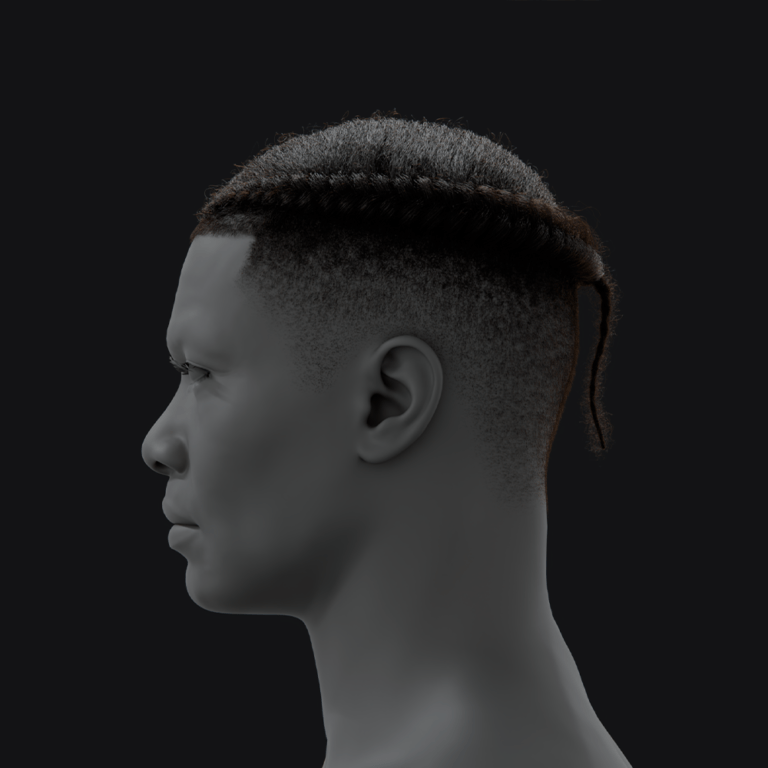

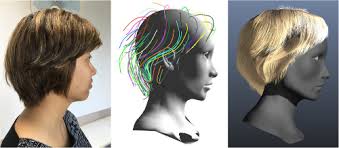

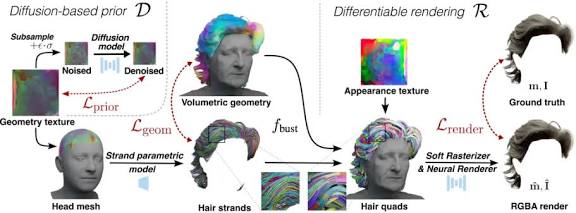

How TANGLED converts 2D pose-guided images into strand-level 3D geometry

TANGLED converts 2D images into 3D hair geometry by extracting pose-guided lineart and using a diffusion model to infer full strand fields:

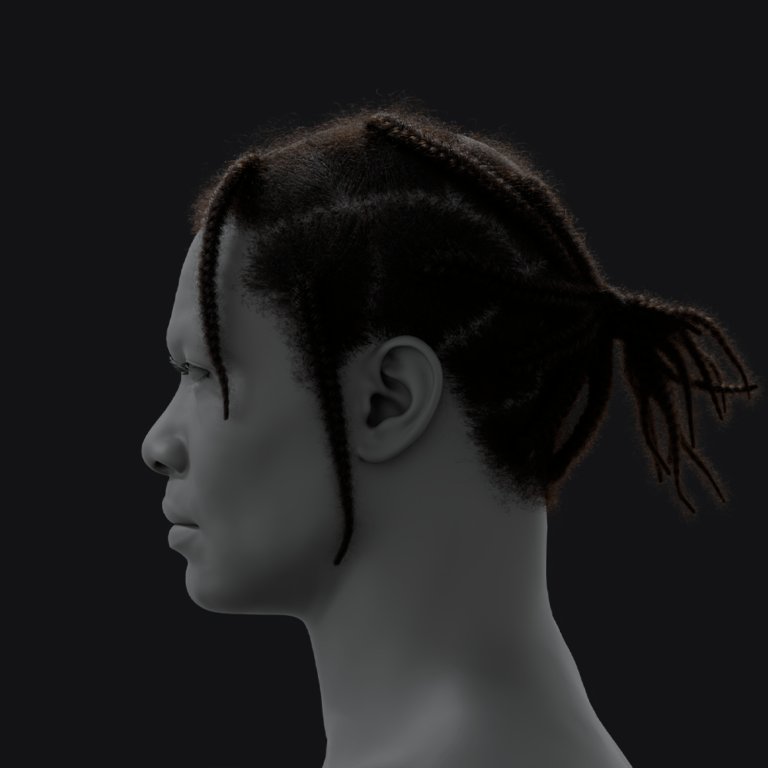

- Lineart Extraction: TANGLED processes input images to create lineart sketches, capturing hair structure without lighting or color distractions. Using tools like Grounding DINO and SAM, it segments hair and extracts contours, ensuring consistency across styles. This lineart guides the model to focus on the hair’s 2D pose and shape.

- 3D Inference via Diffusion: The diffusion model uses lineart to generate a 3D strand field, leveraging learned hair priors from MultiHair to reconstruct occluded regions. It creates a latent strand map encoding hair volume and orientation, ensuring coherence. The output is a set of 3D strand polylines matching the input’s style.

- Handling Occlusions: For partially visible hair, TANGLED relies on statistical priors to infer hidden regions, improving with multiple input views. The model resolves ambiguities by predicting plausible 3D structures based on training data. Single-view inputs may lead to less accurate reconstructions of unseen areas.

The pipeline ensures that the generated 3D hair matches the input’s structure and extends it volumetrically. This approach enables robust, view-consistent hair generation from minimal inputs.
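To make the lineart step concrete, here is a deliberately crude stand-in in plain Python: it keeps gradient-magnitude edges only inside a hair mask, such as one produced by a Grounding DINO + SAM segmentation. This is an assumption for illustration. TANGLED's actual lineart extractor is not reproduced here, and the threshold value is arbitrary.

```python
def lineart_from_mask(gray, mask, threshold=0.2):
    """Crude lineart stand-in: gradient-magnitude edges kept only inside the hair mask.

    gray: 2D list of floats in [0, 1]; mask: 2D list of 0/1 (e.g. from a segmenter).
    Returns a 2D list of 0/1 where 1 marks a line pixel. Border pixels are left at 0.
    """
    h, w = len(gray), len(gray[0])
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            if not mask[y][x]:
                continue  # ignore background, face, clothing
            # Central differences approximate the image gradient.
            gx = (gray[y][x + 1] - gray[y][x - 1]) / 2.0
            gy = (gray[y + 1][x] - gray[y - 1][x]) / 2.0
            if (gx * gx + gy * gy) ** 0.5 > threshold:
                out[y][x] = 1
    return out


# Synthetic check: a bright 3x3 square on a dark 7x7 background, all pixels marked as hair.
gray = [[1.0 if 2 <= y <= 4 and 2 <= x <= 4 else 0.0 for x in range(7)] for y in range(7)]
mask = [[1] * 7 for _ in range(7)]
for row in lineart_from_mask(gray, mask):
    print("".join("#" if v else "." for v in row))
```

Only the square's outline survives; its flat interior produces no lines, which is the property that lets lineart ignore color and shading.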

Using Stable Diffusion in TANGLED for view-consistent 3D hair shape generation

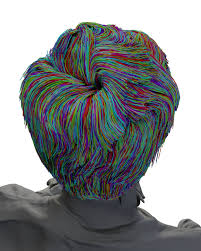

TANGLED adapts Stable Diffusion’s latent diffusion model to generate view-consistent 3D hair by operating on a latent hair map conditioned on lineart. It uses a 2D U-Net with cross-attention to iteratively denoise the latent map, ensuring high-fidelity results. Random multi-view conditioning during training teaches the model to produce hair consistent across angles, using 1 to 8 viewpoint linearts. This approach, combined with DDIM sampling (50 steps), ensures efficient, high-quality generation. Even single-view inputs yield coherent 3D hair due to learned multi-view priors.

The model’s training on varied viewpoints reduces view-dependent artifacts, ensuring the hair looks correct from all angles. TANGLED’s diffusion-based approach achieves superior geometric consistency and detail compared to prior methods.
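Random multi-view conditioning can be sketched as drawing a random number of views (1 to 8) from a hairstyle's 72 renders at every training step. The uniform choice of view count below is an assumption; the article does not say how TANGLED weights different view counts.

```python
import random

NUM_RENDERS = 72      # renders per hairstyle in MultiHair
MAX_COND_VIEWS = 8    # TANGLED conditions on 1 to 8 lineart views


def sample_conditioning_views(rng, num_renders=NUM_RENDERS, max_views=MAX_COND_VIEWS):
    """Choose a random view count in [1, max_views], then that many distinct render indices."""
    k = rng.randint(1, max_views)  # randint is inclusive on both ends
    return sorted(rng.sample(range(num_renders), k))


rng = random.Random(0)  # seeded for reproducibility
for _ in range(3):
    print(sample_conditioning_views(rng))
```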

Conditioning strategies in TANGLED: text prompts, pose data, and multi-angle image supervision

TANGLED employs multiple conditioning strategies to guide its diffusion model:

- Image-based Lineart Conditioning: Lineart from input images provides precise spatial guidance for hair structure, ensuring accurate 3D shape generation. It outperforms text-only methods by capturing detailed geometry directly from visuals. DINOv2 extracts robust features, enabling consistency across art styles.

- Multi-angle Supervision: Training with multiple viewpoint linearts ensures view-consistent hair generation, reconciling different perspectives. This reduces artifacts in unseen angles, enhancing 3D coherence. The model handles 1 to 8 views, improving accuracy with more inputs.

- Text Annotations: Text labels from MultiHair supplement semantic understanding, linking visual features to attributes like “curly” or “braided.” Though secondary at inference, they guide training to align outputs with style descriptions. Classifier-free guidance adds robustness: the condition is randomly dropped during training so that one network learns both conditional and unconditional predictions, and the two are blended at sampling time.

These strategies enable TANGLED to produce detailed, controllable 3D hair from intuitive inputs. The combination of image, multi-view, and text conditioning ensures both geometric and semantic accuracy.
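Classifier-free guidance has two halves: dropping the condition at random during training, and blending conditional and unconditional noise predictions at sampling time. A minimal sketch follows; the 10% drop rate and the guidance scale in the example are common illustrative values, not settings taken from TANGLED.

```python
import random


def drop_condition(cond, rng, p_uncond=0.1):
    """Training: with probability p_uncond, replace the condition with None
    so the same network also learns an unconditional prediction."""
    return None if rng.random() < p_uncond else cond


def guided_noise(eps_uncond, eps_cond, scale):
    """Sampling: eps = eps_uncond + scale * (eps_cond - eps_uncond), element-wise."""
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


print(guided_noise([0.0, 1.0], [1.0, 1.0], scale=3.0))  # [3.0, 1.0]
```

A scale of 1 reproduces the purely conditional prediction, 0 the unconditional one, and values above 1 push samples harder toward the lineart condition.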

Comparing TANGLED’s guidance conditioning to latent diffusion methods

TANGLED’s lineart-guided approach outperforms text-guided methods like HAAR, which relies on ambiguous text prompts often misaligned with user intent. HAAR’s need for text conversion from images introduces errors, whereas TANGLED’s direct visual conditioning preserves details like bangs and part lines. Multi-view training makes TANGLED’s outputs view-consistent, whereas HAAR, conditioned only on text, has no mechanism for matching specific input views. TANGLED achieves higher point-cloud IoU, lower Chamfer distance, and better CLIP scores, with 84.3% user preference in studies. Compared to optimization-based HairStep, TANGLED better handles varied viewpoints, producing coherent 3D geometry.

TANGLED’s visual conditioning and multi-view priors result in more accurate, realistic hair generation. Its approach bridges controllability and fidelity, making it ideal for developers needing precise 3D hair assets.

The role of text annotations in guiding hairstyle semantics across viewpoints

Text annotations in MultiHair provide consistent semantic labels across multiple views of a hairstyle, linking images to attributes like “curly ponytail.” They guide the model to recognize the same style across angles, enhancing latent encoding. CLIP scores then measure how well outputs match the described styles. Text reinforces subtle distinctions like curl patterns or braid types, complementing visual data. While not primary at inference, annotations enable potential text-guided tweaks, improving TANGLED’s semantic accuracy across diverse hairstyles.

Text annotations ensure TANGLED captures hairstyle identity across viewpoints. They support quantitative evaluation and enhance the model’s understanding of complex hair attributes.

DINOv2 as the backbone feature extractor in TANGLED: Why vision transformers work better for hair

DINOv2, a Vision Transformer (ViT), extracts robust features from lineart, capturing both the global silhouette and the local structure of hair. Its self-supervised training on 142 million images yields generalizable embeddings that stay consistent on sparse sketches and stylized inputs. Whereas a CNN's receptive field grows only gradually with depth, ViT self-attention relates distant image patches directly in every layer, which suits hair’s long, complex curves. DINOv2’s features enter TANGLED’s diffusion U-Net through cross-attention, guiding accurate hair generation. Because these features work across domains without fine-tuning, no custom hair-specific feature extractor has to be trained.

DINOv2’s transformer architecture excels at encoding hair outlines and details, ensuring stable, domain-invariant features. This makes it a critical component for TANGLED’s high-fidelity hair synthesis.
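The cross-attention step can be sketched in plain Python: each latent-map token (query) takes a softmax-weighted average of the conditioning tokens (values), weighted by query-key similarity. For multi-view input, the per-view token lists are simply concatenated first. Learned query/key/value projections and multiple heads are omitted, and the function names are illustrative rather than TANGLED's code.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product cross-attention.

    queries: latent-map tokens (n_q lists of length d)
    keys, values: conditioning tokens (n_k lists of length d, and of length d_v)
    """
    d = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))])
    return out


def concat_view_tokens(per_view_tokens):
    """Multi-view input: join every view's token list into one key/value sequence."""
    return [tok for view in per_view_tokens for tok in view]
```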

Constructing hair latent maps from visual embeddings: TANGLED’s volumetric synthesis approach

TANGLED uses a Variational Autoencoder (VAE) to create a 2D latent hair map encoding 3D strand geometry via scalp UV mapping. The VAE compresses strand polylines into a latent map, with a decoder reconstructing accurate 3D curves. Diffusion generates this map, guided by DINOv2 features, enabling volumetric hair synthesis. Loss terms ensure fidelity in strand positions, orientations, and curvature. This approach reduces 3D generation to a 2D problem, leveraging diffusion’s strengths for high-resolution output.

The latent map encodes hair volume and direction, ensuring coherent strand reconstruction. This method enables TANGLED to efficiently produce detailed, production-ready hair geometry.
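A UV-parameterized latent map works because every texel corresponds to a root position on the scalp. The sketch below assumes an idealized hemispherical scalp and a strand stored as per-segment offsets from its root; both are simplifying assumptions, and the step that decodes a latent texel into those offsets (the VAE decoder) is not shown.

```python
import math

SCALP_RADIUS = 0.1  # metres; hypothetical hemispherical scalp


def uv_to_scalp(u, v, radius=SCALP_RADIUS):
    """Map UV in [0, 1]^2 to a root point on a hemisphere (y is up).

    u sweeps the azimuth around the head; v runs from the crown (v = 0)
    down to the equator of the hemisphere (v = 1)."""
    azimuth = 2.0 * math.pi * u
    polar = 0.5 * math.pi * v
    return (
        radius * math.sin(polar) * math.cos(azimuth),
        radius * math.cos(polar),
        radius * math.sin(polar) * math.sin(azimuth),
    )


def strand_from_offsets(root, offsets):
    """Accumulate per-segment 3D offsets from the root into a polyline."""
    points = [root]
    x, y, z = root
    for dx, dy, dz in offsets:
        x, y, z = x + dx, y + dy, z + dz
        points.append((x, y, z))
    return points


# A 4-segment strand hanging straight down from a root halfway down the scalp.
root = uv_to_scalp(0.25, 0.5)
strand = strand_from_offsets(root, [(0.0, -0.02, 0.0)] * 4)
print(len(strand))  # 5 points: the root plus one per segment
```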

Training deep neural networks for hair strand inference: architecture of the decoder pipeline

TANGLED’s decoder pipeline transforms latent hair maps into 3D strand polylines using a VAE-trained decoder and diffusion U-Net. The VAE encoder compresses strands into a latent map, optimized with losses for position, orientation, and curvature. The U-Net, guided by DINOv2 features via cross-attention, denoises the latent map over 50 DDIM steps. The decoder outputs strand curves anchored to the scalp, ensuring physical coherence. The lightweight pipeline runs efficiently on a single GPU, producing high-fidelity hair.

Training involves staged optimization of the VAE and diffusion model, ensuring accurate strand reconstruction. The architecture balances geometric detail with computational efficiency, ideal for practical applications.
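The position, orientation, and curvature terms can be written as a small reconstruction loss over corresponding strand points. This is a sketch under assumptions: the squared-error form, the discrete curvature proxy (change between consecutive unit tangents), and the weights are illustrative choices, not the paper's exact formulation.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _norm(a):
    return math.sqrt(sum(x * x for x in a))


def _mean(xs):
    return sum(xs) / len(xs) if xs else 0.0


def tangents(strand):
    """Unit direction of every segment of a polyline."""
    result = []
    for p, q in zip(strand, strand[1:]):
        d = _sub(q, p)
        n = _norm(d) or 1.0  # guard against zero-length segments
        result.append(tuple(c / n for c in d))
    return result


def curvatures(strand):
    """Discrete curvature proxy: magnitude of change between consecutive unit tangents."""
    t = tangents(strand)
    return [_norm(_sub(b, a)) for a, b in zip(t, t[1:])]


def strand_loss(pred, target, w_pos=1.0, w_dir=1.0, w_curv=0.1):
    """Weighted position + orientation + curvature error for one pair of
    corresponding strands (same number of points). Weights are hypothetical."""
    pos = _mean([_norm(_sub(p, q)) ** 2 for p, q in zip(pred, target)])
    ori = _mean([_norm(_sub(a, b)) ** 2 for a, b in zip(tangents(pred), tangents(target))])
    curv = _mean([(a - b) ** 2 for a, b in zip(curvatures(pred), curvatures(target))])
    return w_pos * pos + w_dir * ori + w_curv * curv
```

A pure translation only raises the position term, while a bend changes the orientation and curvature terms too, so each term penalizes a different kind of error.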

DDIM sampling in TANGLED: Efficient denoising for high-fidelity strand field generation

TANGLED uses DDIM sampling with 50 steps to efficiently denoise latent hair maps, producing high-fidelity strand fields. DDIM’s deterministic path ensures reproducibility and reduces drift, maintaining conditioning accuracy. Combined with classifier-free guidance, it strengthens adherence to lineart inputs, yielding detailed hair geometry. The low step count keeps generation fast without sacrificing quality. DDIM’s efficiency makes TANGLED practical for interactive content creation.

This sampling method streamlines the diffusion process, ensuring consistent, high-quality hair outputs. It supports TANGLED’s integration into fast-turnaround production pipelines.
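A deterministic DDIM sampler (η = 0) fits in a few lines. The sketch assumes a linear β schedule over 1,000 training steps, a common DDPM default, and visits 50 evenly spaced timesteps; `predict_eps` stands in for the conditioned U-Net, with classifier-free guidance applied inside it. TANGLED's actual schedule may differ.

```python
import math


def make_alpha_bars(num_train_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule."""
    alpha_bars, prod = [], 1.0
    for i in range(num_train_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_train_steps - 1)
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return alpha_bars


def ddim_timesteps(num_train_steps=1000, num_inference_steps=50):
    """Evenly spaced training timesteps, ordered from noisiest to cleanest."""
    stride = num_train_steps // num_inference_steps
    return list(range(num_train_steps - 1, -1, -stride))[:num_inference_steps]


def ddim_step(x_t, eps, a_t, a_prev):
    """Deterministic DDIM update (eta = 0): estimate x_0, then re-noise to level a_prev."""
    x0 = [(x - math.sqrt(1.0 - a_t) * e) / math.sqrt(a_t) for x, e in zip(x_t, eps)]
    return [math.sqrt(a_prev) * x0_i + math.sqrt(1.0 - a_prev) * e for x0_i, e in zip(x0, eps)]


def ddim_sample(x_T, predict_eps, alpha_bars, steps):
    """Run the sampler; predict_eps(x, t) stands in for the conditioned U-Net."""
    x = x_T
    for i, t in enumerate(steps):
        a_prev = alpha_bars[steps[i + 1]] if i + 1 < len(steps) else 1.0
        x = ddim_step(x, predict_eps(x, t), alpha_bars[t], a_prev)
    return x
```

With an oracle noise predictor that always returns the true noise, the sampler recovers the clean latent exactly (up to rounding), which is a handy sanity check for an implementation.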

Inference pipeline for TANGLED: converting prompt and view into geometry

TANGLED’s inference pipeline transforms input images into 3D hair geometry through structured steps:

- Pre-processing & Feature Extraction: Input images are converted to lineart, and DINOv2 extracts features, concatenated for multi-view inputs. This captures hair structure across perspectives. Text attributes, if provided, enhance semantic guidance.

- Diffuse the Hair Latent: A noisy latent map is iteratively denoised over 50 DDIM steps, guided by DINOv2 features via cross-attention. The model ensures consistency across single or multi-view inputs. The resulting latent encodes the complete hairstyle.

- Decode Geometry: The latent map is decoded into 3D strand polylines, anchored to the scalp via UV mapping. The decoder ensures accurate strand placement and shape. Outputs are compatible with CGI tools like Blender.

- Post-processing & Output: Braid regions are detected and inpainted with parametric models for topological accuracy. Minimal smoothing ensures production-ready strand curves. Outputs integrate seamlessly into animation pipelines.

The pipeline delivers fast, view-consistent 3D hair from minimal inputs. It automates hair modeling, enabling rapid asset creation for developers.
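The braid post-processing replaces unreliable braid regions with a parametric model. One classic parameterization of a three-strand plait is shown below as an illustrative example; it is not claimed to be TANGLED's braid model, and the length, width, and period values are arbitrary. The phase offsets of 2π/3 keep the three center-lines apart: at any height their separation never drops below √3/2 ≈ 0.87 times `width`.

```python
import math


def three_strand_braid(length=0.3, width=0.02, periods=6, samples=200):
    """Center-lines of a three-strand plait hanging down the -y axis.

    Strand k (k = 0, 1, 2) uses phase phi_k = 2*pi*k/3:
        x = width * sin(theta + phi_k)
        z = (width / 2) * sin(2 * (theta + phi_k))
    where theta = 2*pi*periods*f grows with the fraction f of the braid length.
    """
    strands = []
    for k in range(3):
        phi = 2.0 * math.pi * k / 3.0
        points = []
        for i in range(samples):
            f = i / (samples - 1)                      # 0 at the root, 1 at the tip
            u = 2.0 * math.pi * periods * f + phi
            points.append((width * math.sin(u), -length * f, 0.5 * width * math.sin(2.0 * u)))
        strands.append(points)
    return strands
```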

How PixelHair complements TANGLED by providing strand-accurate grooms and real-time groom assets

PixelHair enhances TANGLED by providing high-quality, strand-accurate 3D hair grooms for training and production:

- Ground Truth and Training Data: PixelHair’s artist-crafted grooms offer realistic strand data, improving TANGLED’s training with diverse styles. These assets fill gaps in MultiHair, enhancing realism in curls and braids. Training on PixelHair ensures authentic strand details and volume.

- Production-Ready Assets: PixelHair grooms are optimized for Unreal Engine and Blender, allowing TANGLED outputs to be refined with production-ready assets. They simplify integration into real-time pipelines. Developers can use PixelHair to enhance TANGLED’s generated geometry.

- Real-Time Groom Assets: PixelHair’s MetaHuman-compatible assets support real-time physics and LODs, ideal for games. TANGLED outputs can be mapped to PixelHair grooms for instant usability. This synergy accelerates workflows for dynamic applications.

PixelHair bridges TANGLED’s generative outputs to professional-grade assets. It ensures high-fidelity, simulation-ready hair for real-time and CG pipelines.

Using PixelHair to train for strand realism, symmetry, curliness and accuracy in TANGLED-generated hair

PixelHair improves TANGLED’s output quality in key areas:

- Strand Realism: PixelHair’s realistic strand details, like flyaways and tapering, enhance TANGLED’s training for authentic hair textures. Training on PixelHair’s diverse styles ensures natural strand clumping and variance. This improves TANGLED’s output realism across hair types.

- Symmetry: PixelHair’s symmetrical grooms, like twin braids, teach TANGLED to maintain balance in appropriate styles. Training with mirrored renders reinforces left-right consistency. This reduces random asymmetries in generated hair.

- Curliness: PixelHair’s curly and coily styles provide accurate curl patterns, improving TANGLED’s representation of complex textures. Training on these assets ensures realistic curl clustering and bounce. TANGLED outputs better match diverse curl types.

- Accuracy: PixelHair can serve as a benchmark for geometric fidelity, allowing TANGLED’s errors to be measured and reduced through direct comparison. Training with PixelHair’s strand data would be expected to improve Chamfer distance and IoU. This would help TANGLED’s outputs align more closely with ground truth.

PixelHair enhances TANGLED’s ability to produce realistic, symmetrical, and accurate curly hair. It leverages artist-crafted grooms to elevate AI-generated outputs to production standards.
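Mirrored training pairs, as described under Symmetry, can be generated by reflecting the groom across the head's mid-plane and flipping the matching image. The sketch assumes x is the left-right axis with the sagittal plane at x = 0; in a UV-based latent, mirrored roots would also need to be re-mapped to mirrored UV coordinates, which is not shown.

```python
def mirror_strands(strands):
    """Reflect every strand point across the sagittal plane x = 0 (x assumed left-right)."""
    return [[(-x, y, z) for (x, y, z) in strand] for strand in strands]


def flip_image(image):
    """Horizontally flip an image stored as a list of pixel rows."""
    return [list(reversed(row)) for row in image]


groom = [[(0.05, 0.1, 0.0), (0.07, 0.0, 0.01)]]   # one strand on one side of the head
print(mirror_strands(groom))
```

For an asymmetric style such as a deep side part, mirroring yields a valid hairstyle parted on the other side rather than a symmetric one, so it adds variety without teaching false symmetry.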

Why PixelHair offers production-quality samples for ML training

PixelHair provides high-quality hair assets ideal for training ML models like TANGLED:

- Authentic and Polished Geometry: PixelHair’s artist-crafted models offer realistic, vetted geometry for training. They replicate real hairstyles with subtle details, ensuring high-quality outputs. Training on these assets elevates TANGLED’s realism to industry standards.

- Consistency and Labeling: PixelHair’s structured style labels enable supervised learning with clear attribute mappings. This organizes training data effectively, improving style control. Consistent labeling ensures TANGLED learns precise hairstyle categories.

- Diversity with Quality: PixelHair’s diverse styles, from afros to braids, maintain high quality, broadening TANGLED’s generalization. This prevents overfitting to low-quality data. The variety supports robust training across cultural and modern hairstyles.

- Ready-made for Integration: PixelHair’s Blender-compatible assets streamline synthetic data generation with automated rendering. Clean, optimized models reduce data preparation time. This ensures efficient, high-quality training pipelines.

- Licensing for AI: PixelHair offers an AI training license that permits using its assets for model training, supporting commercial applications. This removes a common barrier to using premium assets. Following its terms helps ensure that models trained on PixelHair data can be deployed without legal issues.

PixelHair’s production-quality samples enhance TANGLED’s training, ensuring realistic, reliable outputs. They provide a benchmark for professional-grade hair generation.

Evaluating hair reconstruction quality: metrics beyond Chamfer distance

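Evaluating 3D hair generation takes more than Chamfer distance. The TANGLED paper reports point-cloud IoU, CLIP score, and Chamfer distance alongside a user study; the other metrics below are common complements for a fuller assessment: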

- Point Cloud IoU: Measures volume overlap between generated and ground-truth hair, ensuring correct shape capture. High IoU indicates accurate hair placement and bulk. It complements Chamfer by focusing on overall volume.

- CLIP Score (Semantic Consistency): Evaluates whether rendered hair matches input style descriptions via CLIP similarity. High scores indicate semantic accuracy, like correct bangs or buns. This checks style fidelity beyond geometry.

- Strand-level Precision/Recall: Assesses strand matching within tolerance, focusing on individual strand accuracy. It quantifies how many strands align with ground truth. This metric highlights detailed reconstruction quality.

- Visual evaluation and User Study: Human studies, with 84% preferring TANGLED’s outputs, assess realism and appeal. This captures perceptual quality not covered by numerical metrics. It validates user satisfaction with generated hair.

- Temporal or Multi-view Consistency Metrics: Measures output stability across views, ensuring low variance in reconstructions. This confirms view-consistent geometry. It’s critical for multi-angle coherence.

- Beyond Geometry – Rendered Realism Metrics: Uses vision metrics like SSIM or LPIPS to compare rendered hair realism. These assess visual plausibility against real hair images. They complement geometric evaluations for holistic quality.

These metrics ensure comprehensive evaluation of TANGLED’s hair generation. They balance geometric accuracy, semantic fidelity, and perceptual quality for robust assessment.
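Chamfer distance and point-cloud IoU are easy to compute on sampled strand points. The sketch below uses brute-force nearest neighbors and a voxel-occupancy definition of IoU; the voxel size and the squared-distance, mean-based Chamfer convention are assumptions, since papers differ on these details.

```python
import math


def chamfer_distance(a, b):
    """Symmetric Chamfer distance: mean squared nearest-neighbor distance, both directions.
    Brute force O(len(a) * len(b)); real strand clouds call for a KD-tree."""
    def one_way(src, dst):
        return sum(min(math.dist(p, q) ** 2 for q in dst) for p in src) / len(src)
    return one_way(a, b) + one_way(b, a)


def voxel_iou(a, b, voxel_size=0.01):
    """Point-cloud IoU via voxel occupancy: shared voxels / voxels occupied by either cloud."""
    def occupied(points):
        return {tuple(math.floor(c / voxel_size) for c in p) for p in points}
    va, vb = occupied(a), occupied(b)
    union = va | vb
    return len(va & vb) / len(union) if union else 1.0
```

The two metrics are complementary: Chamfer distance is sensitive to small point offsets, while IoU rewards getting the overall hair volume right regardless of exact strand placement.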

Training AI Models with PixelHair: Best Practices and Considerations

Best practices for training TANGLED with PixelHair include:

- Real-time inference validation: Validate TANGLED outputs in Unreal Engine or Unity to ensure real-time performance. Compare with PixelHair assets to optimize strand count and physics. This catches integration issues early.

- Synthetic data generation: Render PixelHair assets with varied lighting, angles, and styles to create diverse training data. Use style transfer to prevent overfitting to one aesthetic. This enhances TANGLED’s robustness across inputs.

- Benchmarking strand fidelity: Compare TANGLED outputs to PixelHair grooms using Chamfer and IoU metrics. Visual side-by-side comparisons identify specific errors. This iterative benchmarking improves geometric accuracy.

- Extending training sets for generalization across style and volume: Add PixelHair’s extreme styles, like high-volume afros or long ponytails, to training. Augment with synthetic variations for broader coverage. This ensures TANGLED generalizes to diverse hairstyles.

Following these practices ensures TANGLED leverages PixelHair’s high-quality assets effectively. They enhance model robustness, fidelity, and applicability in real-world scenarios.
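For synthetic data generation, a seeded sampler of render settings keeps datasets reproducible. The fields, ranges, and style names below are hypothetical placeholders; the resulting configs would be passed to whatever rendering script (for example in Blender) produces the images.

```python
import random
from dataclasses import dataclass


@dataclass
class RenderConfig:
    yaw_deg: float
    pitch_deg: float
    light_elevation_deg: float
    light_intensity: float
    style: str


# Hypothetical style names; tune the list and ranges to the target input domain.
STYLES = ["photoreal", "toon", "sketch", "painterly"]


def sample_render_configs(n, seed=0):
    """Draw n varied render settings; the same seed always yields the same list."""
    rng = random.Random(seed)
    return [
        RenderConfig(
            yaw_deg=rng.uniform(0.0, 360.0),
            pitch_deg=rng.uniform(-20.0, 20.0),
            light_elevation_deg=rng.uniform(10.0, 80.0),
            light_intensity=rng.uniform(0.5, 2.0),
            style=rng.choice(STYLES),
        )
        for _ in range(n)
    ]
```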

The Role of The View Keeper in Evaluating Generated Hair Strands

The View Keeper, a Blender add-on, streamlines evaluation of TANGLED’s hair strands by managing multiple camera views. It automates rendering from preset angles, ensuring consistent multi-view inspection. This reveals inconsistencies, like thin side profiles, not visible in metrics like Chamfer. The add-on supports batch rendering for efficient dataset creation and QA. It ensures fair comparisons by standardizing viewpoints across model outputs.

By providing a 360° view of generated hair, The View Keeper verifies view-consistency. It enhances evaluation efficiency, ensuring thorough assessment of 3D hair quality.
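Standardized viewpoints like those The View Keeper manages can be described as evenly spaced cameras on a circle around the head. The sketch below only computes positions and look-at directions in Blender's Z-up convention; it does not use The View Keeper's own API, and the radius and head-height defaults are assumptions.

```python
import math


def turntable_cameras(n_views=8, radius=1.5, target=(0.0, 0.0, 1.7)):
    """Evenly spaced cameras on a horizontal circle around `target` (Z-up, as in Blender),
    each with a unit forward vector pointing at the target."""
    cams = []
    for i in range(n_views):
        angle = 2.0 * math.pi * i / n_views
        pos = (target[0] + radius * math.cos(angle), target[1] + radius * math.sin(angle), target[2])
        d = tuple(t - p for t, p in zip(target, pos))
        n = math.sqrt(sum(c * c for c in d))
        cams.append({"position": pos, "forward": tuple(c / n for c in d)})
    return cams


for cam in turntable_cameras(n_views=4):
    print(cam["position"])
```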

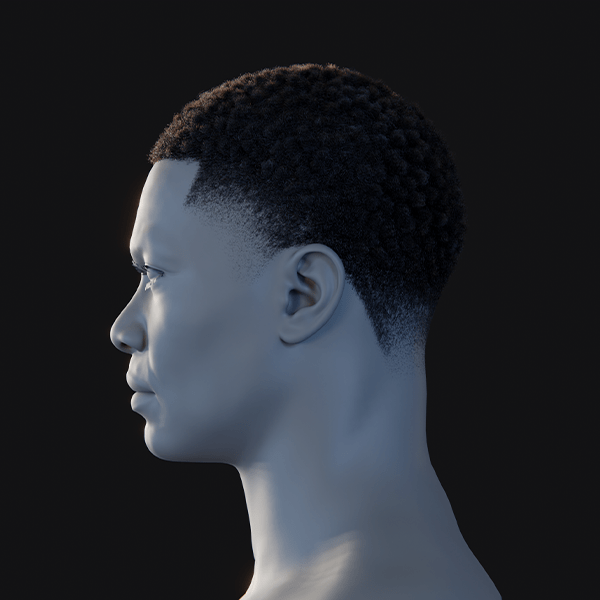

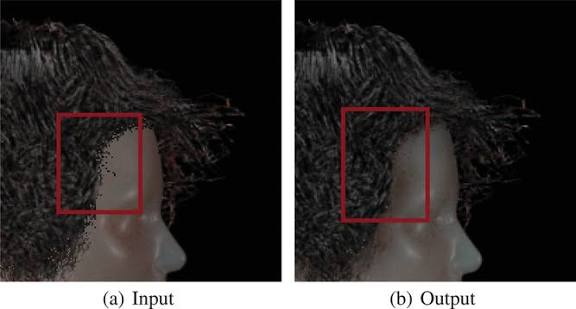

Limitations of TANGLED: failure modes on dense afro styles, occlusion-heavy inputs, and synthetic textures

TANGLED faces challenges in specific scenarios:

- Ultra-Dense and Micro-Detail Hair (e.g. dense afros): TANGLED struggles with ultra-dense afros, producing smoothed outputs due to dataset and resolution limits. Fine ringlets may appear as a fuzzy volume, missing wispy details. More diverse, high-resolution data could mitigate this.

- Occlusion-heavy inputs (extreme poses or partially visible hair): Heavy occlusions, like hidden hair or extreme angles, lead to inaccurate reconstructions. The model may generate incorrect braid anchors or generic shapes. Multiple views or better priors could improve handling.

- Synthetic or Unusual Textures: Non-natural textures, like ribbon-like or glowing hair, confuse lineart extraction, leading to poor geometry. The model interprets these as standard hair, losing unique patterns. Training on diverse textures could address this.

These limitations highlight areas for improvement, like denser datasets or occlusion handling. Future integration with tools like PixelHair may reduce these failure modes.

Future directions for TANGLED: prompt-to-groom pipelines, NeRF integration, and temporal strand coherence

TANGLED’s future enhancements include:

- Prompt-to-Groom Pipelines: Develop text-to-hair pipelines for natural language input, enabling interactive design with attribute controls. This would allow hairstyle creation without images. It could revolutionize rapid prototyping for artists.

- NeRF Integration: Integrate Neural Radiance Fields to refine hair appearance and geometry, capturing color and lighting. NeRFs could enhance occlusion handling and photorealism. This would combine TANGLED’s strands with visual fidelity.

- Temporal Strand Coherence: Ensure hair consistency across animation frames using temporal diffusion or physics integration. This prevents flickering in dynamic scenes. It enables TANGLED for video and game applications.

- Enhanced Braid and Topology Control: Incorporate algorithmic braid patterns and parting controls for precise structured hairstyles. This improves complex style generation. It enhances user control over outputs.

- Quality and Detail Improvements: Increase latent resolution and dataset size to capture ultra-fine strands and diverse styles. Adding color output could enhance realism. This addresses current limitations for broader applicability.

These directions aim to make TANGLED more user-friendly and integrated, supporting dynamic, photorealistic hair generation. They position TANGLED for advanced applications in animation and games.

Conclusion: Combining TANGLED and PixelHair for end-to-end pipelines — from prompt to production

TANGLED and PixelHair each offer powerful capabilities on their own, but together they form an end-to-end pipeline from prompt to production-ready hair assets. By combining TANGLED’s AI-driven generation with PixelHair’s library of high-quality hair models, developers can harness the best of both worlds: rapid creative exploration and dependable final asset quality. In a practical workflow, one might use TANGLED to generate a 3D hairstyle from a concept – say a quick sketch or a textual idea – instantly visualizing a new hair design.

This output gives a clear direction and can be iterated or adjusted within minutes (versus the days it might take an artist to model from scratch). Once a satisfactory hair shape is achieved, PixelHair comes into play for polish and integration. The TANGLED-generated strands can be cross-referenced with PixelHair’s nearest match; perhaps the model picked up a style that resembles one of PixelHair’s offerings (e.g., a certain braid pattern). The PixelHair asset can then be used to either fully replace the generated hair (if an exact match is found) or guide its refinement, for instance by snapping the AI strands to the PixelHair strands or transferring texture properties.

In a pipeline context, TANGLED could even be embedded as a tool within DCC software (like a Blender plugin) where an artist uses it to prototype hair, and then the plugin suggests a PixelHair asset that matches, which the artist can then apply materials to and tweak if necessary.

The result is a production-ready groom that came from an AI suggestion but is as good as a hand-crafted model. This dramatically cuts down development time for new character hairstyles in games or films. It also allows for mass customization – using AI, a game could create thousands of unique NPC hairstyles on the fly, but before shipping, those could be filtered or snapped to a set of PixelHair-approved styles to ensure consistency and quality.

Another synergy is in the training loop: using PixelHair to continuously improve TANGLED (as discussed) creates a virtuous cycle where the AI gets better and requires less touch-up. Eventually, one could foresee an automated pipeline: input a prompt (like “generate 50 variations of fantasy elf hairstyles”), have TANGLED produce them, automatically match each to a PixelHair groom or refine them to PixelHair quality, and output directly to an Unreal Engine asset library. This pipeline from prompt to production would have been almost unthinkable years ago – hair was too labor-intensive and specialized – but combining generative AI and curated assets makes it feasible.

Frequently Asked Questions (FAQs)

- What inputs does TANGLED require to generate a 3D hairstyle?

TANGLED generates a 3D hairstyle primarily from one or more 2D images, such as photos, sketches, or artwork, with multiple angles improving accuracy. It does not require a text prompt, relying on visual input to produce a 3D hair model. The training data includes text descriptors for semantics, but the current pipeline is image-driven, with potential future support for text-based generation.

- How does TANGLED ensure the generated hair is consistent from all views?

TANGLED ensures view-consistent 3D hair by training on multiple simultaneous views of hairstyles, using a latent diffusion model conditioned on line drawings from various angles. It generates a coherent 3D representation that looks plausible from any angle, even from a single input view, by leveraging learned priors. When multiple views are provided, it merges features to satisfy each viewpoint, ensuring no missing patches or incorrect geometry.

- What exactly is the MultiHair dataset and can I get access to it?

MultiHair is a custom dataset of 457 diverse hairstyles, including braids, curls, and updos, with up to 72 multi-angle photos/renders per style, line art, and textual annotations. It was critical for training TANGLED but is not confirmed as publicly available; it may be accessible later via the authors’ project page or upon request. Without it, retraining TANGLED would require a similar dataset of 3D hair models and multi-view images.

- Does TANGLED require specialized hardware or a cluster to run?

TANGLED’s training was completed on a single NVIDIA RTX 3090 GPU over 10 days, and inference takes seconds to minutes on a similar GPU with 24 GB VRAM. No supercomputer or multi-GPU setup is needed, as the diffusion model runs efficiently with optimizations like DDIM and half-precision. A high-end consumer GPU is sufficient for generating hairstyles, such as for game characters, without distributed training.

- How does PixelHair integration work – do I train TANGLED on PixelHair, or use PixelHair after generation?

PixelHair can enhance TANGLED by providing rendered images for training to improve realism or by refining/replacing generated hair post-inference for production quality. During training, PixelHair’s 3D models augment the dataset, while post-generation, its assets can be swapped in or used to adjust strand texture and placement. Integration depends on the pipeline, serving as data augmentation or a manual/automated handoff for content creation.

- Can TANGLED handle different types of hair, like very curly or braided hair?

TANGLED handles diverse hair types, including curly, coiled, and braided styles like afros, dreadlocks, and cornrows, trained on the varied MultiHair dataset. A braid inpainting module ensures coherent braid structures, and it performs well on volumetric curls, though extremely detailed styles may be simplified. User studies show it outperforms prior models, managing most hair types effectively despite some limitations with highly intricate styles.

- What format is the output hair model in, and how do I use it in Blender or Unreal?

TANGLED outputs 3D polylines, exportable as .FBX, Alembic, or similar formats, with Alembic recommended for hair curves. In Blender, these curves serve as hair guides for particle hair systems, while in Unreal, they import as groom assets for the Groom system. The strands integrate into standard CG pipelines, requiring minor alignment with scalp meshes for rendering or simulation with appropriate shaders.

- How is TANGLED different from using just a photogrammetry or multi-view stereo approach to capture hair?

Unlike photogrammetry, which struggles with hair’s translucency and thinness, TANGLED uses a learned prior to generate 3D hair from minimal input, inferring internal strands. It produces explicit strand curves from even a single image, unlike the noisy point clouds of stereo methods, offering cleaner, semantically meaningful output. While photogrammetry aims for exact reconstruction, TANGLED’s generative approach better handles hair’s complexities, though it may slightly deviate from the input.

- Is TANGLED’s output suitable for animation – can the hair move?

TANGLED outputs static 3D strands suitable for animation when used with physics engines like Blender’s hair dynamics or Unreal’s Chaos groom system. The strand geometry supports simulation as soft bodies or ropes, but TANGLED itself does not generate motion, requiring separate simulation tools. Minor cleanup may ensure simulation-readiness, but the output is compatible with standard hair simulation workflows, similar to artist-created grooms.

- How does TANGLED compare with existing hair modeling tools or plugins?

TANGLED automates hair generation from references, unlike manual tools like XGen or Blender’s particle hair, which require time-intensive grooming. It generates complex styles faster than traditional methods, though expert artists may achieve more tailored results manually, and complements tools by providing a base model for refinement. Compared to other AI tools like HairGAN, TANGLED offers superior accuracy and explicit strands, enabling unique style creation beyond presets like MetaHuman’s.

Conclusion

TANGLED represents a significant leap forward in automating 3D hair generation, demonstrating that diffusion models combined with rich datasets can tackle one of the most complex aspects of digital humans. By integrating this AI approach with resources like PixelHair and tools like The View Keeper, developers can achieve a pipeline that is both creative and reliable – dramatically cutting down the time to create realistic hair while maintaining artistic quality.

While challenges remain in handling the most extreme hairstyles and ensuring perfect detail, the progress is undeniable. The synergy of learned models and curated assets hints at a future where designing a digital character’s hair could be as easy as describing it or providing a reference photo, with the AI doing the heavy lifting and experts fine-tuning the final touches. In conclusion, the combination of TANGLED’s generative power and PixelHair’s realism paves the way for faster, more inclusive, and innovative hair creation in 3D, bringing us closer to truly lifelike virtual characters across games, films, and the metaverse.

Sources and citations

- Pengyu Long et al. (2025). TANGLED: Generating 3D Hair Strands from Images with Arbitrary Styles and Viewpoints.

- TANGLED paper, Sec. 3 (Dataset Construction): the MultiHair dataset, its multi-view renders, and annotations. Link: TANGLED, Sec. 3 Dataset Construction. Source: arXiv.

- TANGLED paper, Sec. 4.1: the latent diffusion model, DINOv2 feature conditioning, and the UV-mapped hair latent. Link: TANGLED, Sec. 4.1 Hair Strands Latent Diffusion Model. Source: arXiv.

- TANGLED paper, Sec. 4.2: multi-view training and inference support for arbitrary viewpoints and multi-image input. Link: TANGLED, Sec. 4.2 Multi-view Lineart Conditioning. Source: arXiv.

- TANGLED paper, Sec. 5: comparison between TANGLED and HAAR, highlighting semantic alignment issues in text-based HAAR and user study preferences. Link: TANGLED, Sec. 5 Results. Source: arXiv.

- TANGLED paper, Sec. 5.3: metrics used for hair quality assessment (Point Cloud IoU, CLIP score, Chamfer Distance). Link: TANGLED, Sec. 5.3 Evaluation. Source: arXiv.

- TANGLED paper, Sec. 6: current limitations (strand detail resolution, extreme-pose braid issues, pixel-level alignment limits). Link: TANGLED, Sec. 6 Discussions. Source: arXiv.

- Yelzkizi, PixelHair 3D Hair Assets page: the realism and range of PixelHair’s ready-made hairstyles and their suitability for AI training data. Links: PixelHair 3D Hair Assets; AI Training License Agreement for PixelHair. Source: Yelzkizi.

- Yelzkizi, PixelHair categories: the breadth of hair types available (Braids, Curls/Afro, Dreads, etc.), underscoring dataset diversity for training. Links: Pixel Hair; Braids; Curls/Afro; Dreads. Source: Yelzkizi.

- Yelzkizi, The View Keeper breakdown: the ability to render multiple camera angles simultaneously for efficient multi-view evaluation. Link: Can you animate multiple cameras in one Blender project? Source: Yelzkizi.

- Yelzkizi, The View Keeper: the challenges of manual multi-camera management that the add-on solves (repetitive adjustments, errors). Link: Blender 3D Camera Mastery With The View Keeper. Source: Yelzkizi.

- DINOv2 GitHub README: DINOv2 produces high-performance, robust visual features usable across domains without fine-tuning, the rationale for its use as a backbone. Link: DINOv2 README. Source: GitHub (facebookresearch/dinov2).
