In early April 2026, the Academy Software Foundation welcomed OpenPGL (Open Path Guiding Library) as a hosted project, starting in the ASWF “Sandbox” stage. The governance move is designed to keep a widely adopted path guiding library alive and evolving after its original corporate stewardship ended.

What is OpenPGL (Open Path Guiding Library)

OpenPGL is an open-source library intended to make “path guiding” practical to integrate into path tracing renderers, by providing implemented guiding data structures and training algorithms rather than leaving every renderer team to re-implement these components independently.

At a technical level, the library learns a “guiding field” during rendering (using radiance/importance samples generated while rendering), then allows a renderer to query a local distribution at path vertices to guide sampling decisions—especially direction sampling on surfaces and in volumes.

OpenPGL exposes both a C API and a C++ wrapper, and positions itself as production-minded software: it aims to be robust, well tested, and reusable across many renderers rather than being bound to a single engine or studio pipeline.

Academy Software Foundation OpenPGL adoption explained

The ASWF announcement (published April 8, 2026) frames OpenPGL’s value proposition in industry terms: path guiding improves rendering efficiency by using information about scene light distribution to make better importance sampling decisions, but production-grade implementations are hard to build and maintain internally—OpenPGL exists to lower that barrier.

A key reason the adoption matters is simple continuity: OpenPGL had meaningful real-world uptake (in multiple major renderers), yet its long-term development became uncertain after Intel stopped active work; ASWF hosting provides a neutral, community-driven framework for continued maintenance and evolution.

The ASWF announcement also includes endorsements from production users (including studios and renderer vendors) that emphasise two recurring themes: measurable efficiency gains in complex lighting, and reduced integration/maintenance burden thanks to a “simple API” and shared tooling.

OpenPGL ASWF Sandbox project meaning and next steps

In ASWF’s project lifecycle, “Sandbox” is the earliest hosted stage: it is explicitly intended to provide structure and resources for young or transitioning projects. OpenPGL’s stated aim is to reach “Incubation” within about a year.

Operationally, the ASWF announcement states that OpenPGL’s ongoing development will be guided by a Technical Steering Committee led by maintainer Sebastian Herholz, and it points contributors toward the project’s community channels (including an ASWF Slack channel).

ASWF’s Technical Advisory Council publishes an annual review process for hosted projects, and its published schedule lists “Open Path Guiding Library (OpenPGL)” as Sandbox, with an initial acceptance date (2026‑01‑12) and a next review date (2026‑12‑09).

What is path guiding in path tracing renderers

Path guiding is a family of adaptive variance-reduction techniques for path tracing: rather than sampling directions (or other decisions) only from “static” heuristics (for example, BSDF importance sampling and direct light sampling), a path guiding system learns from previously traced paths to build a better proposal distribution—closer to the scene’s actual light transport—then uses it to guide future samples.

Industry course notes for SIGGRAPH 2025 summarise why the idea is attractive in production: over the past decade, guiding algorithms have moved from research into production renderers because they can improve the efficiency of difficult effects like complex indirect illumination, caustics, volumetric multi-scattering, and heavily occluded direct lighting—without requiring a fundamentally different renderer architecture than a path tracer.

That said, path guiding is not a single algorithm. It is an approach space with many possible representations (trees, parametric mixtures, hybrid schemes) and many “integration details” that affect robustness and correctness (for example, how guiding interacts with next-event estimation and multiple importance sampling).

How OpenPGL reduces noise and speeds up render convergence

OpenPGL’s core mechanism is learning-and-querying: it learns an approximation to the scene’s light distribution during rendering, then uses that approximation to guide new sampling decisions toward directions that are more likely to contribute strongly to the rendered image.

The OpenPGL repository describes this in renderer-facing terms: a guiding field is updated on a per-frame basis using samples collected during rendering, and at each vertex along a random path, the field can be queried to guide local choices (notably direction sampling).
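The learn-then-query loop described above can be illustrated with a toy one-dimensional example. This is only a sketch under stated assumptions: `HistogramField`, `integrand`, and `estimate` are hypothetical names invented for illustration, the "field" is a simple histogram rather than any of OpenPGL's representations, and no OpenPGL API is called. A first pass samples uniformly and records contributions, the field is refitted from those samples, and later passes draw samples from the learned distribution.

```python
import random

def integrand(x):
    """Toy 'incident radiance': a narrow bright band on [0, 1), dim elsewhere."""
    return 10.0 if 0.70 <= x < 0.75 else 0.01

class HistogramField:
    """Hypothetical 1D stand-in for a guiding field: a piecewise-constant pdf."""

    def __init__(self, bins=20):
        self.bins = bins
        self.weights = [1.0 / bins] * bins   # uniform until something is learned

    def update(self, samples):
        """Refit bin probabilities from (x, contribution) training samples."""
        sums = [0.0] * self.bins
        for x, value in samples:
            sums[min(int(x * self.bins), self.bins - 1)] += value
        total = sum(sums)
        if total <= 0.0:
            return                           # nothing useful learned, keep old fit
        # Blend with a uniform floor so every bin keeps a non-zero probability.
        self.weights = [0.9 * s / total + 0.1 / self.bins for s in sums]

    def pdf(self, x):
        return self.weights[min(int(x * self.bins), self.bins - 1)] * self.bins

    def sample(self, rng):
        u, cumulative = rng.random(), 0.0
        for i, w in enumerate(self.weights):
            cumulative += w
            if u < cumulative:
                return (i + rng.random()) / self.bins
        return (self.bins - 1 + rng.random()) / self.bins  # round-off guard

def estimate(field, n, rng):
    """Importance-sampled estimate of the integral, plus the training samples."""
    samples, total = [], 0.0
    for _ in range(n):
        x = field.sample(rng)
        w = integrand(x) / field.pdf(x)
        samples.append((x, w))
        total += w
    return total / n, samples

def variance_of_estimates(field, n, runs, rng):
    """Sample variance across independent estimates of n samples each."""
    results = [estimate(field, n, rng)[0] for _ in range(runs)]
    mean = sum(results) / runs
    return sum((r - mean) ** 2 for r in results) / (runs - 1)

rng = random.Random(7)
uniform = HistogramField()
_, training = estimate(uniform, 2000, rng)   # "training" pass with no guidance
guided = HistogramField()
guided.update(training)                      # learn from the recorded samples

var_uniform = variance_of_estimates(uniform, 200, 50, rng)
var_guided = variance_of_estimates(guided, 200, 50, rng)
print("variance without guiding:", var_uniform)
print("variance with guiding:   ", var_guided)
```

Both estimators converge to the same integral; the learned proposal simply spends more samples inside the bright band, which is where the variance comes from in this toy scene.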

This process targets classic “wasted samples” situations in path tracing—cases where many samples contribute near-zero energy because light is hard to discover (e.g., small bright regions, heavily indirect interiors, or multi-scattered volumes). Intel’s original technical explainer states that this optimisation of “exploration of complex light transport” leads to substantial noise reduction, enabling similar realism at the same or better performance.

Practical usage guidance from production renderers also stresses an important nuance: enabling path guiding can introduce overhead and its payoff is scene-dependent. For example, Chaos notes that path guiding can significantly improve render times in some scenes and offer only minor benefit in others—especially if GI/reflections are not the dominant noise source.
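The trade-off can be made concrete with the usual Monte Carlo efficiency measure, the inverse of variance multiplied by cost. The sketch below is illustrative arithmetic only: the function names are hypothetical and the numbers in the usage lines are invented, not measurements from Chaos or any other source.

```python
def efficiency(variance, seconds):
    """Monte Carlo efficiency: higher is better for a given estimator."""
    return 1.0 / (variance * seconds)

def guiding_speedup(variance_ratio, cost_ratio):
    """Time-to-equal-noise speedup of guided over unguided rendering.

    variance_ratio: unguided variance / guided variance at equal sample count.
    cost_ratio:     guided time per sample / unguided time per sample.
    """
    return variance_ratio / cost_ratio

# Made-up numbers: an indirect-heavy interior versus a directly lit exterior,
# both paying the same assumed 30% per-sample overhead for guiding.
interior = guiding_speedup(variance_ratio=4.0, cost_ratio=1.3)
exterior = guiding_speedup(variance_ratio=1.1, cost_ratio=1.3)
print("interior speedup:", interior)
print("exterior speedup:", exterior)
```

A value above 1 means guiding pays for itself; a value below 1 means the overhead outweighs the variance reduction, which is the situation the vendor guidance warns about.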

OpenPGL vs other path guiding techniques: what’s different

OpenPGL is best understood as a shared implementation platform rather than a single new guiding paper: it aims to package multiple state-of-the-art guiding representations and training methods into a reusable library, so renderer and studio teams converge on common infrastructure instead of diverging into incompatible in-house implementations.

Compared to single-method academic systems, OpenPGL’s differentiator is breadth and production hardening. The ASWF project proposal explicitly describes OpenPGL as offering multiple representations—including directional quad-trees and “parallax-aware von Mises-Fisher mixtures”—plus fitting algorithms designed to learn scene light distribution during rendering in a way that is workable for production constraints.

For contrast, a widely-cited guiding approach from 2017 (“Practical Path Guiding”) proposes an SD-tree: a spatial binary tree combined with a directional quadtree, built to store and sample incident radiance; its design is presented as robust and unbiased, with an explicit training-vs-render-time budgeting strategy.

Likewise, SIGGRAPH 2020 work on parallax-aware mixtures highlights a different axis of improvement: a parametric mixture representation that remains accurate across larger spatial regions via parallax-aware warping, and a robust fitting/update scheme that supports incremental training—while also addressing practical integration challenges like smoothly combining next-event estimation with guiding.
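To make the "parametric mixture" idea concrete, the following sketch evaluates a plain von Mises-Fisher mixture on the unit sphere. It is a simplified illustration: it omits the parallax-aware warping and the fitting scheme that the paper contributes, it is not OpenPGL's implementation, and the function names and lobe parameters are assumptions chosen for the example.

```python
import math

def vmf_pdf(direction, mean, kappa):
    """von Mises-Fisher density on the unit sphere (per steradian)."""
    cos_angle = sum(d * m for d, m in zip(direction, mean))
    if kappa < 1e-6:
        return 1.0 / (4.0 * math.pi)         # kappa -> 0 is the uniform sphere
    # Numerically stable form of kappa / (4 pi sinh(kappa)) * exp(kappa * cos).
    norm = kappa / (2.0 * math.pi * (1.0 - math.exp(-2.0 * kappa)))
    return norm * math.exp(kappa * (cos_angle - 1.0))

def mixture_pdf(direction, lobes):
    """lobes: list of (weight, unit mean direction, kappa); weights sum to 1."""
    return sum(w * vmf_pdf(direction, mean, kappa) for w, mean, kappa in lobes)

def integrate_over_sphere(pdf, steps=200):
    """Midpoint-rule quadrature in spherical coordinates (sanity check only)."""
    total = 0.0
    d_theta = math.pi / steps
    d_phi = math.pi / steps                  # 2 * steps samples cover 2 pi
    for i in range(steps):
        theta = (i + 0.5) * d_theta
        sin_theta = math.sin(theta)
        for j in range(2 * steps):
            phi = (j + 0.5) * d_phi
            direction = (sin_theta * math.cos(phi),
                         sin_theta * math.sin(phi),
                         math.cos(theta))
            total += pdf(direction) * sin_theta * d_theta * d_phi
    return total

# Hypothetical two-lobe field: a sharp lobe toward +z and a broad one toward +x.
lobes = [(0.7, (0.0, 0.0, 1.0), 50.0), (0.3, (1.0, 0.0, 0.0), 5.0)]
total_probability = integrate_over_sphere(lambda d: mixture_pdf(d, lobes))
print("mixture integrates to approximately", total_probability)
```

A handful of (weight, mean, kappa) triples is enough to describe the whole directional distribution, which is why such mixtures are compact to store compared with a directional quadtree.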

In OpenPGL’s own repository description, currently supported guiding modes include guiding directional sampling on surfaces and in volumes based on learned incident radiance (or its product with simpler BSDF/phase-function components such as cosine lobes or a single-lobe Henyey–Greenstein phase). That scope signals a pragmatic focus: improve the proposal distribution used for local sampling decisions, and leave high-level renderer policy (MIS strategy, feature toggles, production constraints) to the integrating renderer.
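For readers unfamiliar with the phase function mentioned above, here is a minimal sketch of the single-lobe Henyey-Greenstein density, plus a deliberately simplified discrete "product" with a guiding distribution. The function names are hypothetical, the convention assumed is that `cos_theta = 1` means scattering straight ahead, and the discrete product only illustrates the idea; it is not how OpenPGL computes product distributions internally.

```python
import math

def henyey_greenstein(cos_theta, g):
    """Henyey-Greenstein phase function (per steradian), with -1 < g < 1."""
    denom = 1.0 + g * g - 2.0 * g * cos_theta
    return (1.0 - g * g) / (4.0 * math.pi * denom ** 1.5)

def integrate_phase(g, steps=20000):
    """Integrate over the sphere: 2 pi times the integral over cos_theta."""
    d_mu = 2.0 / steps
    total = 0.0
    for i in range(steps):
        mu = -1.0 + (i + 0.5) * d_mu
        total += henyey_greenstein(mu, g) * d_mu
    return 2.0 * math.pi * total

def product_weights(guide_probs, cos_thetas, g):
    """Multiply discrete guiding probabilities by the HG lobe and renormalise."""
    raw = [p * henyey_greenstein(c, g) for p, c in zip(guide_probs, cos_thetas)]
    total = sum(raw)
    return [r / total for r in raw]

# Three candidate directions (forward, sideways, backward) with a uniform guide.
weights = product_weights([1 / 3, 1 / 3, 1 / 3], [1.0, 0.0, -1.0], g=0.8)
print("phase integral:", integrate_phase(0.8))
print("product weights:", weights)
```

With a strongly forward-scattering medium, the product shifts probability toward the forward direction even when the learned radiance alone shows no preference.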

Why Intel stopped development of OpenPGL and what happens now

OpenPGL was initiated at Intel in 2022 as part of Intel’s oneAPI rendering toolkit ecosystem, and it was positioned from the beginning as an open-source effort to accelerate adoption of path guiding beyond proprietary renderers.

By late 2025, Intel had stopped development due to “restructuring and shifting priorities,” according to the ASWF project proposal materials cited in coverage of the transition. The same explanation was echoed in downstream reporting about Intel’s broader 2025 cost-cutting and open-source pullbacks.

The practical outcome is not merely a rebrand: in ASWF’s model, the project shifts to foundation governance, with ongoing technical direction through a Technical Steering Committee and a broader contributor base spanning vendors and studios already using the library.

OpenPGL in Blender Cycles: current status and benefits

OpenPGL has been adopted and integrated into Blender’s Cycles renderer, according to ASWF’s announcement of the project move.

Intel’s original OpenPGL technical article also describes an integration effort with Cycles as a key early validation path for the library, used to demonstrate noise reduction in difficult indirect illumination and related scenarios.

From an implementation standpoint, OpenPGL is heavily CPU-oriented today (explicitly describing optimisation for SIMD instruction sets such as SSE/AVX families in its README), and the ASWF proposal frames GPU ports as work-in-progress rather than feature-complete. This combination is a useful mental model for “current status”: OpenPGL-based guiding in production is mature enough to ship on CPU, while cross-platform GPU guiding remains an active development frontier.

OpenPGL in V-Ray: path guiding workflow improvements

Chaos documents its integration of OpenPGL as an experimental feature (in V-Ray 6.1), describing the practical workflow as “train during light cache building, then reuse that learned distribution for final rendering.” This is significant because it makes guiding usable in both progressive and bucket workflows once the light cache is computed.

In that integration description, path guiding is framed as improving sampling for both diffuse indirect illumination (GI) and glossy reflections, and it is also described as beneficial for indirect illumination in volumes; Chaos further notes that increasing light cache subdivs can improve guiding effectiveness by providing more training data during the prepass.

Chaos also explicitly cautions that results are scene-dependent: the feature can substantially improve render times for large-indirect-illumination scenes, while offering limited gains when the guiding overhead is not offset by reduced noise.

OpenPGL in Houdini Karma: production rendering implications

ASWF’s announcement lists SideFX’s Karma among the renderers that have adopted and integrated OpenPGL.

Independently, SideFX release notes for Karma describe the addition of “path guiding” as a feature aimed at improving indirect sampling in difficult lighting situations, including caustics and predominantly indirect lighting—precisely the class of scenes where path guiding tends to deliver the biggest convergence improvements.

A later SideFX “What’s new” entry for Karma CPU describes an explicit “indirect guiding” improvement: it is presented as addressing heavy noise in true caustics/dispersion/occlusion scenarios and is described as being able to find paths through windows or water surfaces with true caustics; it is enabled via a Karma Render Settings control (“Enable Indirect Guiding” under Advanced ▸ Sampling ▸ Secondary Samples).

Taken together, the production implication is clear even without assuming exact internal wiring: Karma’s sampling controls are evolving toward more robust guided sampling for hard light transport, and OpenPGL’s stated mission (shared, production-ready guiding infrastructure) aligns directly with these goals.

Disney Hyperion OpenPGL path guiding system overview

ASWF’s announcement contains a joint studio statement saying OpenPGL is an important building block in the “second generation path guiding system” of Walt Disney Animation Studios’ Hyperion renderer, emphasising a “rich set of tools” and a “simple API” that helped simplify implementation and iterate on production challenges.

That same statement asserts that a new path guiding system using OpenPGL was an “invaluable tool” in production of Zootopia 2, including on technically challenging shots involving difficult lighting setups and complex volumetrics.

A public SIGGRAPH 2025 course chapter (“Path Guiding Surfaces and Volumes in Disney’s Hyperion Renderer: A Case Study”) describes the Hyperion effort as a second-generation guiding system for surfaces and volumes, focusing on the engineering challenges of integrating path guiding into a wavefront-style path tracer, along with debugging/visualisation tools and production test results.

Additional public commentary by Yining Karl Li describes the next-generation Hyperion guiding system as being “built on top of” OpenPGL, explicitly tying the production system’s core to the shared library approach.

OpenPGL license and governance under ASWF

OpenPGL is released under the Apache 2.0 licence, as stated in both its GitHub repository metadata and the ASWF project proposal documentation.

Under ASWF hosting, OpenPGL’s development is stated to be guided by a Technical Steering Committee led by maintainer Sebastian Herholz, and the project is intended to operate with open community participation (issues, pull requests, and community discussion channels).

The ASWF proposal also documents practical governance-adjacent details that matter for adopters: OpenPGL uses GitHub for source control and issue tracking; contributions are expected to come through PR-based review; and the project has explicitly identified key dependencies (e.g., oneTBB, Embree source components, optional Open Image Denoise features, and NanoFlann) with their respective licences.

OpenPGL roadmap and community contribution opportunities

The most concrete near-term “roadmap” item under ASWF is lifecycle progression: OpenPGL is starting as a Sandbox project, with an explicit stated goal of graduating to Incubation within a year, and an annual review scheduled for December 9, 2026.

From a technical roadmap perspective, two signals are especially actionable for contributors. First, the ASWF proposal describes GPU ports (CUDA, SYCL, HIP, Metal) as “work in progress,” indicating an open area where community effort could materially change the library’s applicability—particularly for GPU-first production renderers.

Second, the public GitHub issue list shows active requests and engineering topics that map to real adopter needs, including API/serialisation improvements, build portability (e.g., RISC‑V Linux build discussions), and explicit community questions about GPU timelines.

For developer participation, ASWF’s announcement points to project communication channels (including Slack) and positions ASWF hosting as a way for studios, vendors, and researchers to collaborate on a shared path guiding infrastructure rather than diverging into isolated implementations.

OpenPGL GitHub repo and how to build OpenPGL from source

OpenPGL’s repository documentation identifies the published release line (for example, v0.7.1) and emphasises that the project remains pre‑v1.0, with an API that may change across releases—important context for any production integration plan.

The README also documents standard build prerequisites (CMake and a C++ compiler toolchain) and offers a “CMake Superbuild” that fetches dependencies and produces an install directory containing OpenPGL and its dependency stack.

A minimal superbuild flow, mirroring the project’s README, is:

```bash
git clone https://github.com/RenderKit/openpgl.git
cd openpgl
mkdir build
cd build
cmake ../superbuild
cmake --build .
```

For CMake-based consumers, the README describes integrating via the generated CMake configuration, typically by setting openpgl_DIR to the installed package config location (e.g., [openpgl_install]/lib/cmake/openpgl-0.7.1).

How to integrate OpenPGL into a custom renderer

OpenPGL’s integration philosophy is to decouple “renderer wiring” from “guiding internals”: the renderer provides samples and queries distributions during path generation, while OpenPGL owns the data structures and training algorithms behind those distributions.

At the API level, the library provides two surfaces: a C99-conformant C API (via openpgl/openpgl.h) and a C++ wrapper (via openpgl/cpp/OpenPGL.h). This makes it possible to integrate guiding into either C or C++ renderers (or to expose guiding through an internal C boundary in larger systems).

A production-grade integration plan typically has to address five practical requirements, each reflected in public production guidance about integrating guiding systems:

- First, define where guiding is queried in the path tracer (usually at each shading vertex where a new direction is sampled), and define what quantity is being learned (incident radiance/importance) so that the learned distribution is meaningful for the renderer’s estimator.

- Second, ensure guiding plays correctly with the renderer’s existing sampling strategies. Production course notes emphasise that implementers must consider “nitty-gritty” integration details that are often absent from papers, and SIGGRAPH 2020 work on parallax-aware mixtures explicitly discusses integrating next-event estimation smoothly to avoid wasting samples on contributions better handled by NEE.

- Third, decide the training regime: online updates during progressive rendering vs structured prepasses. Chaos describes a concrete production approach (training during a light cache prepass) that allows guiding to benefit both progressive and bucket workflows.

- Fourth, architect for performance and determinism. OpenPGL is designed around CPU SIMD optimisation and multi-threaded construction/training, and its production users highlight that integration can be “straightforward” but still needs careful engineering around robust behaviour and interaction with renderer features.

- Fifth, plan for versioning and API change. The OpenPGL README explicitly warns the library is pre‑v1.0 and the API may change; in practice, this implies pinning versions, building regression scenes, and treating updates as engineering work rather than “drop-in” swaps.
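The second requirement above, combining guiding with an existing sampling strategy, is often handled with a one-sample mixture: pick the guide with some probability, otherwise pick the BSDF, and always divide by the combined density. The sketch below shows that pattern in a toy 1D setting. Everything in it is an assumption for illustration: `sample_mixture`, the stand-in "guide" and "BSDF" distributions, and the `guide_prob` value are hypothetical, and no OpenPGL call is involved.

```python
import math
import random

def sample_mixture(guide_sample, guide_pdf, bsdf_sample, bsdf_pdf, guide_prob, rng):
    """Draw one sample from a guide/BSDF mixture; return (sample, mixture pdf)."""
    if rng.random() < guide_prob:
        x = guide_sample(rng)
    else:
        x = bsdf_sample(rng)
    # The density must account for BOTH strategies, whichever one was chosen.
    pdf = guide_prob * guide_pdf(x) + (1.0 - guide_prob) * bsdf_pdf(x)
    return x, pdf

# Toy stand-ins on [0, 1): the "BSDF" is uniform, the "guide" has pdf 2x.
def bsdf_sample(rng):
    return rng.random()

def bsdf_pdf(x):
    return 1.0

def guide_sample(rng):
    return math.sqrt(rng.random())           # inverse CDF of pdf(x) = 2x

def guide_pdf(x):
    return 2.0 * x

def integrand(x):
    return 3.0 * x * x                       # integrates to exactly 1 on [0, 1)

def estimate(n, guide_prob, rng):
    total = 0.0
    for _ in range(n):
        x, pdf = sample_mixture(guide_sample, guide_pdf,
                                bsdf_sample, bsdf_pdf, guide_prob, rng)
        total += integrand(x) / pdf
    return total / n

result = estimate(20000, guide_prob=0.5, rng=random.Random(3))
print("mixture estimate:", result)
```

Keeping a non-zero BSDF fraction means a poorly trained guide can raise variance but cannot introduce bias, since every direction the BSDF can produce remains reachable. How `guide_prob` is chosen is renderer policy, in line with the division of responsibilities described earlier.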

Frequently Asked Questions (FAQs)

- Is OpenPGL free to use in commercial renderers?

OpenPGL is published under the Apache 2.0 licence, a permissive licence commonly used for commercial and open-source software, and is explicitly described as Apache 2.0 in both the repository documentation and the ASWF proposal materials.

- Does OpenPGL replace a renderer’s denoiser?

No. OpenPGL targets sampling efficiency (variance reduction) by improving how paths are sampled; denoising is a separate class of technique that improves the final image by filtering noise. OpenPGL is described as improving sampling quality and reducing noise generation by better exploring complex light transport.

- Is path guiding always faster than standard path tracing?

Not always. Production guidance explicitly notes scene dependence and overhead: in some scenes guiding improves render times significantly; in others it helps less if the guiding overhead is not offset by reduced variance.

- What kinds of scenes benefit most from OpenPGL-style guiding?

Sources consistently emphasise difficult light transport scenarios: complex indirect illumination, volumes with multi-scattering, occluded lighting, and some caustic-like cases. These are exactly the situations referenced in production course abstracts and renderer vendor documentation.

- Does OpenPGL support volumes as well as surfaces?

Yes: OpenPGL’s repository and Intel’s technical description both mention guiding paths interacting with surfaces and volumes, and the ASWF proposal describes OpenPGL’s goal of supporting production-guiding needs across such scenarios.

- Is OpenPGL GPU-accelerated today?

The ASWF project proposal describes OpenPGL as highly optimised for x86 and ARM CPUs using SIMD vectorisation and describes GPU ports (CUDA, SYCL, HIP, Metal) as “work in progress,” rather than presenting them as fully shipped targets.

- What does “ASWF Sandbox” mean for production users right now?

Sandbox status indicates the project is in the earliest ASWF lifecycle stage, gaining a governance and support framework with the stated goal of progressing toward Incubation, plus scheduled annual review checkpoints that assess maturity and sustainability.

- Where is OpenPGL already used in production?

ASWF’s announcement lists adopter renderers including Cycles, V-Ray, Karma, and Hyperion; the SIGGRAPH 2025 course site similarly lists multiple production renderers in which guiding integrations are discussed.

- What is the safest way to adopt OpenPGL in a pipeline?

The OpenPGL README warns that the API is pre‑v1.0 and may change; production guidance therefore implies version pinning, regression testing on representative scenes, and controlled upgrade cycles rather than unpinned “latest” adoption.

- How can developers contribute after the ASWF move?

ASWF points contributors to project communication channels (including Slack), while the ASWF proposal documents GitHub-based development workflows (issues and PRs). The public GitHub issue tracker provides a concrete backlog of contribution opportunities.

Conclusion

OpenPGL’s move into the Academy Software Foundation as a Sandbox project formalises a shift from single-vendor stewardship to open governance, while preserving and expanding a pragmatic, production-facing path guiding codebase already embedded in multiple major renderers.

Technically, the value proposition remains consistent across sources: learned guiding distributions can reduce variance in the difficult corners of path tracing (complex indirect lighting, volumes, occluded illumination and certain caustic-like effects), improving convergence—often dramatically—when scenes match those characteristics, but with overhead and scene dependence that production integrations must manage.

Sources and Citations

- Academy Software Foundation – “OpenPGL Becomes an Academy Software Foundation Project”

https://www.aswf.io/news/openpgl-becomes-an-academy-software-foundation-project/

- CG Channel – “Academy Software Foundation adopts OpenPGL”

https://www.cgchannel.com/2026/04/aswf-adopts-openpgl-after-intel-ends-development/

- Academy Software Foundation TAC Proposal – “OpenPGL project proposal (scope, license, dependencies)”

https://github.com/AcademySoftwareFoundation/tac/issues/1218

- OpenPGL GitHub Repository – Documentation and build instructions

https://github.com/openpathguidinglibrary/openpgl

- Intel Developer Article – “Open Path Guided Rendering Made Easier”

https://www.intel.com/content/www/us/en/developer/articles/technical/open-path-guided-rendering-made-easier.html

- Chaos (V-Ray) Documentation – Path guiding / OpenPGL integration details

https://docs.chaos.com/display/VRAY/Path+Guiding

- SideFX Documentation – Karma rendering and path guiding / indirect guiding updates

https://www.sidefx.com/docs/houdini/karma/path_guiding.html

- SIGGRAPH 2025 Course – “Path Guiding in Production and Recent Advancements”

https://www.yiningkarlli.com/projects/pathguidingcourse2025/siggraph2025_pathguidinginproduction_abstract.pdf

- Digital Production – “OpenPGL joins ASWF (governance, integrations, production usage)”

https://digitalproduction.com/2026/04/09/openpgl-joins-aswf/

Recommended

- Can You Use Perspective and Orthographic Cameras in Blender?

- OptiX vs CUDA: Comprehensive Comparison of NVIDIA’s Rendering Technologies

- 28 Years Later: The Bone Temple Is Coming to Netflix on March 31, 2026 in the US: Release Date, Cast, Plot, and Streaming Details

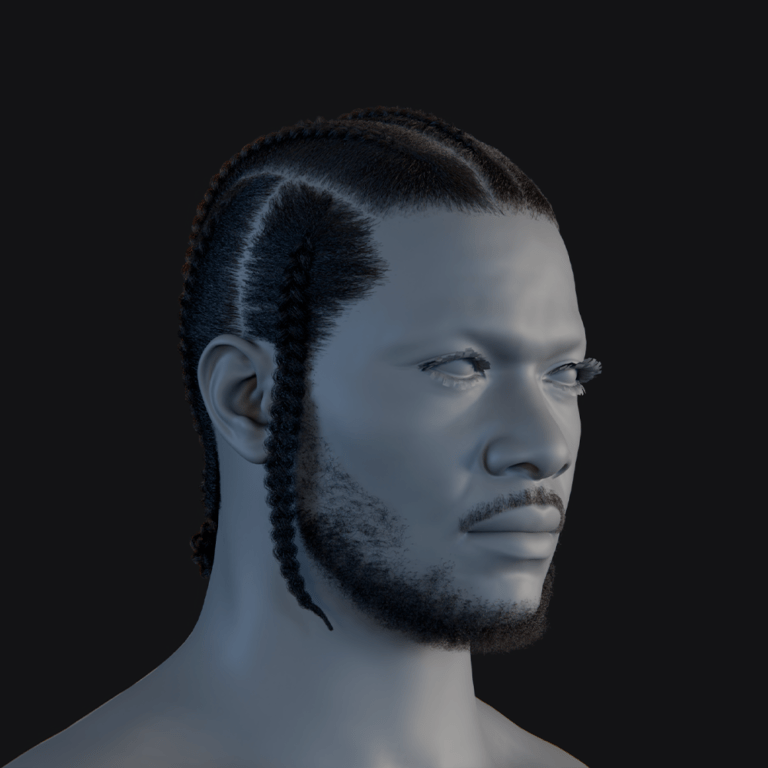

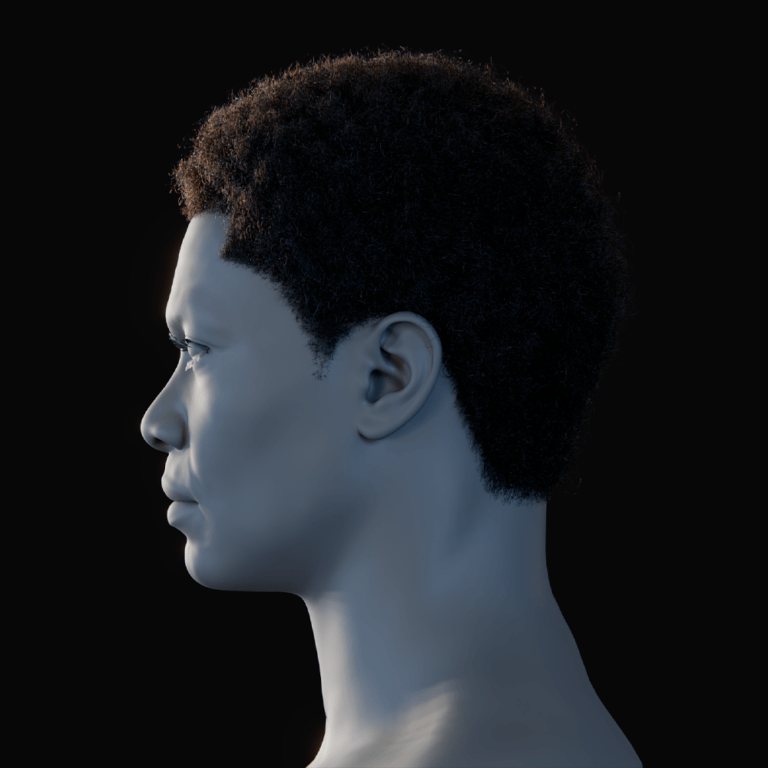

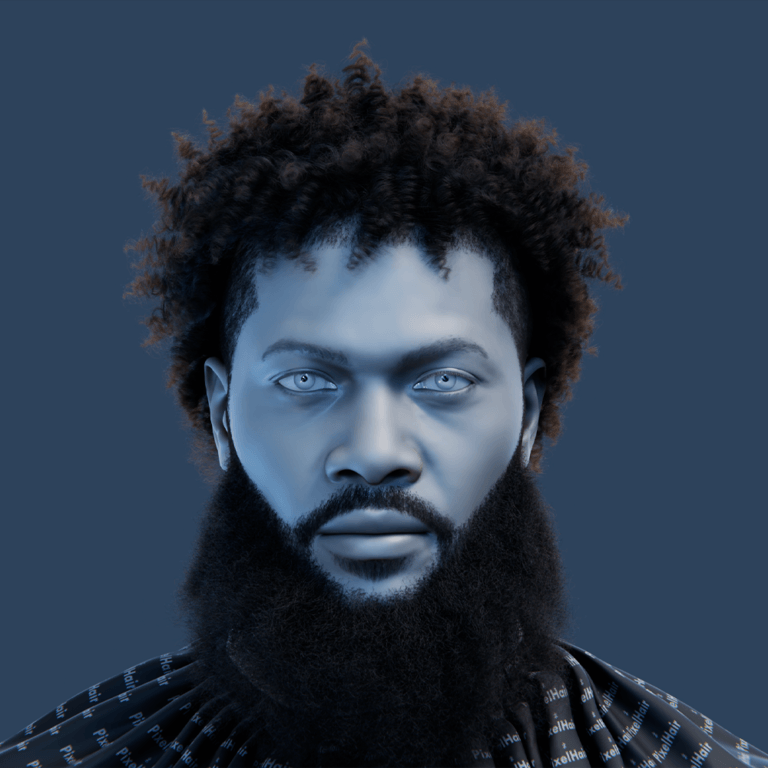

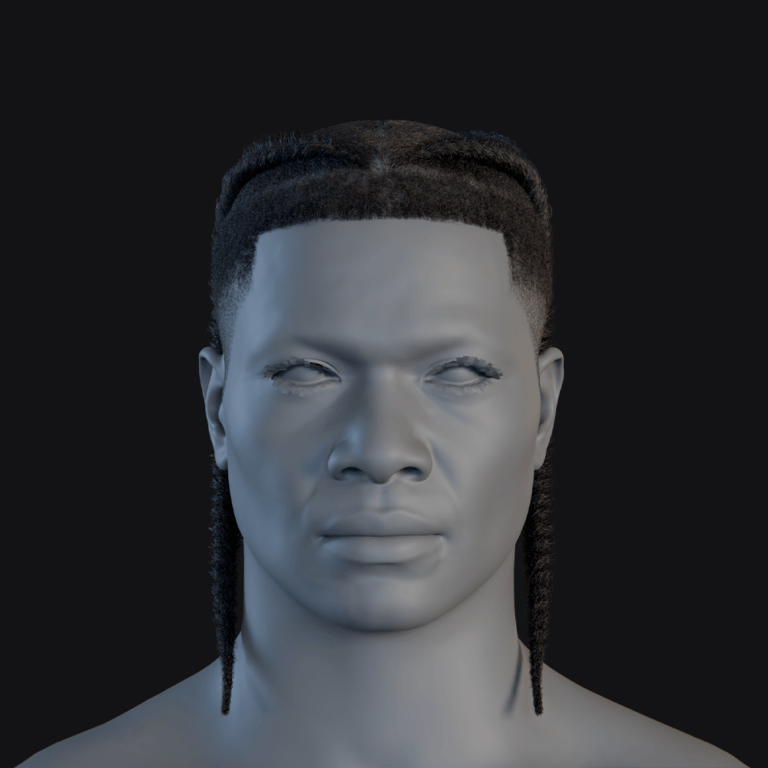

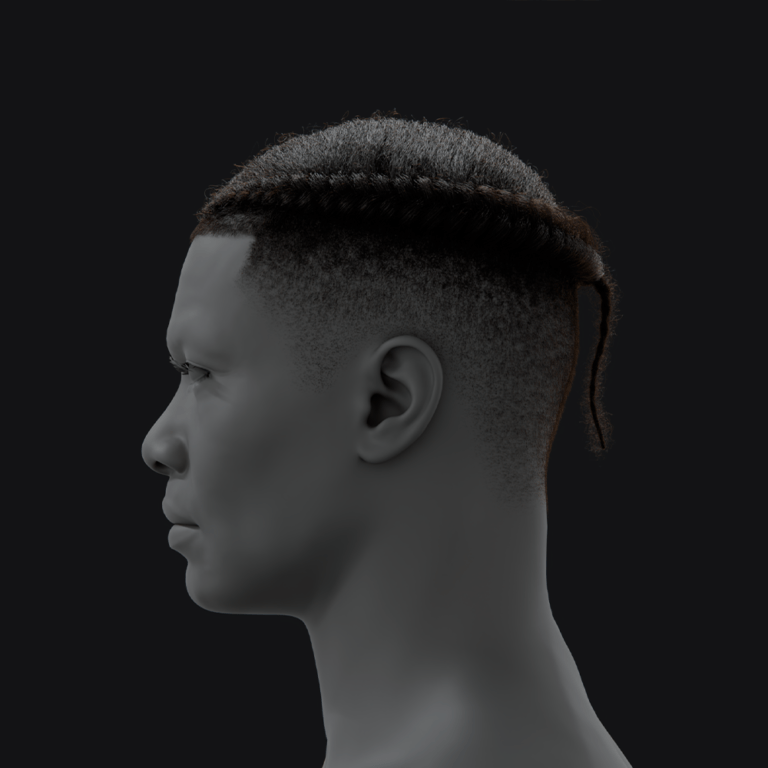

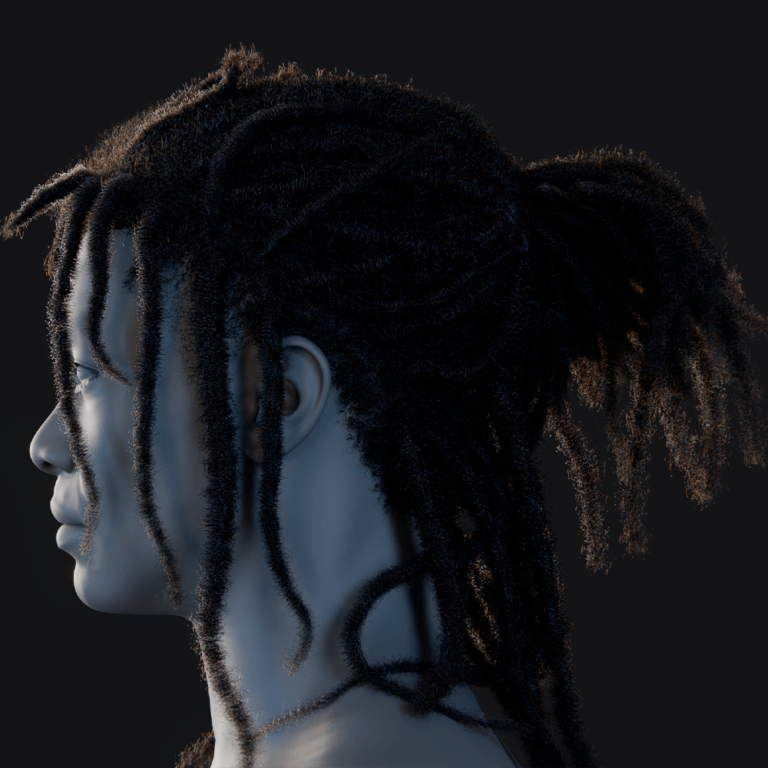

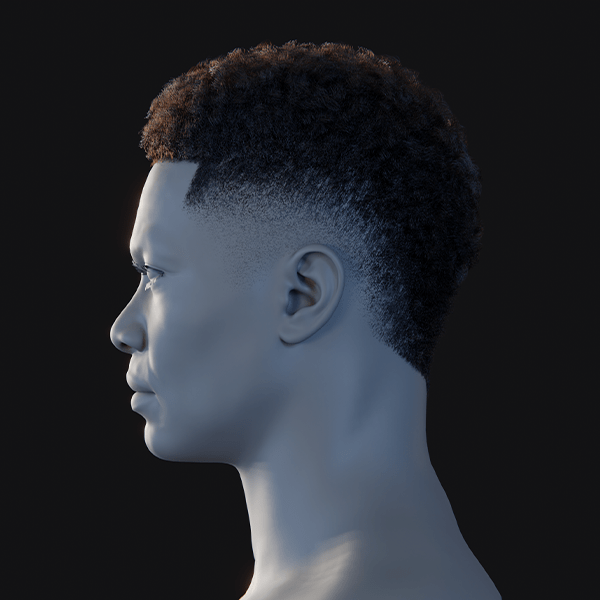

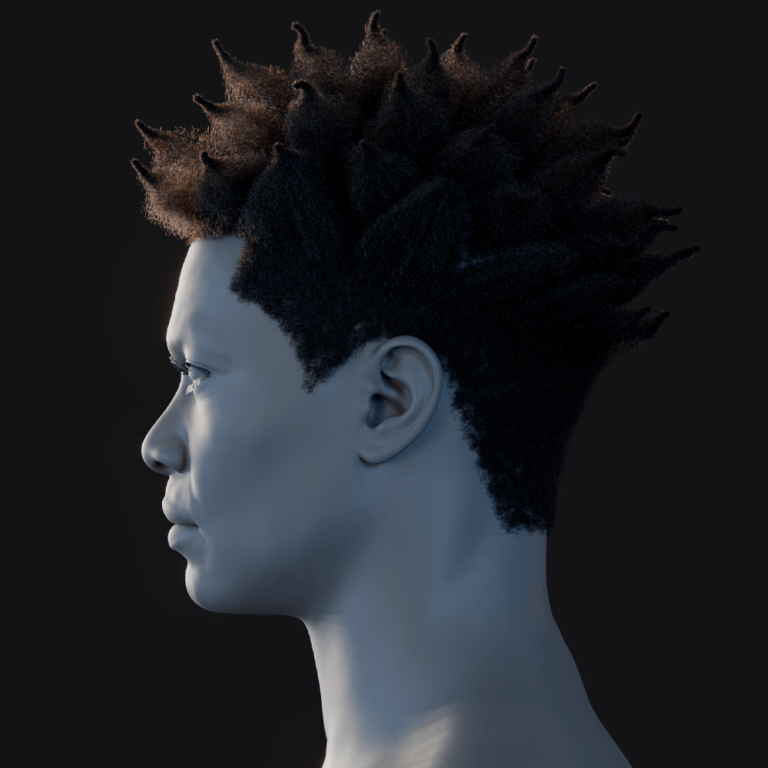

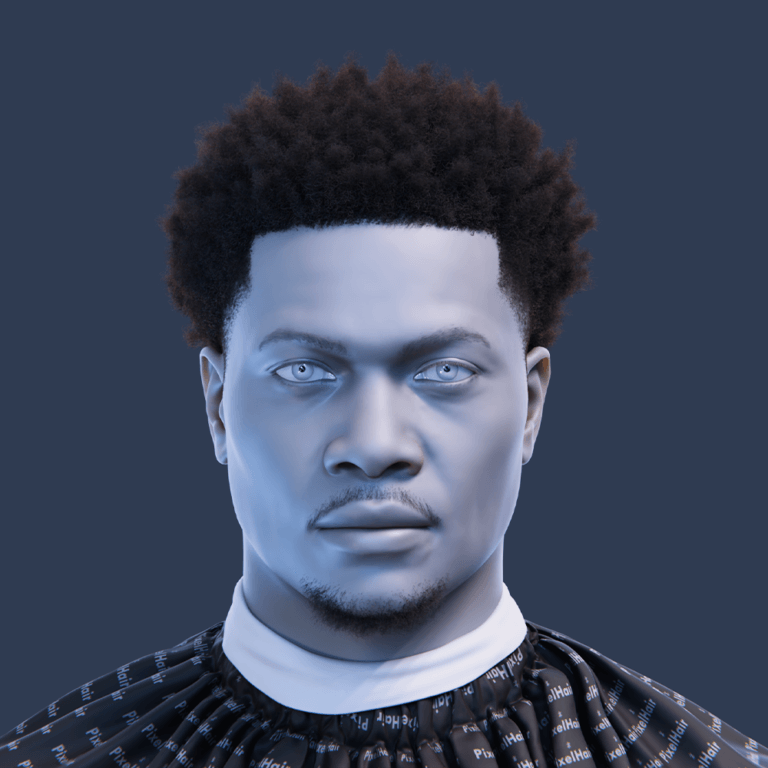

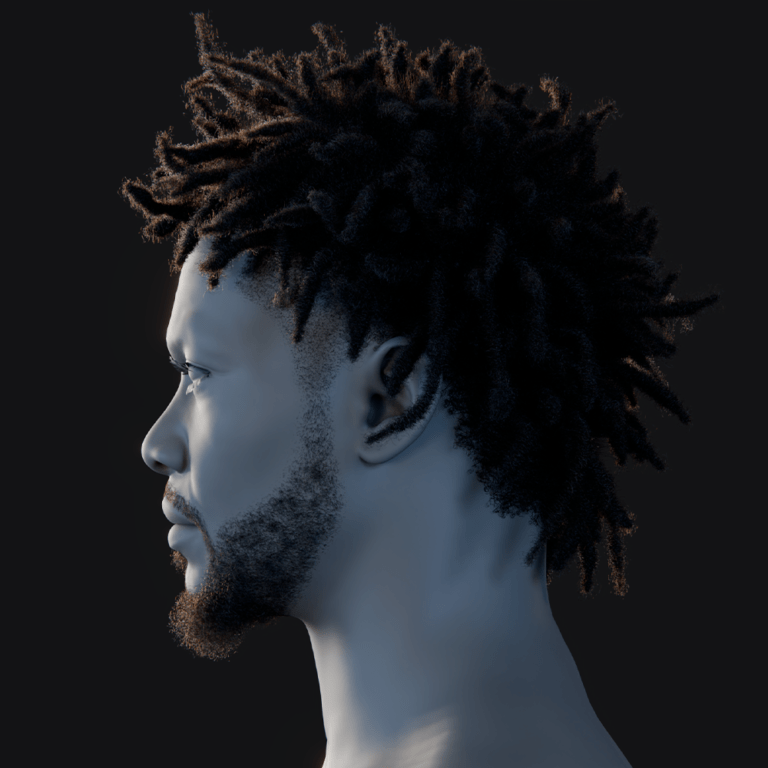

- Exporting Custom MetaHuman Grooms from Maya XGen to Unreal Engine: A Comprehensive Guide

- Apex Legends Plan With Two New Characters: The Road Ahead 2026 Roadmap (Season 29 Skirmisher + Season 32 New Legend)

- When Is PS6 Coming Out, How Much Will the PS6 Cost, and Will PS6 Be Backwards Compatible? (2026 Update)

- Resident Evil Requiem Includes A Surprising Homage To The Lord Of The Rings Movies — Fans Spot Hidden Shelob-Inspired Scene

- Metahuman Creator in Unreal Engine: How to Build High-Fidelity Digital Humans Step by Step

- Maxon Adds Real-Time Renderer Redshift Live to Redshift 2026.4: Features, Workflow Benefits, and What It Means for 3D Artists

- Sony’s Spider-Man Universe Headed For Reboot After Disastrous Morbius, Madame Web, And Kraven Movies: What Tom Rothman Confirmed