The rapid growth of generative AI has created powerful tools for image editing, but it has also enabled harmful use cases, most notably so-called “nudify” or “undress” apps. Recent findings suggest that these apps are surfacing in major app marketplaces, raising serious concerns about platform moderation, user safety, and legal accountability. This article examines how these apps reach the Apple App Store and Google Play, what risks they pose, and what users can do to stay protected.

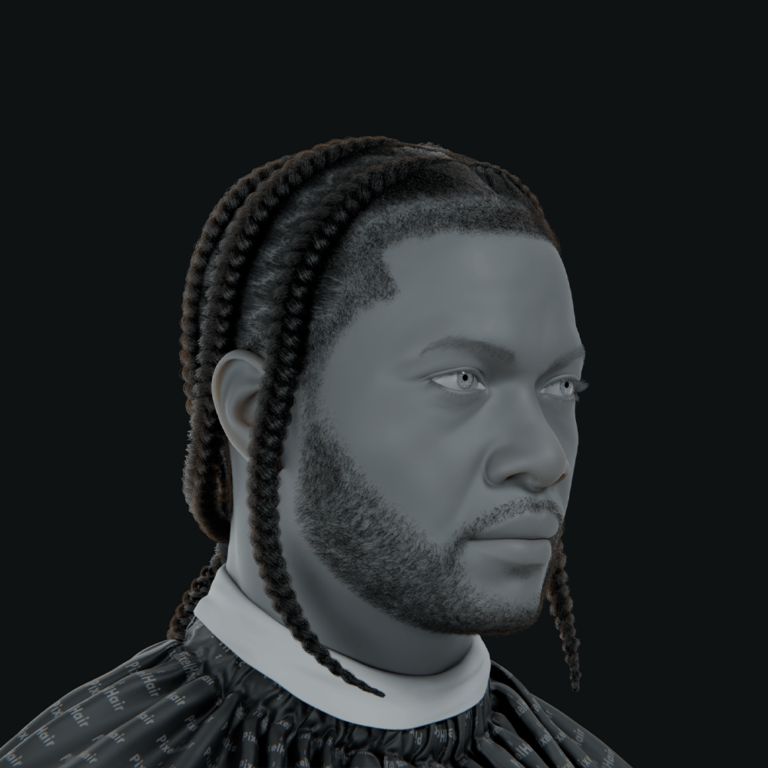

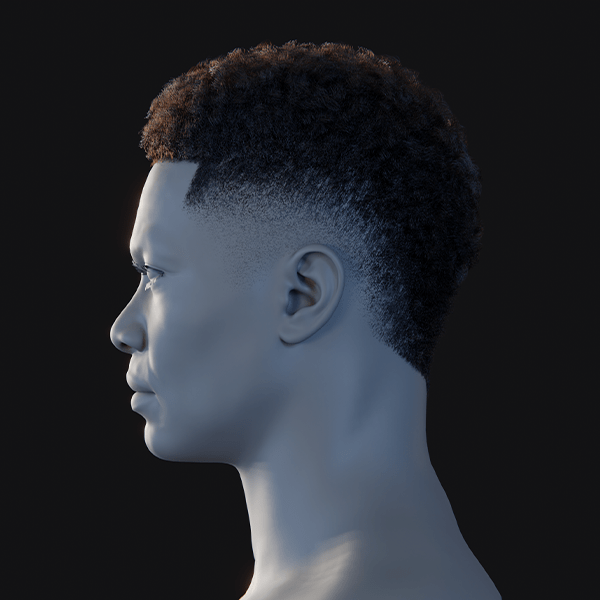

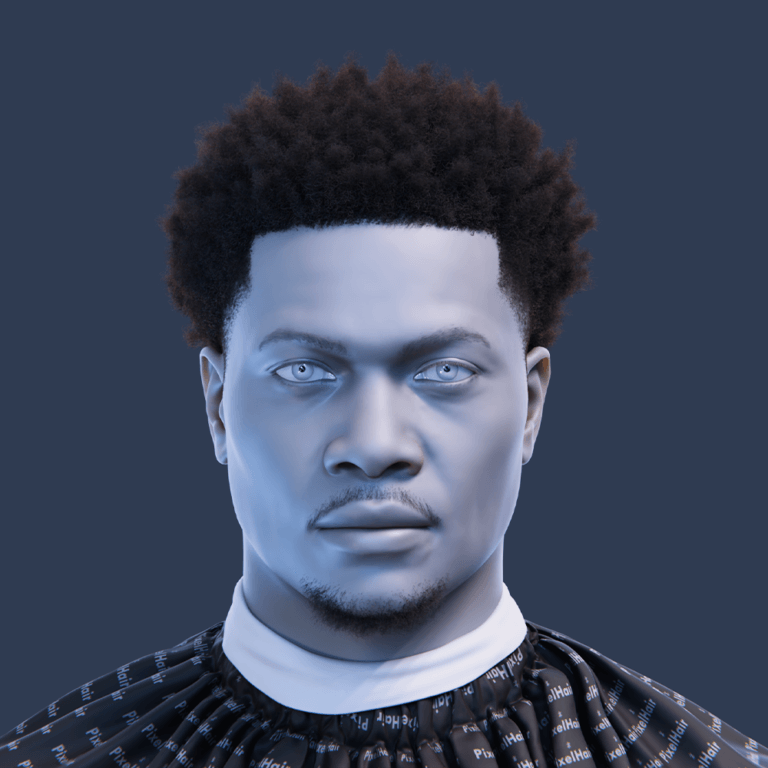

Apple App Store “nudify” apps showing up in search results

Reports indicate that searches on the Apple App Store can return apps that claim to digitally “remove clothing” from images. These apps may not explicitly use prohibited language in titles or descriptions but often rely on suggestive keywords and metadata to appear in search results.

Some of these apps disguise their functionality behind broader labels like “AI photo editor” or “image enhancer,” making it harder for moderation systems to flag them. As a result, users searching for legitimate editing tools may inadvertently encounter apps that promote unethical or abusive use cases.

Google Play Store “nudify” apps promoted through search and ads

On the Google Play Store, similar concerns have emerged. Certain apps reportedly appear not only in organic search results but also in paid placements via app install ads.

Because Google’s advertising ecosystem allows developers to bid on keywords, apps with questionable functionality can gain visibility if they comply superficially with ad policies while promoting harmful capabilities externally (e.g., via linked websites or in-app purchases).

What are “nudify” apps and how do they work?

“Nudify” apps are AI-powered tools designed to generate fake nude or semi-nude images from clothed photos. They typically rely on deep learning models trained on large datasets of human bodies.

These apps often use:

- Image-to-image generative AI models

- Body segmentation and reconstruction techniques

- Synthetic skin texture generation

The result is not a real image but a fabricated output that can appear convincing enough to mislead or harm individuals.

AI “undress” apps and the rise of nonconsensual deepfake porn

The emergence of these tools is closely tied to the broader rise of deepfake technology. Nonconsensual deepfake pornography involves generating explicit content featuring a person without their consent.

This trend has escalated due to:

- Increased accessibility of AI tools

- Lower technical barriers for users

- Monetization through subscriptions or credits

Victims, often women and minors, face reputational damage, harassment, and psychological harm.

Tech Transparency Project report on Apple and Google nudify apps (2026)

A 2026 investigation by the Tech Transparency Project found that both major app stores hosted or promoted apps capable of generating explicit deepfake images.

The report highlighted:

- Dozens of apps with “nudify” capabilities

- Millions of downloads across platforms

- Inconsistent enforcement of platform policies

It also noted that some apps bypass detection by offloading explicit features to external servers or web portals.

How App Store Search Ads can boost “nudify” and “undress” app downloads

Apple’s Search Ads platform allows developers to promote apps at the top of search results. While ads are reviewed, enforcement gaps can allow questionable apps to slip through.

These ads can:

- Target keywords related to photo editing or AI

- Appear as top results, increasing visibility

- Drive high download volumes quickly

This creates a financial incentive for developers to exploit loopholes in moderation systems.

Why app store moderation is struggling to remove nudify tools

Moderation challenges stem from several factors:

- Apps hiding explicit functionality behind generic interfaces

- Rapid updates that change app behavior after approval

- Use of external APIs to deliver restricted features

Additionally, automated review systems may fail to detect nuanced or context-dependent violations, while human review cannot scale to match submission volume.

Apple App Store rules on nonconsensual sexual content and deepfakes

Apple’s guidelines prohibit apps that include:

- Nonconsensual sexual content

- Pornographic material

- Defamatory or abusive content

Apps found violating these rules can be removed, and developer accounts may be terminated. However, enforcement depends on detection and reporting.

Google Play policies on sexual content, deepfakes, and harassment

Google Play policies similarly ban:

- Sexually explicit content

- Apps that promote harassment or exploitation

- Deepfake tools used for harm

Google also restricts misleading claims and requires transparency in app functionality, though enforcement remains inconsistent.

How to report nudify apps on iPhone (App Store reporting steps)

To report a suspicious app on iPhone:

- Open the App Store listing

- Scroll to the bottom of the page

- Tap “Report a Problem”

- Sign in with your Apple ID

- Select the issue (e.g., harmful or abusive content)

- Submit details

Users can also report apps via Apple’s official support website.

How to report nudify apps on Android (Google Play reporting steps)

To report an app on Android:

- Open the Google Play Store

- Navigate to the app page

- Tap the three-dot menu

- Select “Flag as inappropriate”

- Choose the relevant category

- Submit the report

Reports help improve moderation and can trigger review actions.

How to protect yourself from AI deepfake nude scams and extortion

To stay safe:

- Avoid sharing personal images with unknown platforms

- Use reverse image search to check whether your photos have been reposted or altered

- Enable strong privacy settings on social media

- Be cautious of apps requesting unnecessary permissions

If targeted, document evidence and report incidents to authorities and platforms immediately.

Parental controls to block explicit AI apps on iPhone and Android

Parents can reduce exposure by enabling controls:

On iPhone:

- Use Screen Time

- Restrict app downloads by age rating

- Block explicit content

On Android:

- Enable Google Family Link

- Set content filters in Play Store

- Monitor app activity

These tools help prevent minors from accessing harmful apps.

Are nudify apps illegal? New laws and enforcement trends in 2026

Legal frameworks are evolving rapidly. In 2026:

- Several regions have criminalized nonconsensual deepfake porn

- Laws increasingly target both creators and distributors

- Platforms face pressure to enforce stricter compliance

However, legality varies by jurisdiction, and enforcement can be inconsistent.

Safer AI photo editor alternatives with anti-nudification safeguards

Not all AI photo editors are harmful. Many legitimate tools include safeguards against misuse.

Safer alternatives typically:

- Restrict explicit content generation

- Use content moderation filters

- Provide transparent AI usage policies

Users should choose apps from reputable developers with clear ethical guidelines.

Frequently Asked Questions (FAQs)

- What is a nudify app?

  A nudify app uses AI to generate fake nude images from clothed photos.

- Are nudify apps real or scams?

  Some are functional, but many are scams designed to collect money or data.

- Can nudify apps be removed from app stores?

  Yes, but removal depends on detection and enforcement of policies.

- Are these apps legal to use?

  Legality varies, but using them to create nonconsensual images can be illegal.

- Can someone use my photos without permission?

  Yes, which is why privacy and caution online are critical.

- How do I report a deepfake image?

  Report it to the platform hosting the content and relevant authorities.

- Do Apple and Google allow these apps?

  Both prohibit harmful content, but enforcement gaps exist.

- Can parental controls block these apps?

  Yes, using built-in tools on iOS and Android.

- Are all AI photo editors dangerous?

  No, many are safe and include strict safeguards.

- What should I do if I’m targeted?

  Document evidence, report the incident, and seek legal advice if necessary.

Conclusion

The emergence of nudify apps highlights a growing challenge in the AI era: balancing innovation with safety and ethical responsibility. While Apple and Google have policies in place to prevent harmful applications, gaps in enforcement allow some tools to slip through. Users must remain vigilant, leverage reporting tools, and prioritize privacy to mitigate risks. At the same time, continued regulatory pressure and improved moderation systems will be essential to address this evolving threat.

Sources and citation

- Tech Transparency Project report on nudify apps (2026): https://www.techtransparencyproject.org

- Apple App Store Review Guidelines: https://developer.apple.com/app-store/review/guidelines/

- Google Play Developer Policy Center: https://play.google.com/about/developer-content-policy/

- Reporting a problem with an app (Apple Support): https://support.apple.com

- Google Play reporting and enforcement policies: https://support.google.com/googleplay
